Medical experts are increasingly concerned about patients using artificial intelligence tools for health advice, warning that these technologies can provide incorrect information and lead to dangerous health outcomes.

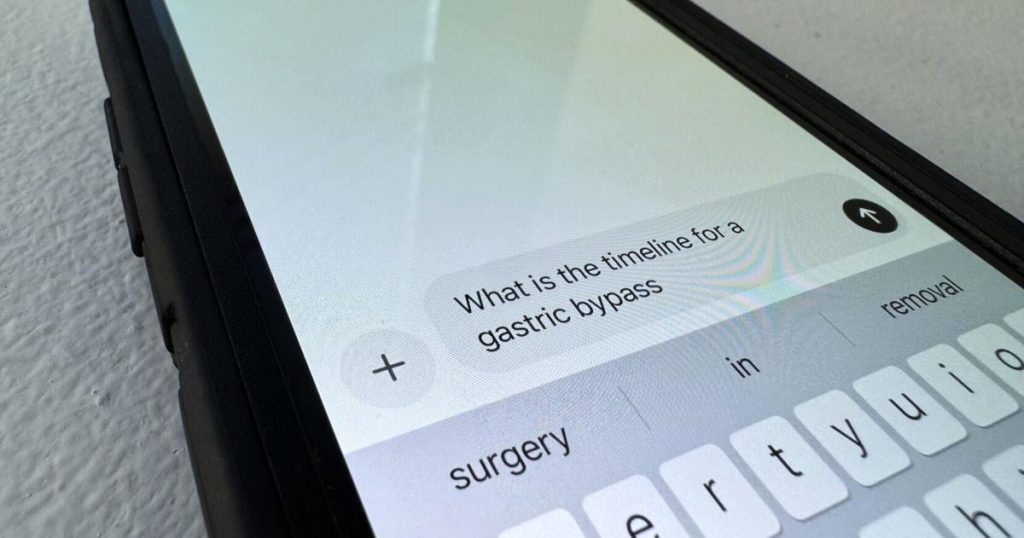

Dr. Kris Nemeth, an anesthesiologist with Baptist Health Paducah, says he’s noticing a troubling trend of patients arriving at appointments armed with health information obtained from AI chatbots. While he appreciates patients being proactive about their health, he cautions that AI systems like ChatGPT often deliver inaccurate medical information.

“The problem is that AI is good at providing information that sounds correct and authoritative, but it may not actually be accurate,” Dr. Nemeth explained. “These systems are trained on vast amounts of data from the internet, including outdated or incorrect medical information, and they don’t have the ability to determine what’s truly reliable.”

The consequences of relying on AI for medical advice can be serious. Patients might delay seeking proper care for serious conditions or attempt inappropriate self-treatment based on AI recommendations. Medical professionals report cases where patients arrived with dangerous self-diagnoses or refused recommended treatments because their AI consultation contradicted their doctor’s advice.

According to a recent study published in the Journal of the American Medical Association, AI chatbots provided completely accurate medical information in only about 67% of cases. Even more concerning, in approximately 5% of test cases, the AI systems gave potentially harmful recommendations that could lead to severe health complications if followed.

Healthcare providers acknowledge that the appeal of AI health tools is understandable. In some specialties, new patients wait an average of 26 days to see a physician, so immediate responses from AI can seem like an attractive alternative. Additionally, many patients face financial barriers to healthcare access or live in rural areas with limited medical services.

Dr. Lisa Cooper, Director of the Johns Hopkins Center for Health Equity, points out that the digital divide adds another layer of healthcare inequality. “Those with limited internet access or digital literacy can’t even access these AI tools, while others might rely too heavily on them because they have no alternatives,” she noted.

Medical associations across the country are developing guidelines for patients about the responsible use of AI health information. The American Medical Association recommends that patients use AI tools only as a supplement to, never a replacement for, professional medical care.

“Think of AI as a starting point for health questions, not the final word,” said Dr. Nemeth. “These tools can help you formulate questions for your doctor, but they shouldn’t be making your healthcare decisions.”

Healthcare systems are also exploring ways to integrate AI responsibly into the medical landscape. Some hospitals are piloting AI-assisted triage systems that work under physician supervision, allowing doctors to review AI recommendations before they reach patients.

Tech companies developing medical AI applications have responded to concerns by adding more disclaimers and emphasizing that their tools should not replace professional medical care. OpenAI, the creator of ChatGPT, recently updated its user guidelines to more prominently display warnings when users ask health-related questions.

Experts suggest patients follow simple guidelines when using AI for health information: verify information with reputable health websites like the CDC or Mayo Clinic, always consult healthcare providers about significant health concerns, and be skeptical of definitive diagnoses or treatment recommendations from AI systems.

“The technology is advancing rapidly, and there may come a day when AI becomes a reliable medical assistant,” Dr. Nemeth said. “But we’re not there yet, and the stakes are simply too high when it comes to your health to rely on systems that are still prone to significant errors.”

As AI continues to evolve, the medical community emphasizes that the doctor-patient relationship remains irreplaceable, with human judgment, experience, and the ability to consider a patient’s complete medical history still far superior to even the most sophisticated algorithms.

9 Comments

The potential for AI-driven misinformation to have dangerous health consequences is really concerning. Patients need to be aware of these risks and maintain a healthy skepticism when using these tools.

I can see both the benefits and risks of using AI for medical advice. While the technology can be helpful, it’s clear that human expertise is still vital when it comes to complex health issues. Patients should approach with caution.

This is an important issue as AI becomes more prevalent in our lives. I’m glad to see doctors being proactive about educating patients on the limitations of these systems when it comes to medical advice.

Yes, it’s critical that people understand the risks and don’t blindly trust AI, especially for serious health concerns. Consulting qualified professionals is still essential.

Definitely a valid concern. AI systems can provide information that sounds authoritative but may not be fully accurate or up-to-date. Patients should always double-check with their doctors before making any major health decisions.

Agreed. Doctors have years of training and experience that AI systems simply can’t match when it comes to complex medical issues.

This is an important warning from the medical community. AI systems, no matter how advanced, simply can’t replace the nuanced judgment and specialized knowledge of trained healthcare professionals. Relying too heavily on chatbots could lead to serious problems.

Interesting to see the medical community raising concerns about the risks of over-relying on AI for health advice. While these technologies can be helpful, it’s critical that patients verify information with qualified medical professionals.

I can understand the physicians’ perspective. While AI can be a useful tool, it shouldn’t replace human medical expertise. Patients need to be cautious about relying too heavily on chatbots for sensitive health matters.