Iranian Fake News Operation Uncovered Using AI-Generated ‘British’ Social Media Personas

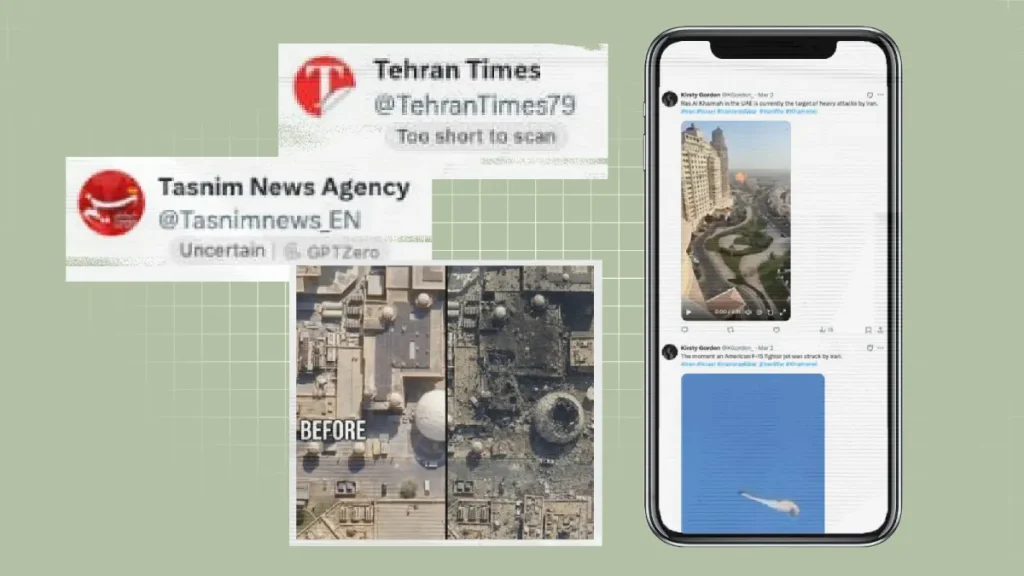

Security experts have uncovered an elaborate Iranian disinformation campaign employing artificial intelligence to create fictitious British social media personalities. These fabricated accounts have been spreading divisive content and propaganda across multiple platforms, raising new concerns about the evolving sophistication of state-backed influence operations.

The network, attributed to Iran’s Islamic Revolutionary Guard Corps (IRGC), operates dozens of convincing fake personas with AI-generated profile images that appear authentically British. According to researchers at Recorded Future, a threat intelligence firm that uncovered the operation, these accounts strategically amplify pro-Iranian messaging while attempting to inflame political and social tensions in the United Kingdom and United States.

“We’re seeing a concerning evolution in Iran’s disinformation tactics,” said Dr. Helen Warrell, a cybersecurity analyst who specializes in state influence operations. “By leveraging AI to create realistic Western personas, they’ve developed a more subtle and potentially more effective approach to information warfare.”

The fake accounts typically present themselves as ordinary British citizens, with seemingly authentic biographical details and consistent posting patterns that mimic genuine users. Many claim to be journalists, academics, or political commentators to establish credibility. The operation has been particularly active during periods of heightened geopolitical tension, such as the ongoing Israel-Hamas conflict and disputes over Iran’s nuclear program.

What distinguishes this campaign from earlier Iranian efforts is its technological sophistication. Previous Iranian influence operations often used stolen profile pictures or easily identifiable fake accounts. This newer generation employs advanced AI image generation to create faces that pass casual inspection and can withstand basic verification efforts.

“The quality of these AI-generated personas has improved dramatically in the past year,” noted cybersecurity researcher Marcus Jenkins from the Atlantic Council’s Digital Forensic Research Lab. “They’re no longer making obvious mistakes like asymmetrical facial features or bizarre background elements that used to give away synthetic images.”

The content spread by these accounts follows consistent themes that align with Iranian state interests: criticism of Western policies toward Iran, amplification of social divisions within Western societies, and promotion of narratives that undermine public trust in democratic institutions.

Social media platforms have struggled to identify and remove these sophisticated fake accounts. Meta, Twitter (now X), and other major platforms have taken down several networks linked to this operation, but new accounts quickly emerge with improved techniques to avoid detection.

The campaign also demonstrates how Iran has adapted its influence operations for different regional contexts. Content targeting British audiences focuses on Brexit divisions, economic inequality, and the monarchy, while US-targeted content emphasizes partisan political divisions and racial tensions.

Intelligence officials worry that these operations could become more disruptive as AI technology continues to advance. The ability to generate not just static images but potentially video and audio content that appears authentic poses significant challenges for maintaining information integrity online.

“What we’re witnessing is just the beginning,” warned former GCHQ analyst Richard Thompson. “As generative AI becomes more accessible and sophisticated, the barriers to creating convincing fake personas will continue to fall, making detection increasingly difficult.”

Media literacy experts emphasize the importance of critical consumption habits when engaging with political content online. They recommend verifying information through multiple sources, checking account histories for suspicious patterns, and being particularly skeptical of emotionally charged political content from unfamiliar sources.

The discovery comes amid broader concerns about foreign interference in Western democracies, with multiple countries developing increasingly sophisticated digital influence capabilities. Security agencies in both the UK and US have issued warnings about Iranian influence operations targeting their citizens, particularly in the lead-up to elections and during international crises.

As platforms and security researchers race to develop better detection methods, the cat-and-mouse game between state actors and those defending information ecosystems continues to escalate. AI-enabled information warfare is fast becoming a battlefield in its own right.

16 Comments

This is quite concerning. The use of AI-generated fake personas to spread disinformation is a worrying trend. We need to be vigilant and fact-check claims, especially on social media, to avoid being misled.

I agree, the sophistication of these operations is alarming. It highlights the need for better detection and mitigation of such influence campaigns.

This article raises valid points about the dangers of AI-driven disinformation campaigns. While the technology may enable more sophisticated tactics, it also presents opportunities for developing more effective detection and mitigation strategies. A multi-stakeholder approach will be key to addressing this challenge.

Absolutely. Collaboration between technology companies, policymakers, and the public will be essential in building resilience against the evolving threat of state-backed influence operations.

The deployment of AI-generated fake accounts to spread disinformation is a concerning development that undermines the integrity of online discourse. Strengthening media literacy and critical thinking skills among the public will be crucial to combating the spread of such manipulative content.

The article raises valid points about the evolving tactics used by state actors to sow division. While AI advances can enable these threats, they may also help in developing more effective detection and response measures.

That’s a fair assessment. Addressing the challenge of AI-generated disinformation will require a multifaceted approach involving technology, policy, and public education.

This is a troubling example of how advanced technology can be exploited for malicious purposes. Strengthening international cooperation and information-sharing may be necessary to tackle these cross-border influence operations more effectively.

This article highlights the need for greater scrutiny and accountability around the use of AI in social media. Policymakers and technology companies must work together to develop effective safeguards and detection methods to counter the threat of state-sponsored disinformation campaigns.

Agreed. Collaborative efforts between the public and private sectors, as well as international cooperation, will be essential in addressing this evolving challenge.

The article highlights the need for greater transparency and accountability around the use of AI in social media. Responsible development and deployment of these technologies should be a priority to mitigate potential harms.

Agreed. Proactive measures, such as improved platform policies and user education, can help address the growing threat of AI-driven disinformation campaigns.

This is a concerning development, as the use of AI to create convincing fake accounts could make it increasingly difficult to identify and counter state-sponsored influence operations. Maintaining digital literacy and critical thinking skills will be crucial.

The use of AI to create convincing fake personas is a worrying development that could undermine the integrity of online discourse. Addressing this challenge will require a combination of technological solutions, policy reforms, and public awareness initiatives.

The article raises valid concerns about the potential impact of these AI-generated fake accounts on public discourse and trust. Developing robust fact-checking mechanisms and digital literacy programs will be crucial to mitigate the spread of disinformation.

Absolutely. Empowering users to critically evaluate online content and identify manipulative tactics is key to building resilience against such influence campaigns.