YouTube Bans Iranian AI-Generated Propaganda Videos in Landmark Moderation Decision

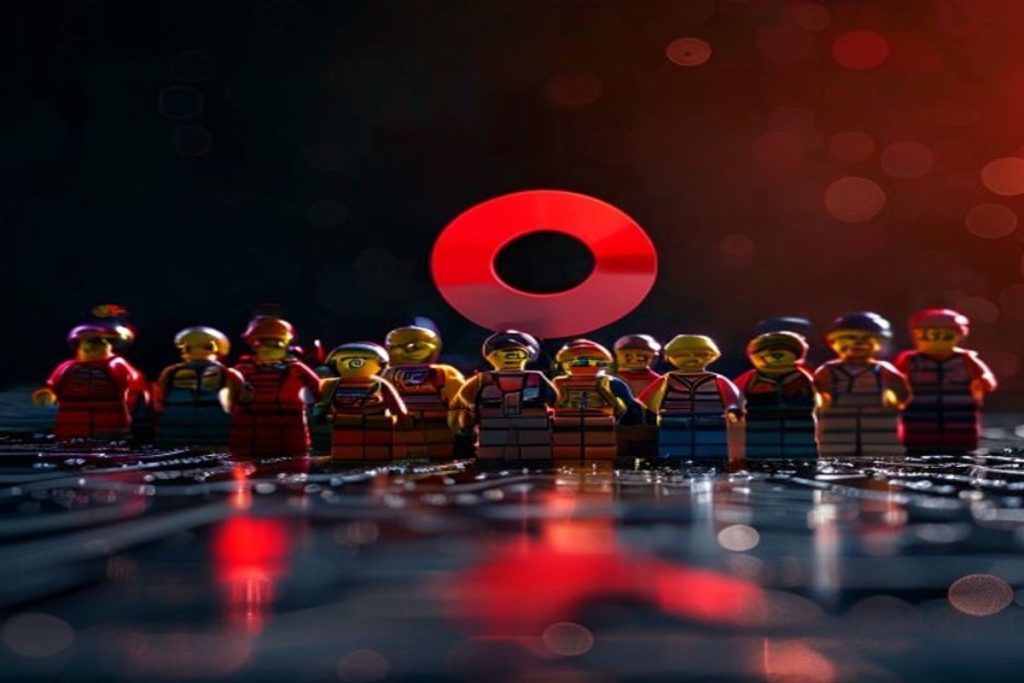

Iran’s Foreign Ministry has formally condemned YouTube following the platform’s termination of a channel linked to Supreme Leader Khamenei’s office. The channel, which belonged to the Supreme Leader’s Focal Point, had been publishing AI-generated videos that depicted Iranian military narratives using Lego-style animation.

The decision, announced on April 14, marks a significant shift in how major platforms handle state-sponsored synthetic media. YouTube cited sanctions-compliance violations and "coordinated inauthentic behavior" as the basis for the removal.

Tehran responded with strong diplomatic language, characterizing the takedown as an attack on digital sovereignty and free expression. Iranian officials have threatened to file complaints with international telecommunications bodies, signaling the government’s concern about maintaining its media presence on Western digital platforms.

Industry observers see significance in how YouTube classified the content. By giving these stylized AI videos the same enforcement weight as conventional military propaganda, the platform has signaled that disguising state-sponsored content in a cartoon-like visual style will not exempt it from enforcement action.

“This is a clear message that content moderation policies are catching up to the creative ways state actors try to circumvent them,” said a digital policy expert who requested anonymity due to the sensitivity of the issue. “The medium is no longer providing cover for the message.”

The banned channel had been gaining significant traction leading into 2026, leveraging algorithms that favor visually distinctive short-form content. Analysts describe the Lego-style videos as part of a broader “meme-warfare” strategy aimed specifically at Western audiences. The animation style was deliberately chosen for its universally disarming quality, potentially allowing political narratives to reach viewers who might otherwise avoid traditional propaganda formats.

The state-affiliated channel had been quietly pivoting toward this content strategy, creating videos that could easily cross language barriers and cultural contexts, a significant advantage in global information operations.

This enforcement action reflects a rapid evolution in how major tech platforms are approaching AI-generated content from state actors. Traditional content moderation systems were primarily designed to identify problematic text and conventional imagery, not visually benign content that carries political messaging through metaphor and context.

“The challenge for platforms has always been distinguishing between creative expression and coordinated information operations,” explained Dr. Maria Sanchez, a researcher specializing in digital propaganda at Columbia University. “What’s new here is the recognition that even innocent-looking animation can serve strategic communication objectives when produced by sanctioned entities.”

Major platforms have been investing heavily in detection tools specifically designed for what industry insiders now call “adversarial synthetic media”—content that appears visually harmless but carries political intent. These tools are becoming increasingly sophisticated at identifying patterns in AI-generated content that might indicate state sponsorship or coordinated campaigns.

For AI generation tool providers, this case raises important questions about potential liability and responsibility. As detection systems improve, companies providing AI creation capabilities may face increased pressure to implement stronger know-your-customer requirements or more restrictive licensing terms to prevent their tools from being used in sanctions-violating activities.

The broader implication points toward an increasingly fragmented global information environment. As Western platforms enforce stricter policies against state-affiliated content from sanctioned entities, those governments will likely accelerate development of alternative distribution infrastructure.

“What we’re witnessing is the early stages of a splintered digital landscape,” said technology policy analyst James Warren. “When mainstream platforms close doors, state actors don’t stop creating content—they simply find or build new channels where Western moderation can’t reach.”

The YouTube ban on Iran's AI-generated Lego videos may eventually be remembered as a turning point in the evolving relationship between synthetic media, platform governance, and geopolitical information operations. It offers a case study in how even creatively disguised propaganda is coming under greater scrutiny as more of the information environment becomes AI-mediated.

11 Comments

This story touches on some complex issues around free speech, national security, and the regulation of online content. It’s an area that will likely see continued debate and evolution.

The diplomatic response from Iran highlights how sensitive this issue is from a geopolitical perspective. Platforms have to navigate these tricky waters carefully.

I appreciate YouTube taking action to remove these AI-generated propaganda videos. Synthetic media poses real challenges for platforms trying to maintain integrity and authenticity.

It will be interesting to see if this decision sets a new standard for how platforms approach state-sponsored content using emerging technologies like AI.

I’m curious to see how Iran responds to this ban. They seem intent on maintaining an online media presence, even if it involves using AI to skirt platform rules.

This situation highlights the challenges platforms face in moderating complex geopolitical content. Striking the right balance between free expression and combating propaganda is an ongoing challenge.

The use of Lego-style animation to create AI-generated propaganda is a creative but concerning tactic. Platforms will need to stay vigilant in detecting and addressing these types of synthetic media.

The use of Lego-style animation to create AI-generated propaganda videos is an interesting and concerning tactic. It speaks to the evolving nature of information warfare in the digital age.

YouTube’s decision to ban these videos sets an important precedent. It will be interesting to see if other platforms follow suit in how they handle this type of synthetic media.

This is an interesting precedent for how major platforms handle state-sponsored synthetic media. Regulating AI-generated propaganda is a tricky balance between free speech and national security concerns.

YouTube’s decision to remove these Lego-style videos suggests they take this issue seriously. It will be worth watching how other platforms respond to similar content.