The decade-long battle against social media disinformation campaigns has entered a new chapter with X’s controversial attempt to expose foreign actors manipulating online discourse. The latest effort comes nearly ten years after BuzzFeed News first exposed the Internet Research Agency, a Russian “troll farm” that orchestrated coordinated propaganda campaigns across social platforms.

Since at least the 2008 presidential campaign, Russian operatives have run numerous fake social media accounts to shape American political discourse. Despite this long-documented threat, the United States is still debating the scope of foreign interference while fraudulent accounts proliferate across platforms.

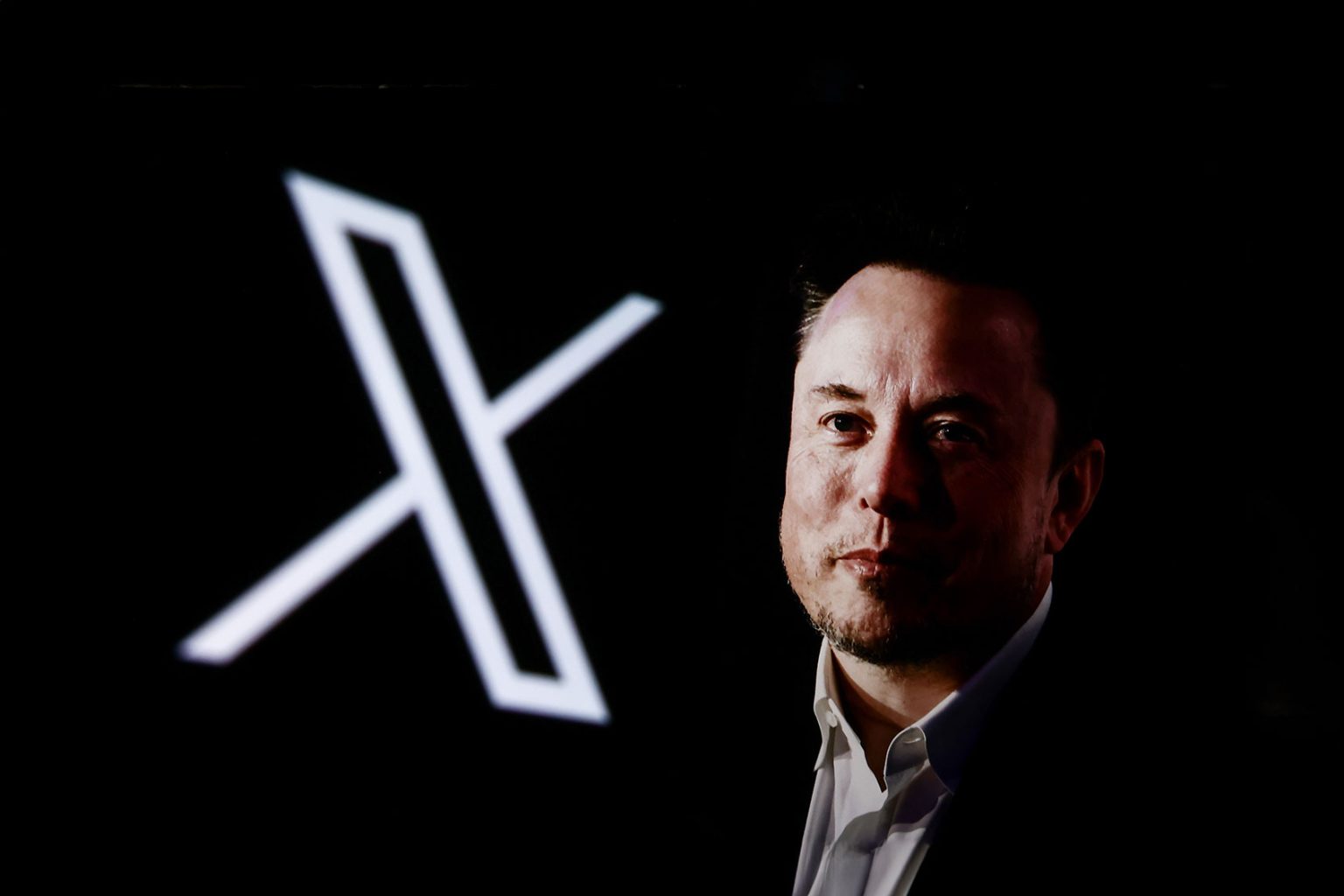

The problem intensified after Elon Musk’s 2022 acquisition of Twitter (now X). Under Musk, the platform reinstated accounts previously banned for hate speech and disinformation, including that of Donald Trump, then a former president. A 2024 CNN investigation of 56 pro-Trump accounts on X found “a systematic pattern of inauthentic behavior.” Fifteen of the accounts carried blue verification checkmarks despite their questionable authenticity, and eight used stolen photos of European influencers to appear credible.

Critics point to Musk’s personal amplification of extremist content and his retaliation against journalists as evidence of deteriorating platform standards. Under his ownership, X has dismantled the teams previously responsible for combating falsehoods and conspiracy theories. The platform’s engagement-driven monetization model rewards inflammatory content that fuels culture wars, a dynamic that served Trump’s base until recent infighting within the MAGA movement threatened the coalition’s stability.

In mid-November, after Fox News’ Katie Pavlich publicly claimed that “Foreign bots are tearing America apart,” X’s head of product, Nikita Bier, promised action within 72 hours. The hastily implemented result was a feature that displays where an account is based, intended to identify foreign accounts masquerading as American users.

The rollout was chaotic and error-prone, yet it drew praise from conservative figures including Florida Governor Ron DeSantis and podcaster Dave Rubin, who was among the commentators paid by Tenet Media, a company the Justice Department alleged in 2024 was covertly funded by employees of Russian state media outlet RT (Rubin has said he was unaware of the arrangement). Several prominent MAGA-aligned accounts turned out to be operating from Nigeria, exposing a pattern in which users deliberately pose as right-wing agitators to maximize engagement.

Bier temporarily suspended the feature after acknowledging data inaccuracies. Critics warned the tool endangered vulnerable users, particularly journalists reporting on authoritarian regimes, who could be targeted based on incorrectly displayed locations.

Journalists are particularly vulnerable to these disinformation campaigns. Political reporters once relied heavily on Twitter for breaking news and analysis; Musk’s rollback of safeguards has eroded that role, yet journalists remain disproportionately affected by inauthentic accounts.

X isn’t alone in facing scrutiny over platform safety. Recently unsealed court documents show that Instagram’s former head of safety testified the company maintained a “17x” strike policy for accounts engaged in human trafficking: an account could accumulate 16 violations and would be suspended only on the 17th.

More troubling still, the documents allege that Meta, Instagram’s parent company, studied solutions to child safety problems but shelved the fixes to protect growth. Internal research reportedly established causal links between Meta’s platforms and mental health problems, including anxiety, depression, and negative social comparison. One study, internally labeled “Project Mercury,” found that users who deactivated Facebook or Instagram for a week reported lower levels of depression, loneliness, and anxiety.

These revelations highlight a fundamental conflict: social media platforms thrive on engagement metrics, and inflammatory content reliably drives user interaction. Platform operators therefore have little financial incentive to implement guardrails that might reduce engagement, regardless of where the content originates.

Industry experts argue that these systems were deliberately built to maximize profit at the expense of transparency and safety. The situation demonstrates why self-regulation is insufficient: platforms built around engagement-driven business models are unlikely to voluntarily sacrifice revenue for public welfare. What’s needed, critics maintain, is comprehensive regulatory reform rather than incremental improvements to content moderation.
