
Dense Retrieval Breakthrough Challenges AI Giants in Fact-Checking Arena

Researchers at the University of Galway have developed a fact verification system that challenges the dominance of large language models in combating online misinformation. The team, led by Alamgir Munir Qazi, John P. McCrae, and Jamal Abdul Nasir, has created DeReC (Dense Retrieval Classification), a framework that exceeds the accuracy of more complex AI systems on benchmark tests while dramatically reducing processing time.

In an era where misinformation spreads rapidly across digital platforms, the need for efficient and reliable fact-checking tools has never been more urgent. Manual verification processes remain slow and resource-intensive, creating a pressing demand for automated solutions that can scale effectively.

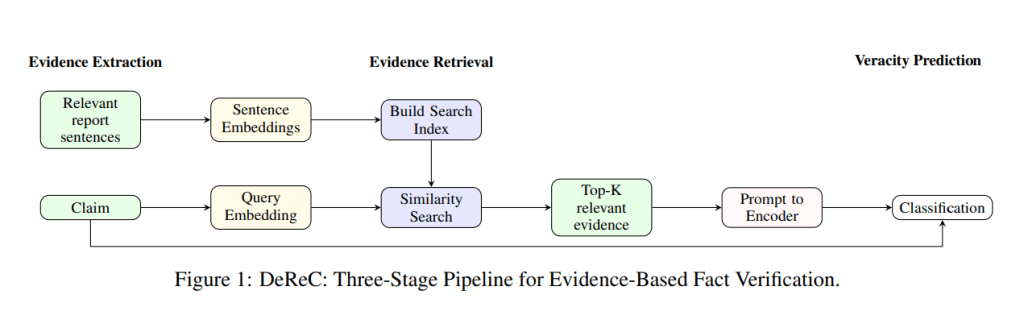

DeReC takes a fundamentally different approach from current state-of-the-art systems. Rather than relying on the generation capabilities of large language models (LLMs), which are prone to hallucinations and require significant computational resources, DeReC employs a three-stage pipeline centered around evidence retrieval and focused classification.

“The system uses sentence embeddings and similarity search algorithms to quickly identify the most relevant information from source documents,” explained one of the researchers. “This grounded approach provides more reliable verification than methods that generate explanations which might themselves contain inaccuracies.”
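To make the retrieval idea concrete, here is a minimal sketch of similarity search over sentence embeddings. It is not the researchers' code: `toy_embed` is a hypothetical stand-in (a hashed bag of words) for a real sentence-embedding model, and `retrieve`, the sample sentences, and the choice of `k` are assumptions made purely for illustration.

```python
import math
import re
import zlib
from typing import Callable, List, Tuple

Vector = List[float]


def toy_embed(text: str, dim: int = 256) -> Vector:
    """Hypothetical stand-in for a sentence-embedding model.

    Hashes each word into one of `dim` buckets and L2-normalises the counts,
    so the dot product of two vectors equals their cosine similarity.
    """
    vec = [0.0] * dim
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        vec[zlib.crc32(token.encode("utf-8")) % dim] += 1.0
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else vec


def retrieve(
    claim: str,
    sentences: List[str],
    embed: Callable[[str], Vector] = toy_embed,
    k: int = 2,
) -> List[Tuple[float, str]]:
    """Return the k evidence sentences most similar to the claim."""
    claim_vec = embed(claim)
    scored = [
        (sum(a * b for a, b in zip(claim_vec, embed(sentence))), sentence)
        for sentence in sentences
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:k]


evidence = [
    "The council approved the budget on Monday.",
    "Rainfall was above average this spring.",
    "A new bakery opened downtown.",
]
top = retrieve("The city council approved the new budget", evidence, k=2)
for score, sentence in top:
    print(f"{score:.2f}  {sentence}")
```

In a sketch like this, the retrieved sentences would then be passed to a classifier together with the claim. Because the embedding function is an argument, a stronger model can be swapped in without changing the search step.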

The performance improvements are substantial. In benchmark testing, DeReC achieved an F1 score of 65.58% on the RAWFC dataset, significantly outperforming previous leading methods that topped out at 61.20%. Even more impressive are the efficiency gains – the system reduces processing time by up to 95% on the RAWFC dataset and 92% on LIAR-RAW compared to explanation-generating language models.
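For readers unfamiliar with the metric, the short sketch below shows how an F1 score combines precision and recall, and works out the size of the reported gap. The two F1 figures come from the article; the function name and the choice to express the gap in relative terms are illustrative assumptions, and the article does not say which F1 averaging scheme the benchmark uses.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall (returns 0.0 if both are zero)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# Figures reported for the RAWFC dataset, in percent.
derec_f1 = 65.58
previous_best_f1 = 61.20

absolute_gain = derec_f1 - previous_best_f1              # gap in percentage points
relative_gain = absolute_gain / previous_best_f1 * 100   # gap relative to the old best

print(f"Absolute gain: {absolute_gain:.2f} points")
print(f"Relative gain: {relative_gain:.1f}%")
```

On these numbers the gap is 4.38 percentage points, or roughly 7% relative to the previous best result.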

These efficiency improvements stem from DeReC’s lightweight framework, which utilizes just 1.5 billion parameters – a fraction of what’s required by most large language models. This makes the system not only more accurate but also more practical for real-world deployment where computational resources may be limited.

The Galway team’s research challenges a growing assumption in the AI field that ever-larger models are necessary for improved performance on specialized tasks. Their findings suggest that carefully engineered, targeted systems can actually outperform general-purpose AI in specific applications like fact verification.

“This is particularly significant as we see the ongoing arms race in AI development pushing toward increasingly massive models,” noted an independent expert. “The Galway research demonstrates that smarter, not necessarily bigger, approaches can deliver superior results in some domains.”

The modular design of DeReC also offers advantages for future development. As improved embedding models emerge, they can be easily integrated into the system, ensuring it remains adaptable and scalable. This addresses a common criticism of highly specialized AI systems – that they quickly become obsolete as technology advances.

The researchers acknowledge certain limitations, particularly that retrieval quality depends heavily on the completeness and impartiality of the evidence corpus. Future work will explore dynamic evidence corpus updates, multilingual verification capabilities, and lightweight methods for generating explanations to complement the system’s classifications.

As social media platforms and news organizations grapple with the proliferation of false information, systems like DeReC could provide a more practical path forward than current approaches relying on resource-intensive language models. By combining accuracy with efficiency, such systems may finally offer scalable solutions to the persistent challenge of online misinformation.

The research has been published on the preprint server arXiv and is already generating significant interest among academic and industry professionals working on automated fact-checking systems.


12 Comments

As a skeptic, I’m interested to see the real-world performance of this dense retrieval system. Accuracy is crucial, but I’d also want to know the limitations and potential biases. Fact-checking is a complex challenge that requires vigilance.

The team’s approach of using a focused pipeline instead of relying on large language models is intriguing. I look forward to seeing how it stands up to further testing and real-world deployment.

Interesting development in the fight against misinformation. Efficient fact-checking tools are crucial in the digital age. I’m curious to see how this dense retrieval approach compares to other AI-based systems in terms of accuracy and scalability.

This could be a valuable addition to the arsenal against fake news. The speed and accuracy improvements are promising.

This is an intriguing development in the fight against online misinformation. Reducing processing time while maintaining accuracy is a significant achievement. I’m interested to see how this dense retrieval system compares to other AI-based fact-checking tools, especially in the mining and commodities space.

The focus on evidence retrieval is a key aspect of this system. Verifying claims against credible sources is crucial for effective fact-checking, especially in technical domains like mining and energy.

This is an important advancement in the field of automated fact-checking. Reducing processing time while maintaining accuracy is a significant achievement. I wonder how well this system would perform on complex, nuanced claims compared to human fact-checkers.

The focus on evidence retrieval is a smart approach. Verifying claims based on credible sources is crucial for effective fact-checking.

This is an exciting development in the fight against online misinformation. Improving the speed and accuracy of fact-checking is vital in our digital age. I’m curious to see how this system performs across different types of claims and how it evolves over time.

The emphasis on evidence retrieval is a promising aspect of this system. Grounding fact-checking in credible sources is essential for building trust and credibility.

As someone interested in the mining and commodities space, I’m glad to see advancements in automated fact-checking. Misinformation in this sector can have real-world consequences, so tools like DeReC could be invaluable. I wonder how it would handle claims related to resource extraction and energy production.

Leveraging dense retrieval to enhance fact-checking is a smart approach. I’m curious to see how this system performs on complex, technical claims within the mining and energy domains.