FAKE IMAGE SHOWS MADURO HANDCUFFED IN VEHICLE, EXPERTS CONFIRM AI GENERATION

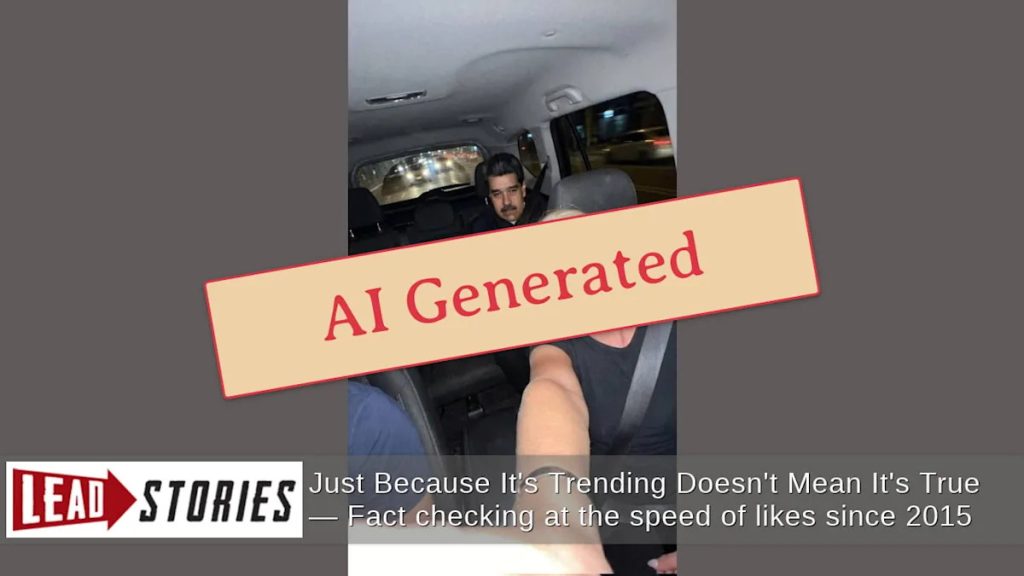

A digitally fabricated image depicting Venezuelan President Nicolás Maduro handcuffed in the back seat of a car has been circulating on social media. Multiple artificial intelligence detection tools have confirmed that the photo is not authentic.

The image, which shows a blonde woman taking a selfie with what appears to be a detained Maduro, was shared on X (formerly Twitter) by the account @Partisangirl on January 4, 2026. The post, which has since gained significant traction, was accompanied by the caption: “The United States deep state is not just a clown show it’s the whole circus.”

Digital forensic analysis tools quickly identified telltale signs of AI manipulation. Google’s Gemini technology detected a SynthID watermark embedded within the image – a digital signature that indicates all or part of the content was created or altered using Google’s artificial intelligence tools.

Further verification came from Hive Moderation’s AI-generated content detector, which assessed with 93.3 percent confidence that the image was artificially created. The analysis specifically pointed to Google’s Gemini 3 as the AI model responsible for generating the fabricated scene.

This incident highlights the growing challenge of misinformation in the digital age, particularly regarding political figures in geopolitically sensitive regions. Venezuela, under Maduro’s leadership since 2013, has been a focal point of international tensions, especially with the United States, which has imposed various sanctions against the government.

The proliferation of such convincingly altered images poses significant risks to public discourse about international relations. Venezuela remains a politically polarized nation, with Maduro’s leadership contested by opposition forces both within the country and internationally. False visual evidence like this fabricated image can inflame tensions or create confusion about actual events.

Social media platforms have struggled to contain the spread of AI-generated misinformation. Despite advances in detection technology, as demonstrated by the tools that identified this particular fake, many manipulated images continue to circulate widely before being debunked.

The timing of such content is particularly concerning as it coincides with ongoing political instability in Venezuela and shifting diplomatic relations in the region. Fabricated images depicting the arrest or detention of a sitting head of state could potentially trigger diplomatic incidents or public unrest if taken at face value.

Media literacy experts emphasize the importance of verifying images before sharing them, particularly those showing extraordinary or politically charged situations. The growing sophistication of AI-generated imagery makes it ever harder for average users to authenticate an image by eye.

Digital watermarking systems like SynthID represent an important technological response to this challenge, embedding invisible markers in AI-generated content that specialized tools can detect. However, not all AI generation tools implement such safeguards, and detection technology remains in a constant arms race with ever-improving generation capabilities.

As AI-generated visual content becomes more prevalent and harder to distinguish from authentic media, verification tools and critical media consumption skills will become increasingly essential components of informed citizenship in the digital age.

The fabricated Maduro image serves as a reminder that even the most visually convincing content requires scrutiny, particularly when it portrays politically sensitive scenarios that would represent major geopolitical developments if true.

16 Comments

This incident serves as a reminder that we should always approach online content, especially viral or sensational material, with a healthy dose of skepticism. Verifying the source and authenticity of digital media is crucial in an age of deepfakes and AI-generated fakes.

Well said. Fact-checking and relying on authoritative sources are essential skills in the digital age, as the line between reality and fabrication can become increasingly blurred.

The use of AI to create fake images and spread misinformation is concerning. While the technology is advancing, it’s reassuring to see that detection tools are also improving to identify these manipulations.

You’re right, the battle against digital disinformation is an ongoing one. But the fact that experts were able to quickly verify this image as artificial is a positive sign that the tools to combat such content are becoming more sophisticated.

Interesting, this ‘viral’ selfie seems to be a fabrication. AI-generated content can be quite convincing these days, but it’s important to verify the authenticity of images, especially those involving public figures.

Agreed, digital forensics can uncover telltale signs of AI manipulation. It’s good that experts were able to detect the SynthID watermark and confirm this was an artificial creation, not an authentic photo.

While AI-generated content can be quite convincing, it’s reassuring to see that experts have the tools and expertise to identify these manipulations. This case reinforces the importance of critical thinking and fact-checking when consuming digital media.

Agreed. The ability to quickly verify the authenticity of this image is a positive sign that the battle against digital disinformation is progressing, even as the technology behind it continues to advance.

This case highlights the importance of media literacy and being cautious about the origin and veracity of online content, especially when it involves public figures or sensitive political situations.

Absolutely. In an age of rampant misinformation, we all need to be more discerning consumers of digital media and rely on reputable sources to separate fact from fiction.

This is a good reminder to be skeptical of online content, even if it seems sensational or goes viral. Fact-checking and verifying the sources is crucial, especially for images and videos that could be digitally manipulated.

Absolutely. In an era of deepfakes and AI-generated media, it’s essential that we approach online content with a critical eye and rely on authoritative sources to validate authenticity.

The identification of this image as AI-generated is a testament to the rapid advancements in detection technologies. While the use of such tools to create false content is concerning, it’s reassuring to see that experts are staying ahead of the curve in identifying these manipulations.

Absolutely. The ability to quickly verify the authenticity of this image is a positive sign that the fight against digital disinformation is progressing, even as the underlying technology becomes more sophisticated.

It’s concerning to see how easily AI can be used to create and spread false images. This incident serves as a wake-up call for the need to improve digital authentication and fact-checking processes.

You’re right, this is a concerning development that highlights the growing challenge of verifying the authenticity of online content. Robust detection tools and heightened media literacy will be crucial going forward.