Fake Donald Trump Jr. Audio Supporting Russia in Ukraine War Exposed as Deepfake

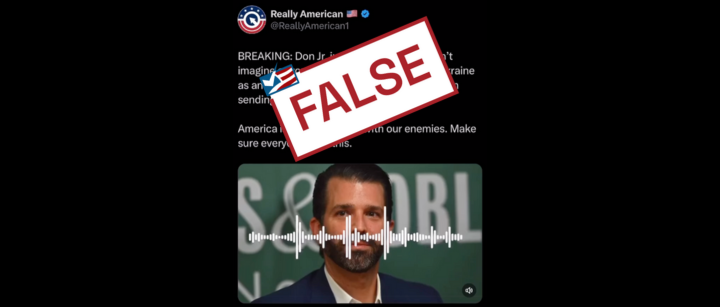

A sophisticated AI-generated audio clip falsely depicting Donald Trump Jr. saying the United States “should have been sending weapons to Russia” instead of Ukraine has spread across social media platforms, prompting swift denunciations from Trump Jr.’s team.

“This is 100% fake AI generated audio,” said Andrew Surabian, a Republican strategist and spokesperson for Trump Jr., in a statement posted on X (formerly Twitter) that was later reshared by Trump Jr. himself.

The fabricated clip was designed to mimic the February 25 episode of Trump Jr.’s podcast “Triggered with Donald Trump Jr.,” creating the illusion of Trump Jr. responding to a caller. The audio included inflammatory statements suggesting America should have allied with Russia rather than Ukraine in the ongoing conflict.

“I honestly can’t imagine that anyone in their right mind picking Ukraine as an ally when Russia is the other option… The U.S. should have been sending weapons to Russia,” the fake audio claimed.

Digital forensics experts who analyzed the recording confirmed it was created using artificial intelligence. Hany Farid, chief science officer at GetReal Labs and professor of digital forensics at the University of California, Berkeley, explained that multiple AI detection models classified the voices “with high confidence as AI-generated.”

“This was not a simple voice cloning as it involved the interplay between two voices,” Farid noted. “I’ve been seeing this trend recently and it is somewhat expected as the technology gets better and the adversary becomes more proficient and sophisticated in their use of these AI tools.”

The deepfake emerged amid heightened tensions surrounding U.S. policy toward Ukraine. The United States has provided approximately $174.2 billion in military and humanitarian aid to Ukraine since Russia’s 2022 invasion, according to the Congressional Research Service.

President Donald Trump has recently made controversial statements regarding the conflict, claiming in a February 18 speech that Ukraine “started” the war and suggesting it could have avoided the conflict by ceding territory to Russia. Reports also indicate that Trump has been reaching out to Russian President Vladimir Putin in recent weeks, ostensibly to improve relations and broker a peace deal.

The fabricated audio clip gained significant traction on social media, with some users accepting it as authentic evidence of pro-Russian sentiment within the Trump family. One post claimed, “America is officially siding with our enemies. Make sure everyone sees this,” while another stated, “Statements like this are really easy to understand when you realize that Jr’s daddy is a Russian asset.”

Even FactPostNews, an official Democratic Party account, shared the fake audio on X before deleting it, according to ABC News reporting.

Verification against the actual February 25 episode of Trump Jr.’s podcast on Rumble confirmed that no such exchange occurred during the broadcast.

This incident highlights the growing sophistication of AI-generated deepfakes and their potential to spread misinformation, particularly in politically charged contexts. As AI technology continues to advance, distinguishing between authentic and fabricated content becomes increasingly challenging for social media users.

The spread of the fake audio also underscores how tensions surrounding U.S. policy toward Ukraine and Russia remain a flashpoint in American politics, with various actors potentially seeking to exploit these divisions through sophisticated technological deception.

Experts warn that AI-generated deepfakes could become more prevalent in future election cycles, requiring increased vigilance from both social media platforms and users to identify and counter such misinformation.

7 Comments

I’m glad the experts were able to quickly identify this audio as a fake. With the increasing sophistication of AI, we’ll likely see more of these types of deceptive clips in the future. Maintaining a critical eye is essential.

While it’s concerning to see these types of AI-generated fakes circulating, I’m hopeful that increased awareness and improved detection methods will help limit their impact. Maintaining a critical eye is key.

Interesting case of AI-generated audio being used to spread disinformation. It’s concerning how advanced these deepfake technologies have become and the potential for abuse. I’m glad the Trump Jr. team was quick to call this out as fake.

The Trump Jr. team’s swift denunciation of this deepfake is encouraging. It’s important that public figures take a strong stance against the spread of disinformation, even when it seems to align with their perceived interests.

This is a timely reminder of the need for robust digital forensics capabilities to combat the rise of synthetic media. Fact-checking and media literacy will be crucial in the years ahead as these technologies become more advanced.

This is a troubling example of how misinformation can spread so rapidly online, especially when it involves high-profile political figures. It’s crucial that we remain vigilant and fact-check claims, no matter the source.

Absolutely. The ease with which these deepfakes can be created is alarming. We need robust fact-checking and media literacy efforts to combat the rise of synthetic media and protect the integrity of public discourse.