
In a troubling development highlighting the growing challenge of deepfake technology, fabricated audio recordings purportedly featuring President Donald Trump making inflammatory statements about the Epstein files have been circulating on social media platforms.

The recordings, which have gained significant traction online, feature an AI-generated voice resembling Trump's that makes extreme claims: that he will refuse to release the Epstein files, and that he is willing to take drastic measures such as starting a war or letting Americans starve during a government shutdown.

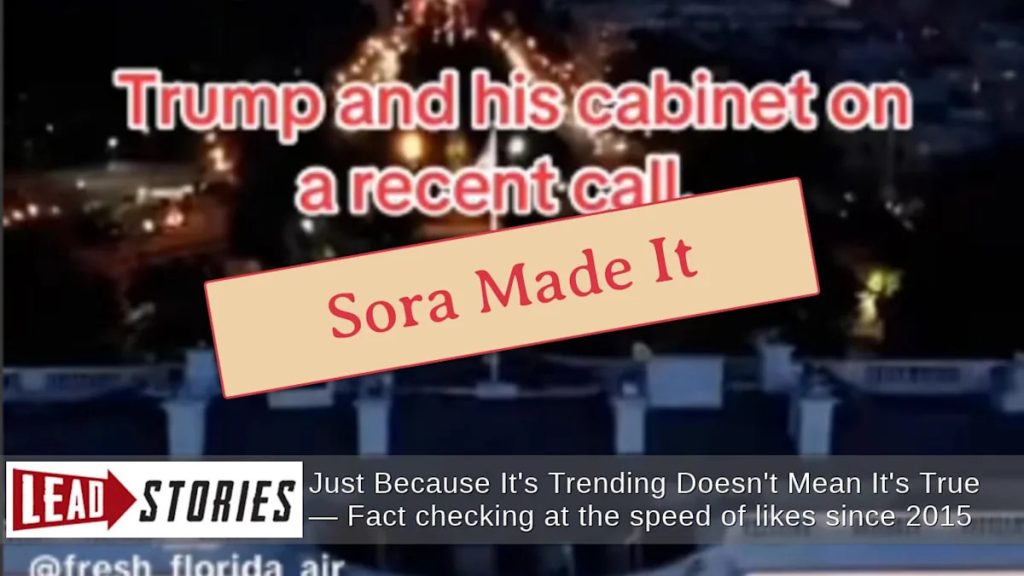

Digital forensics experts have confirmed that these recordings are fraudulent. The clearest evidence of manipulation is a "Sora" watermark visible in the upper right corner of the original posts. Sora is a text-to-video AI tool developed by OpenAI, the company behind ChatGPT, and it can generate realistic-looking video with convincing synthetic audio.

The first recording was posted to TikTok by an account called "fresh_florida_air" on November 5, 2025, during the government shutdown. The video carried the caption "Trump and his cabinet on a recent call" and contained audio of a voice mimicking Trump saying: "I don't fucking care how long this shutdown lasts. We will not lose to the Democrats. We will not release the Epstein files. I don't care if the entire country starves. SNAP benefits can go to…"

A second fabricated recording appeared on the same TikTok account on November 16, 2025, with equally inflammatory content. In this version, the AI-generated Trump voice is heard saying: "Not releasing the Epstein files. Fuck Marjorie Taylor Greene. I don't care what you do. Start a fucking war. Just don't let them get out. If I go down, I will bring all of you down with me."

Both recordings lack any information about how or where they were supposedly captured, a major red flag when assessing authenticity. No credible news sources have corroborated these alleged statements, and the recordings contain no metadata that would validate their origin.

The spread of these deepfakes comes at a time when concerns about AI-generated misinformation are at an all-time high. Technology experts have been warning about the potential for deepfakes to disrupt political processes and spread disinformation, particularly during sensitive periods like government shutdowns or election cycles.

“What makes these fakes particularly concerning is how convincing they sound to the untrained ear,” said Dr. Claire Wardle, a disinformation researcher at the Harvard Kennedy School, who was not directly involved in identifying these specific deepfakes. “Without careful scrutiny of the source and context, many viewers might believe these are authentic recordings.”

Social media platforms have struggled to keep pace with the rapid evolution of deepfake technology. While platforms like TikTok have policies against misleading content, the viral nature of sensational posts often means they reach millions of viewers before being flagged or removed.

The reference to “Epstein files” appears designed to tap into existing conspiracy theories surrounding Jeffrey Epstein, the financier who died in jail while awaiting trial on sex trafficking charges. Documents related to Epstein’s case have been the subject of intense speculation and interest across the political spectrum.

Digital literacy experts recommend that social media users exercise extreme caution when encountering inflammatory audio or video content, particularly if it appears to show public figures making statements that would be considered highly unusual or out of character.

“Always check if the content comes from verified accounts of reputable news organizations,” advises the Media Literacy Project. “Be particularly suspicious of sensational content that appears only on individual social media accounts with no institutional backing.”

As AI technology continues to advance, the line between authentic and fabricated content becomes increasingly blurred, making critical media consumption skills more essential than ever in navigating today’s information landscape.

