The year 2025 marked a pivotal shift in disinformation tactics worldwide, with AI-generated content emerging as the dominant force in spreading false narratives. Short, viral AI videos garnered millions of views across platforms. They ranged from harmless entertainment to deliberate attempts to manipulate public opinion on critical issues such as Russia’s war in Ukraine and on political figures such as French President Emmanuel Macron.

Powered by increasingly sophisticated generative AI, deepfake technology and precision-targeted social media campaigns, these disinformation efforts blurred reality at unprecedented speed and scale. From synthetic videos designed to influence voter behavior to false-flag operations fueling international tensions, the threat to democratic processes and public trust reached new heights.

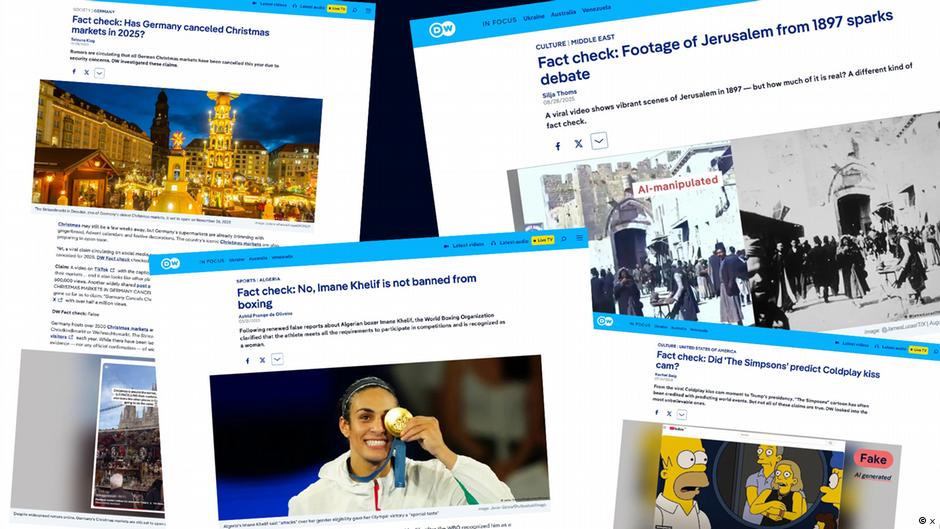

Deutsche Welle’s fact-checking team published over 180 investigations in 2025, addressing false narratives across politics, health, climate, technology, sports and history. Their work revealed several concerning trends dominating the year’s disinformation landscape.

Electoral disinformation proved particularly pervasive, with targeted campaigns aimed at influencing voters in Brazil and Moldova. Ukrainian President Volodymyr Zelenskyy remained a constant target of false claims, including allegations from U.S. President Donald Trump that he had lost popular support. These claims intensified during Zelenskyy’s White House visit in March, triggering a surge in misinformation. New York City’s newly elected mayor, Zohran Mamdani, similarly faced waves of disinformation before and after his victory.

Health misinformation ranged from bizarre but relatively harmless claims that sunscreens increase cancer risk or that certain foods affect a baby’s skin color during pregnancy, to dangerous assertions like “a healthy diet can cure breast cancer.” In Pakistan, false rumors that HPV vaccines caused infertility and disabilities sparked public hesitancy and even led to threats against healthcare workers.

Climate science continued to be distorted by deniers. When satellite data showed growth in Antarctic ice sheets during summer, this was immediately weaponized to claim climate change was reversing or entirely fabricated. DW’s fact-checkers explained the complex reality behind these simplistic interpretations.

The growing reliance on AI for information retrieval became another concerning trend. According to the Reuters Institute Generative AI and News Report 2025, weekly use of generative AI systems like ChatGPT roughly doubled over the year, as did AI-powered news consumption. While AI fact-checking tools gained popularity, a recent study found they frequently delivered inaccurate or misleading information.

Emotionally charged and controversial topics proved effective vehicles for disinformation. After Trump signed an executive order banning transgender women from women’s sports competitions, false narratives about transgender athletes proliferated online. DW analyzed relevant studies and consulted experts to provide factual context. Algerian boxer Imane Khelif, a cisgender woman, faced renewed false reports questioning her gender identity.

Historical disinformation also saw significant spread. In February, DW debunked myths about death tolls in the 1945 Allied bombing of Dresden, where some users exaggerated casualties tenfold. Earlier, Alice Weidel, Germany’s far-right Alternative for Germany (AfD) chancellor candidate, falsely claimed Adolf Hitler “was a communist, not right-wing” during a live conversation with Elon Musk—a claim historians unanimously reject.

Viral online hoaxes thrived throughout 2025. These included AI-manipulated images of Muhammad al-Muhammad, a Syrian teenager who helped stop a knife attack in Hamburg, designed to question his role in the incident. False claims circulated that Christmas markets in Germany had been canceled or that Muslims had “stormed” Christmas markets across Europe.

Collaboration proved essential in combating these threats. DW joined forces with German public broadcaster ARD’s Fact Check Network and the European Broadcasting Union (EBU) Spotlight Network to tackle election-related disinformation and expose coordinated influence operations—from Russian campaigns to misleading narratives about Gaza. One investigation with the EBU revealed that Israel was using its government advertising agency to run paid international campaigns aimed at shaping public opinion across Europe and North America.

The proliferation of deepfakes presented unprecedented challenges. As AI-generated content became easier to create and harder to detect, the line between genuine and fabricated media grew increasingly blurred. Examples ranged from seemingly innocent videos of yetis during LA protests and bunnies on trampolines to more concerning content, such as fabricated footage of European leaders waiting to meet Trump and AI-generated newscasts.

After Meta discontinued fact-checking services on Facebook and Instagram in the United States, many users turned to AI chatbots like Grok on X (formerly Twitter) to verify information. However, these tools frequently provided inaccurate assessments. In one notable example, Grok misidentified a current photograph from Gaza as an old image from Iraq, inadvertently fueling further misinformation.

As 2025 drew to a close, the year’s experience showed that, despite technological advances, effective fact-checking still requires human expertise and collaboration across organizations and borders to counter increasingly sophisticated disinformation campaigns.

14 Comments

The disinformation landscape described here is truly concerning. I’m glad to see fact-checkers are working hard, but the sheer scale of the problem is daunting. We need robust, coordinated efforts to combat this threat to truth and democracy.

Interesting to see how AI-generated content has become a major disinformation threat. The scale and speed of these fake narratives is truly concerning. I’m glad fact-checkers are working hard to debunk them, but the battle against digital deception seems far from over.

Agreed, this is a worrying trend that requires ongoing vigilance. Fact-checking is crucial, but we also need proactive solutions to limit the spread of AI-powered misinformation.

It’s alarming to see how deepfakes and targeted social media campaigns can so easily manipulate public opinion. Maintaining trust in democratic institutions will be a major challenge in the years ahead.

Definitely a complex issue that will require a multifaceted approach. Improved AI detection, media literacy, and platform accountability will all be important pieces of the puzzle.

The details provided here on the rise of AI-powered disinformation tactics in 2025 are truly eye-opening. The speed and scale at which false narratives can spread is deeply concerning. I commend the fact-checkers for their important work, but the sheer volume of this challenge must be overwhelming.

The details provided here on the disinformation tactics of 2025 are eye-opening. The use of AI-powered deepfakes and targeted social media campaigns to manipulate public opinion is truly alarming. I commend the fact-checkers for their important work, but the scale of this challenge is daunting.

This is a concerning glimpse into the disinformation landscape of the future. The sophistication of AI-generated content and its ability to rapidly spread false narratives is deeply troubling. Fact-checking efforts are crucial, but the battle against digital deception seems far from won.

Agreed. Combating this threat will require a multi-pronged approach, including improved AI detection, platform accountability, and public education. Vigilance and innovation will be key to protecting democratic discourse.

Fascinating insights into the evolving disinformation tactics of 2025. The use of AI-generated content to sow confusion and division is worrying. Fact-checking efforts are crucial, but clearly more needs to be done to stay ahead of these sophisticated methods.

Agreed. Proactive solutions, stronger platform accountability, and public education will all be key to combating this challenge. Vigilance and innovation will be required to protect democratic discourse.

Wow, the rise of AI-generated disinformation is a concerning development. I’m glad to see fact-checkers are on the case, but the sheer volume and precision of these tactics must be incredibly challenging to keep up with. Maintaining trust in information sources will be crucial in the years ahead.

Completely agree. This is a complex, multi-faceted problem that will require coordinated efforts from tech companies, governments, and the public. Disinformation resilience needs to be a top priority.

This is a sobering look at the disinformation landscape of 2025. The speed and scale of these AI-powered tactics is truly alarming. I commend the fact-checkers for their tireless work, but the battle against digital deception appears far from over.