Viral images purporting to show Venezuelan President Nicolás Maduro in the custody of U.S. forces have been confirmed to be sophisticated AI-generated fabrications.

The images began circulating widely on social media shortly after U.S. President Donald Trump announced the capture of Maduro and his wife Cilia Flores during “Operation Southern Spear” on January 3, 2026. While the administration confirmed the operation’s success, no official photographs of Maduro in custody had been released during the initial hours after the announcement.

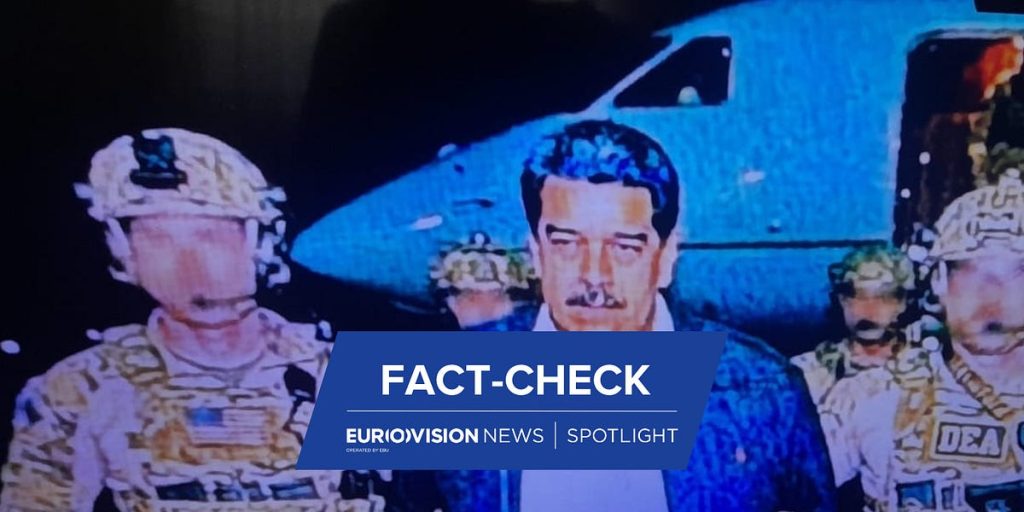

Multiple versions of the fabricated images spread quickly across platforms. The most widely shared photo purported to show Maduro being escorted by two Drug Enforcement Administration agents near a small aircraft. This image was deliberately designed to resemble a news broadcast screenshot, complete with a ticker-style text overlay and grainy quality that complicated immediate verification.

Digital forensics experts examined the viral photographs using Google’s SynthID tool, which detects embedded watermarks in AI-generated content created with Google’s software. Despite attempts to mask AI origins through filters, date stamps, and lower resolution, the analysis conclusively identified AI watermarks across significant portions of the images.

“SynthID’s detection in any part of an image is a strong indicator that Google’s AI software was used in its creation,” explained one digital forensics expert. “Unlike some other detection tools that simply scan for inconsistencies, the watermark detection system provides definitive evidence of artificial generation.”

A more sophisticated series of images later appeared in an Instagram slideshow, depicting Maduro being led around an airfield by U.S. personnel. These higher-resolution photos appeared designed to mimic the style of official government documentation. However, forensic analysis confirmed that every image in the collection contained AI watermarks, detected with “very high confidence” across large portions of each photograph.

Tellingly, the Instagram account that shared these images described its owner as a “Professional in artificial intelligence” who trains others in “how to master AI and After Effects,” a self-description that should have raised immediate suspicion.

Later in the day, President Trump shared what appears to be an authentic image via Truth Social showing Maduro in a gray Nike tracksuit, wearing eye shields and ear defenders, reportedly aboard the USS Iwo Jima. Neither the outfit nor the setting in this official image resembled those depicted in the AI-generated content that had already spread widely.

This incident highlights the growing challenge that media organizations and the public face in distinguishing authentic from artificially generated visual content during breaking news events. While detection tools like SynthID provide some protection, the increasing sophistication of AI-generated imagery threatens to complicate the public's understanding of significant world events.

The rapid spread of these convincing fakes demonstrates the evolving nature of misinformation in the digital age. News consumers should maintain heightened skepticism toward unverified images during developing stories, particularly those involving high-profile international incidents where official visual confirmation may be delayed.

As digital manipulation technology continues to advance, media literacy and verification skills become increasingly crucial for navigating a landscape where seeing is no longer necessarily believing.

12 Comments

This is a troubling development that underscores the growing threat of AI-generated disinformation. Maintaining trust in media and information sources is critical for a healthy democracy. Strengthening verification methods and public awareness should be a top priority.

Well said. The ability to create highly realistic fake media poses significant risks, and we must stay vigilant in developing effective countermeasures. Collaboration between tech companies, governments, and civil society will be crucial in this effort.

This is a troubling example of how AI can be weaponized to spread disinformation and erode public trust. The ability to generate highly realistic fake media poses significant risks, especially in the context of geopolitics and national security.

Agreed. The development of effective detection methods and public awareness campaigns must be prioritized to stay ahead of malicious actors exploiting these technologies. Vigilance and critical thinking are key.

This is a concerning development, highlighting the need for greater transparency and accountability around the use of AI, especially in sensitive contexts like national security and politics. Fact-checking and digital authentication must keep pace with the rapid evolution of these technologies.

Absolutely. As AI capabilities expand, the potential for malicious actors to leverage them for propaganda and deception grows. Vigilance and robust verification processes are essential to maintain public trust.

As AI continues to advance, the potential for abuse and manipulation of visual media is increasingly concerning. This case highlights the urgent need for robust verification processes and media literacy education to combat the spread of disinformation.

Absolutely. The stakes are high, as fake media can have far-reaching consequences for decision-making, public discourse, and even national security. Collaborative efforts between technology companies, policymakers, and the public will be essential.

Interesting case study on the growing threat of AI-generated disinformation. It’s crucial that we develop robust methods to quickly verify the authenticity of images and videos, especially those with high political and social impact.

Agreed. Detecting AI-fabricated content will only become more challenging as the technology advances. Rigorous digital forensics and public awareness are key to combating this issue.

The proliferation of AI-generated fake media is a serious threat to informed decision-making and democratic discourse. This case underscores the importance of critical thinking, media literacy, and reliance on authoritative, fact-based sources.

Well said. Combating misinformation and deepfakes requires a multi-faceted approach involving technological, educational, and policy-based solutions. Collaborative efforts across sectors will be crucial.