
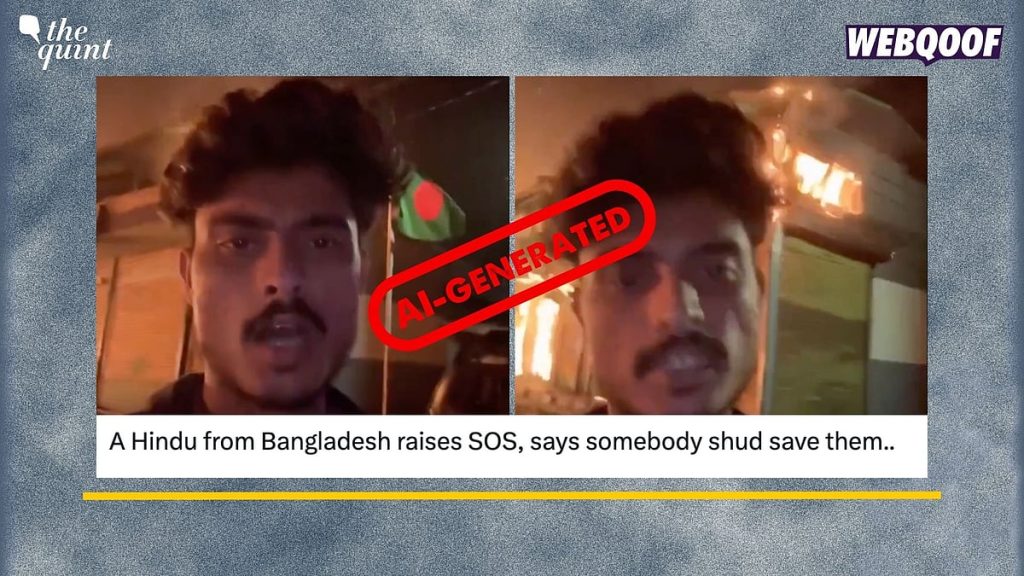

AI-Generated Video Falsely Depicts Anti-Hindu Violence in Bangladesh

Amid rising concerns about religious tensions in South Asia, fact-checkers have identified a fabricated video circulating on social media that purportedly shows violence against Hindus in Bangladesh. Their investigation found that the clip was AI-generated and apparently designed to inflame communal tensions.

The video, which has gained significant traction across multiple platforms, claims to document violent incidents targeting Hindus in Bangladesh on December 23. It features a man narrating disturbing scenes while urging viewers to “support otherwise like Dipu Chandra Dash all Hindus will be killed in Bangladesh.”

Fact-checkers found no credible news reports that matched the footage or described the scenes it shows. A reverse image search traced the video to an Instagram account with the username ‘tarobhaikuldeepp,’ which appears to have created and disseminated the misleading content.

Several telltale signs point to the clip’s artificial origin. Most notably, the narrator mispronounces the name of Dipu Chandra Das, a Hindu factory worker who was in fact killed by a mob in Bangladesh, as “Dipu Chadar.” Errors of this kind are common in AI-generated voiceovers, which often struggle with proper nouns and regional names.

The fabricated video emerges against a backdrop of genuine concerns about religious minorities in Bangladesh. The country has experienced periods of communal tension, with incidents of violence against Hindus reported in recent years. This real history makes fabricated content particularly dangerous, because viewers can find it difficult to distinguish real threats from manufactured ones.

Digital misinformation experts warn that AI-generated content represents a growing challenge for social media platforms and fact-checkers alike. As generative AI technology becomes more sophisticated and accessible, the potential for creating convincing false narratives increases substantially.

“What makes this particularly concerning is how the creator has referenced a real incident—the killing of Dipu Chandra Das—to lend credibility to the fabricated content,” explained Dr. Samantha Bradshaw, a digital disinformation researcher at American University. “This hybrid approach of mixing real events with fabricated footage makes detection more challenging for ordinary users.”

Bangladesh, a predominantly Muslim nation with approximately 13 million Hindus comprising nearly 8% of its population, has experienced sporadic communal tensions throughout its history. The relationship between Bangladesh and neighboring India, where Hindus form the majority, adds another layer of sensitivity to such misinformation.

Social media platforms have struggled to contain the spread of the video, which continues to be shared across WhatsApp groups and other messaging platforms even after being debunked. The incident highlights the limitations of content moderation systems when confronting sophisticated false information.

Authorities in both Bangladesh and India have urged citizens to verify information through official channels before sharing potentially inflammatory content. Digital literacy advocates emphasize that checking multiple credible news sources remains the most effective defense against such misinformation.

This case illustrates a troubling evolution in digital misinformation tactics, where AI technology is leveraged to create content specifically designed to exacerbate existing social tensions. The convincing nature of these fabrications poses significant challenges for societies already navigating complex religious and ethnic relationships.

As investigations continue, fact-checking organizations are working to trace the origins and motivation behind the creation of this particular piece of misinformation, which appears deliberately crafted to stoke fear among Hindu communities both within Bangladesh and abroad.

