#

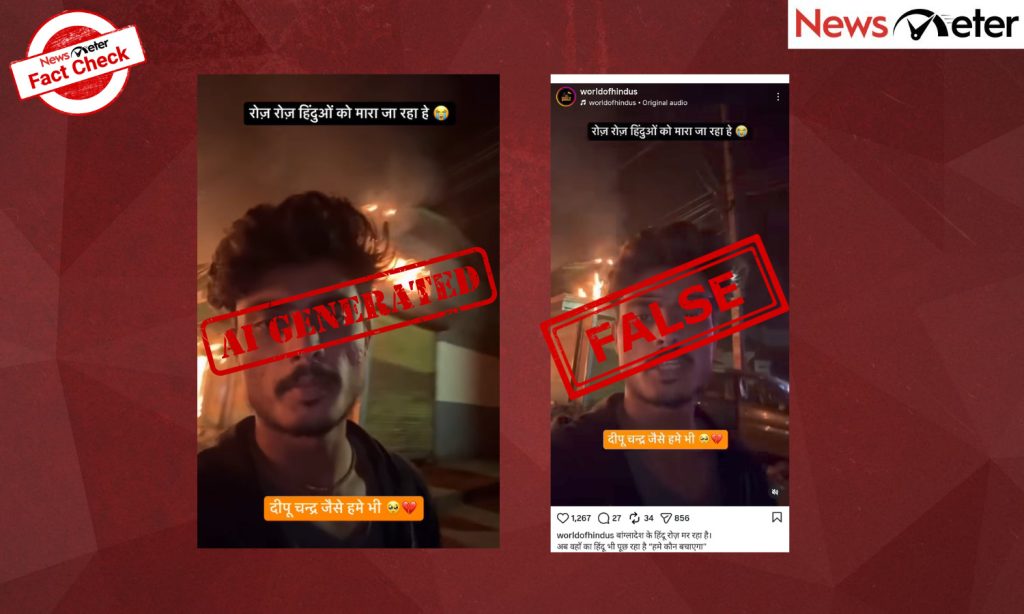

AI-Generated Video Falsely Shows Bangladeshi Hindu Man Pleading for Help During Communal Tensions

A fabricated video circulating widely across social media platforms falsely depicts a Bangladeshi Hindu man pleading for international assistance amid alleged communal violence. Digital forensic analysis has confirmed the footage is entirely AI-generated, marking another concerning instance of synthetic media being used to inflame religious tensions in South Asia.

The manipulated video shows a man walking down a street in what appears to be a vlog-style recording, with burning buildings visible in the background. Speaking in Hindi, the man claims: “This is Bangladesh. It is nighttime. They will finish us off like Deepu Chadar. You can see for yourself what is happening. Please share this video as much as possible so that someone can save us. I don’t understand how everything suddenly went so wrong.”

The video emerged in a sensitive context, just days after a real incident in which Dipu Chandra Das, a Hindu man, was lynched in Bangladesh’s Mymensingh district on December 18, 2025. Social media posts sharing the fabricated content included captions such as “Hindus in Bangladesh are dying every day. Now even the Hindus there are asking, ‘Who will save us?’”

An investigation by NewsMeter has conclusively determined the video is synthetic. Close analysis revealed multiple technical inconsistencies typical of AI-generated content, including a car with a door colored differently from its body and the subject’s unnatural lack of blinking throughout the footage.

Two independent AI detection tools confirmed the findings. Hive Moderation, a visual AI detector, scored the video at 99.6 percent probability of containing artificially generated elements. Additionally, Hiya Audio Intelligence Console determined the audio contained only a 1 percent match with markers typically found in genuine human speech, indicating almost certain AI voice synthesis.

The manufactured video appears designed to exploit existing religious tensions in Bangladesh, where the Hindu minority population has faced periodic violence. Its release coincided with heightened sensitivity following Das’s death, suggesting an attempt to capitalize on real concerns to spread misinformation.

Digital rights experts have expressed growing alarm about the sophistication of AI-generated content being used to inflame communal divisions. “This represents a dangerous evolution in disinformation tactics,” said Dr. Aisha Rahman, a digital misinformation researcher at the South Asian Digital Rights Foundation. “The technology has reached a point where casual viewers might not recognize these videos as fake without specialized analysis.”

The incident underscores the challenges facing social media platforms and fact-checkers as AI-generated content becomes increasingly realistic and easier to produce. Meta, which owns Instagram, where the video gained significant traction, has stated that it is enhancing its detection systems for synthetic media but has acknowledged that it is in a technological arms race with content creators.

Authorities in both India and Bangladesh have urged citizens to verify information through official channels before sharing potentially inflammatory content related to communal matters. Bangladesh’s Ministry of Information has established a dedicated hotline for reporting suspected AI-generated content circulating about religious communities.

As technology continues to advance, experts emphasize that critical media literacy and awareness of AI capabilities are becoming essential skills for navigating an increasingly complex information landscape.

7 Comments

While the plight of religious minorities in South Asia is a complex and important issue, we must be careful not to exacerbate tensions through the use of synthetic media. Responsible journalism and open dialogue are needed to find constructive solutions.

This AI-generated video is deeply concerning. While the situation for Hindus in Bangladesh is indeed troubling, spreading disinformation like this is not the answer. We need factual reporting and responsible dialogue to address these complex issues properly.

The lynching of Dipu Chandra Das was a tragic real-world event, and I hope the authorities in Bangladesh take appropriate action. However, using AI to create false victim narratives is not the way to raise awareness or seek justice.

I’m glad the digital forensics could verify this video as fake. It’s important to be vigilant about the rise of synthetic media and how it can be misused to sow discord. Fact-checking is crucial to counter the spread of false narratives.

It’s disheartening to see how technology can be misused to spread false narratives, even about serious issues like religious violence. I commend the Disinformation Commission for their work in exposing this particular instance of synthetic media abuse.

This case highlights the growing threat of AI-generated content being used to manipulate public opinion and inflame social divisions. I hope the authorities can take strong action against those responsible for creating and spreading this kind of disinformation.

Spreading misinformation, even with good intentions, can have serious consequences. I appreciate the Disinformation Commission’s efforts to call out these kinds of fabricated videos and maintain factual reporting on sensitive topics.