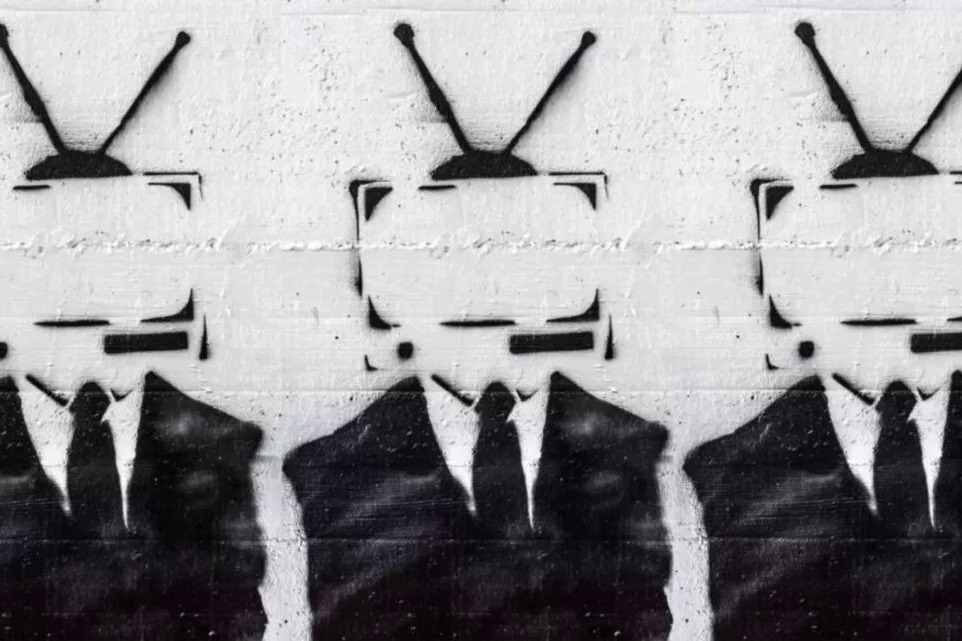

Russian Cyber Operations Intensify with AI-Generated Fakes Targeting Europe

Europe is witnessing an alarming surge in Russian cyber operations and AI-generated fake content as part of Moscow’s increasingly sophisticated information warfare campaign. In 2025, Russian operatives are extensively deploying neural networks, deepfakes, and generative videos to manipulate public opinion, discredit Ukrainian and European politicians, and distribute fabricated news designed to mimic reputable media outlets.

Ukraine’s Center for Countering Disinformation has identified 191 Russian information operations since the beginning of 2025, generating at least 84.5 million views. These operations extend beyond traditional deepfakes—where faces and voices are substituted—to include partial fakes that overlay synthetic audio on real footage, add fictional scenes to authentic videos, or generate entirely synthetic content. Particularly concerning are fake videos presented as legitimate content from established media organizations.

At the center of the Kremlin’s disinformation arsenal is Storm-1516, an influence operation that France officially condemned in May 2025. This propaganda network distributes AI-generated fakes and rumors through fake websites and social media accounts. According to VIGINUM, the French agency that monitors foreign digital interference, Russia has used Storm-1516 since at least 2023, deploying it in 77 operations targeting France, Ukraine, and other Western nations. The network has been linked to Russian individuals and organizations, including the Main Intelligence Directorate (GRU) and the think tank Geopolitical Expertise.

John Mark Dougan, an American citizen who relocated to Russia in 2016, plays a pivotal role in the network by contributing to Storm-1516 content through various websites such as CopyCop. According to VIGINUM, coordination and financing are overseen by Yuriy Khoroshenskiy, believed to be a GRU officer from Unit 29155 and implicated in a 2020 cyberattack on Estonia. The content produced by Storm-1516 is subsequently amplified through Russian diplomatic accounts on X (formerly Twitter) and state media outlets including Sputnik, RIA Novosti, TASS, RT, and Rossiyskaya Gazeta.

Beyond Storm-1516, Russia operates a broader fake-content production machine. Social media platforms regularly feature AI-generated videos in which Russian operators impersonate European politicians. German Chancellor Friedrich Merz has been a primary target, with researchers identifying more than 700 fake accounts spreading fabricated statements created with AI technology. Some videos even show Merz falsely endorsing dubious investment platforms in scam schemes. Similar fakes have targeted Kaja Kallas, the former Estonian prime minister who now serves as the EU’s foreign policy chief.

One notable manipulation involved an AI-generated video showing the phrase “Do you want to ban the Russian language?” allegedly projected onto Narva Castle’s wall. Journalists quickly identified this as an entirely AI-generated creation. Other targeted campaigns have focused on Ukrainian refugees, featuring altered voices and manipulated footage from international media, as well as AI-generated clips of “mobilized Ukrainians in tears” created using the Sora model. Ukraine’s General Staff has debunked these materials by highlighting unnatural speech patterns, clothing distortions, and other digital artifacts.

Experts emphasize that AI technology has made disinformation both more widespread and more convincing. Many fake videos circulate through pseudo-media websites, making detection increasingly difficult. Even professional analysts face challenges, as direct links to original content often disappear when fakes are quickly deleted or reposted elsewhere.

European specialists are calling for greater responsibility from major technology platforms. VIGINUM reports that while social networks have been notified about interference, their responses remain slow. The EU has implemented the Digital Services Act and Digital Markets Act, but enforcement remains weak, according to legal experts.

A significant challenge is the disparity in resources between European government structures and Russia’s propaganda apparatus. While Russian propaganda and censorship bodies employ thousands, the EU relies largely on independent analytics for similar functions. Experts suggest that granting researchers access to platform data would facilitate proving Big Tech’s role in amplifying Kremlin narratives.

In this environment of widespread AI-driven disinformation, citizens must take responsibility for verifying information. Marju Himma, associate professor at the University of Tartu, notes that distinguishing deepfakes from authentic footage is increasingly challenging, making critical thinking and information literacy essential skills. Several verification tools—including InVID/WeVerify, Forensically, and DeepFake-o-Meter—can help detect manipulation, editing traces, and content-spread patterns.

Researcher Signe Ivask points out that deepfakes often contain telltale signs, such as unnatural eye movements, mismatched speech and facial expressions, strange lighting artifacts, and blurred areas. The ultimate goal of Russian disinformation is to provoke strong emotional reactions and impulsive sharing—a tactic employed ahead of Estonia’s local elections.

As Europe confronts this growing threat, the consensus among experts is clear: protecting against AI-driven fakes increasingly depends on citizens themselves. The more attentive and critically minded people become, the better their chances of resisting Russia’s expanding manipulation campaigns.

9 Comments

While the technical capabilities behind these deepfakes are impressive, the intent to deceive and sow discord is deeply concerning. Rigorous fact-checking will be paramount going forward.

I hope European leaders take decisive action to counter this threat. Investing in detection tools and media literacy campaigns could help inoculate the public against these synthetic fakes.

I wonder what the long-term impact of this disinformation blitz will be on European politics and society. It’s crucial that citizens are educated on spotting these synthetic fakes.

You raise a good point. Media literacy and critical thinking skills will be essential for the public to navigate this increasingly complex information landscape.

The Kremlin’s use of AI and deepfakes to undermine democratic institutions is a worrying development. Strengthening cybersecurity and international cooperation will be key to fighting back.

This is a troubling escalation in Russia’s information warfare tactics. The proliferation of AI-generated fakes could have far-reaching consequences if left unchecked.

This is very concerning. The scale and sophistication of these Russian disinformation campaigns are alarming. We must remain vigilant and fact-check everything we see online.

Deepfakes and AI-generated fakes pose a serious threat to information integrity. Governments and tech companies need to invest heavily in detection and mitigation technologies.

Absolutely. Proactive measures are critical to counter the spread of these fabricated materials and maintain public trust.