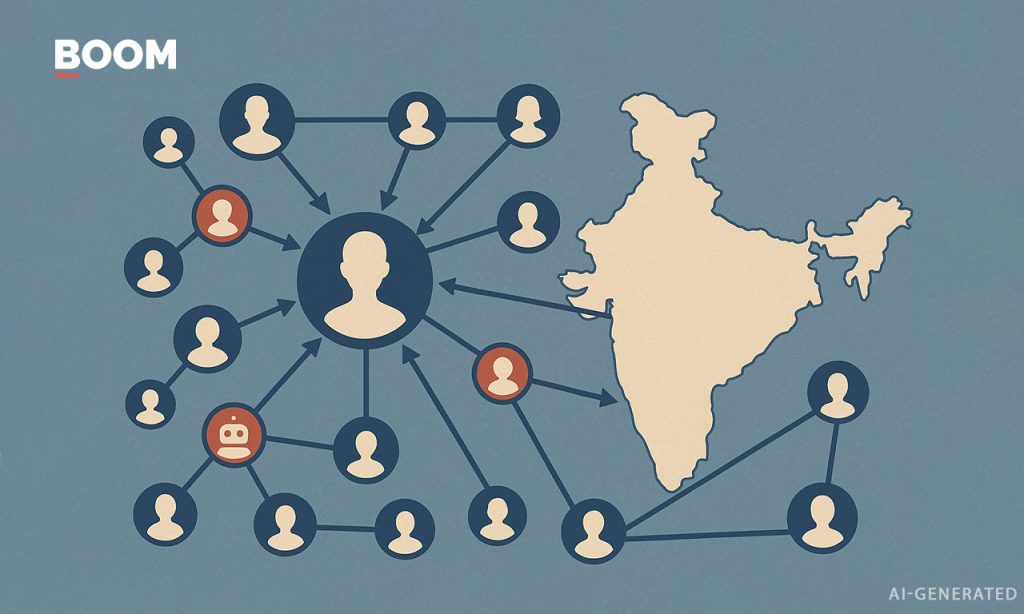

Indian Air Force Targeted by AI-Driven Disinformation Campaign

An elaborate AI-driven disinformation campaign has been targeting India's military and government institutions since the Pahalgam terror attack and Operation Sindoor earlier this year. The effort came into sharp focus in November, when a fake video circulated on X purporting to show Air Chief Marshal AP Singh criticizing the government over the induction of the indigenous Tejas fighter jet after a fatal Tejas crash at the Dubai Air Show.

The video, which featured an AI-cloned voice of the Air Chief Marshal, was quickly identified as fraudulent. It is just one example from a coordinated campaign that has produced more than 30 pieces of synthetic media flagged by fact-checkers in recent months.

Analysis of the accounts involved reveals a network operating primarily from Pakistan, according to location data available through X’s “About this account” feature. Key disseminators include handles like @InsiderWB, @Baba_Thoka, @Hawkss_eye, and @abubakarqassam, which have repeatedly appeared in debunking reports by Indian fact-checkers.

“This bears all the hallmarks of a coordinated influence operation from a troll farm,” said an expert familiar with the situation. “The synchronization, rapid amplification patterns, and creation of fake Indian-appearing personas are textbook tactics, but what makes this unique is the widespread deployment of generative AI.”

The campaign has strategically targeted moments of national crisis and has tried to exploit internal divisions along religious and caste lines, particularly in sensitive regions such as Manipur and Ladakh. Indian authorities have responded by issuing legal requests asking X to withhold these accounts in India and by using the government's PIB Fact-Check handle to debunk false claims.

India’s top defense establishment has been a primary target of the operation. Disinformation narratives include fabricated admissions of tactical failures, false statements about territorial concessions to China, inflated casualty figures, and manufactured quotes suggesting the politicization or communalization of the armed forces.

Pamposh Raina, who heads the Deepfakes Analysis Unit of the Trusted Information Alliance in India, noted that the increased public visibility of military officials has inadvertently created more opportunities for manipulation. “Military personnel are giving more interviews and making more public remarks post Operation Sindoor, making it easier for bad actors to exploit this content,” Raina explained.

The sophistication of these fakes varies, with some containing telltale signs like distorted insignia and name tags on military uniforms, or mispronunciations of Indian names. In one notable instance, Bihar Chief Minister Nitish Kumar’s name was consistently pronounced as “Neetish Kumar” across multiple deepfakes.

The operation has also produced fake news articles and fabricated letters, and has impersonated prominent journalists, including Ravish Kumar, Palki Sharma of Firstpost, and NDTV's Shiv Aroor, to push false narratives.

Perhaps most alarming was a series of deepfake videos claiming that Ladakhi climate activist Sonam Wangchuk had died in police custody. Wangchuk's wife, Gitanjali Angmo, quickly refuted the claim, confirming that she had visited her husband in prison in Rajasthan on the day the false videos circulated.

The surge comes against a backdrop of reduced content moderation at X since Elon Musk's acquisition of the platform. With 22.17 million Indian users as of October 2025, making India X's fourth-largest market, the platform offers fertile ground for influence operations. The dismantling of X's trust and safety teams and restricted access to monitoring tools have further complicated efforts to analyze and counter such campaigns.

While the precise actors behind this operation remain unidentified, the patterns suggest a coordinated effort aimed at undermining India’s institutions and exacerbating internal divisions through increasingly sophisticated technological means.
