
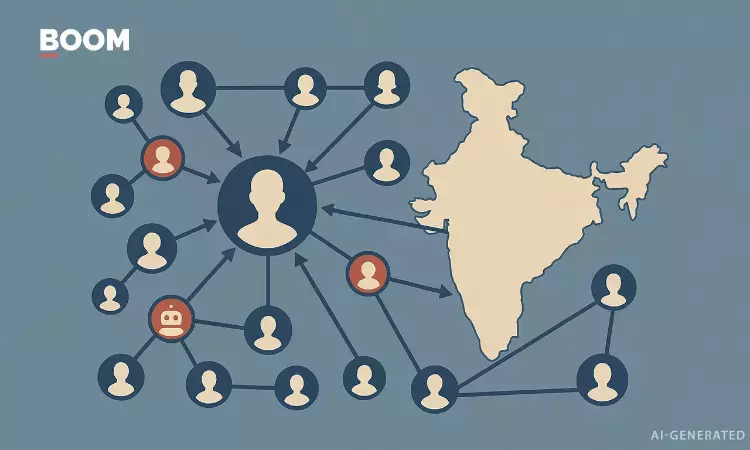

Recent days have seen the rapid spread of manipulated videos across Indian social media, with one particularly concerning clip falsely attributing inflammatory statements to a high-ranking military official.

The doctored video purportedly shows General Upendra Dwivedi, Chief of Army Staff of the Indian Army, announcing plans to reduce the number of non-Hindu soldiers by 50% by 2028. Security experts have confirmed that the video is entirely fabricated, and the incident has raised alarms about the growing sophistication of AI-generated misinformation targeting India’s armed forces.

Jency Raina, who heads the specialized Deepfakes Analysis Unit (DAU) of the Trusted Information Alliance in India, identified several telltale signs of artificial intelligence manipulation in the video. “Upon careful examination, we detected voice cloning technology being used to mimic General Dwivedi’s speech patterns, alongside digitally distorted military insignia and altered name tags,” Raina explained in a statement released Tuesday.

The emergence of this deepfake comes at a particularly sensitive time for India’s military establishment, which has long prided itself on maintaining religious diversity within its ranks. The Indian Armed Forces include personnel of various faiths, among them Hinduism, Islam, Sikhism, Christianity, and other religions, reflecting the country’s secular constitutional values.

Defense Ministry officials have swiftly condemned the video as a malicious attempt to sow discord within the military and wider society. In an official statement, the ministry called the deepfake “a deliberate effort to undermine national security by creating religious divisions within our armed forces.”

This incident highlights the growing national security threat posed by deepfake technology in India. The country has seen a 300% increase in AI-generated misleading content over the past year, according to a recent report by the Internet and Mobile Association of India.

The Trusted Information Alliance, a consortium of technology companies, media organizations, and academic institutions established in 2022, has been at the forefront of combating digital misinformation in South Asia. Its Deepfakes Analysis Unit employs advanced forensic tools to identify and flag manipulated media before it gains widespread traction.

Cybersecurity experts warn that military and government officials are increasingly becoming prime targets for deepfake creators. “High-ranking military personnel make particularly appealing targets because their statements carry significant weight and can influence public opinion on sensitive matters like national security and social cohesion,” said Dr. Sameer Patil, a defense analyst with the Observer Research Foundation in Mumbai.

The Indian government has recently proposed amendments to the Information Technology Rules that would specifically address deepfakes and other forms of synthetic media. The proposed regulations would require platforms to label AI-generated content clearly and impose penalties for creating or sharing harmful deepfakes.

Social media companies including Meta and X (formerly Twitter) have removed the falsified video from their platforms, though security analysts note that the clip continues to circulate on encrypted messaging services like WhatsApp and Telegram.

General Dwivedi himself addressed the situation in a brief video statement released through official Army channels, emphasizing the military’s commitment to secularism. “The Indian Army has always drawn its strength from diversity. We remain steadfast in our constitutional values and reject any attempt to create divisions among our ranks,” he stated.

The incident serves as a stark reminder of the evolving challenges facing information integrity in the digital age, particularly as AI technology becomes more accessible and sophisticated. Experts stress that combating such misinformation requires a multi-pronged approach involving technological solutions, media literacy, and regulatory frameworks.

As India heads toward general elections in 2024, authorities have expressed concerns that deepfakes could increasingly be deployed as tools for political manipulation, making the work of fact-checking organizations and specialized units like the DAU more crucial than ever.


8 Comments

This is a sobering reminder of the potential for AI-powered misinformation to cause real harm, especially when it targets sensitive national security issues. Staying vigilant and quickly debunking these kinds of deepfakes will be essential going forward.

The Indian military’s religious diversity is an important part of its identity and strength. Attempts to undermine that through the use of deepfake technology are particularly troubling. Rigorous analysis and public awareness campaigns will be critical to counter these threats.

Absolutely. Maintaining the military’s inclusive culture and public trust is crucial, especially in the face of such sophisticated disinformation efforts. Diligent fact-checking and transparency will be key to preserving these core values.

It’s concerning to see the military being targeted by this type of AI-generated disinformation. Given the sensitive nature of national security, these kinds of manipulated videos could have serious ramifications if they’re not quickly debunked and discredited.

The use of voice cloning and altered military insignia to create this deepfake is quite sophisticated. It’s a troubling sign of how advanced these AI-based disinformation techniques have become. Maintaining public trust in institutions like the military will be an ongoing challenge.

You’re right, this is an increasingly complex issue. As AI capabilities advance, the ability to generate highly convincing fake content will only grow. Vigilance and robust fact-checking processes will be essential to combat the spread of this kind of misinformation.

This is a concerning development. AI-generated disinformation can be incredibly difficult to detect and can have serious consequences, especially when it targets sensitive issues like the military. Fact-checking and media literacy will be crucial to combat the spread of these manipulated videos.

I agree, the growing sophistication of deepfakes is alarming. It’s critical that security experts continue to develop new techniques to identify and counter these AI-generated fakes before they can do real damage.