In Barnsley, local officials are battling a growing tide of misinformation on social media, highlighting the challenges that local governments face in a digital age where false claims can spread rapidly and influence public opinion.

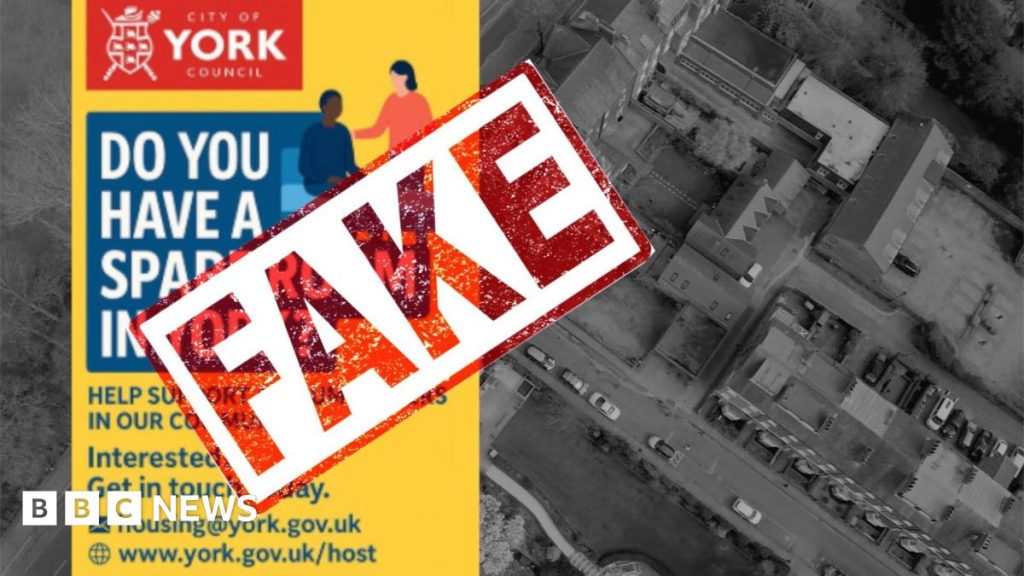

Council leader Sir Steve Houghton expressed concern after being shown a fake AI-generated image of York. “It looks real, I wouldn’t know,” he admitted, underscoring how convincing artificial intelligence-created content has become, even to experienced public officials.

The Barnsley Metropolitan Borough Council has been confronting content creators who publish false information about the authority. In some disturbing cases, when asked to remove inaccurate content, creators have refused. “We’ve even had some people with content saying we’re not going to change this because we’re making money out of it. Now that is unbelievable,” Houghton revealed.

This problem represents a growing challenge for local authorities across the UK, which often lack the resources and technical expertise to combat sophisticated misinformation campaigns. While Barnsley hasn’t specifically encountered AI-generated advertisements targeting the council, it has had to develop strategies to address false claims circulating online.

The council has been forced to use its own communication channels to counter misinformation. “We have to use our channels to try and counter a lot of that activity,” Houghton explained, suggesting the authority is having to divert resources toward fact-checking and correcting false narratives.

Of particular concern is the potential impact on community relations. “It is a worry, particularly around social cohesion. We do at the moment get a lot of misinformation about asylum seekers and disinformation about asylum seekers,” Houghton said. The spread of false information about vulnerable groups can exacerbate tensions and potentially lead to real-world consequences in communities already facing social and economic challenges.

The situation in Barnsley reflects a broader national issue as councils across Britain grapple with the effects of online misinformation. The Local Government Association has previously warned that local authorities are increasingly finding themselves targets of coordinated misinformation campaigns that can undermine public trust and hamper service delivery.

“We’ve got to correct that because people need to be safe,” Houghton emphasized, pointing to the council’s responsibility to maintain accurate information that affects public safety and community wellbeing.

The challenge is compounded by the tendency of some residents to believe information simply because it appears online. “People go on social media and go ‘oh, look at this, it must be right or people wouldn’t have put it on’. Well I’m sorry, people do put things on. Sometimes by mistake and they’re wrong but sometimes deliberately and we’ve got to monitor and correct that,” Houghton explained.

The situation in Barnsley highlights the evolving nature of governance in the digital age, where managing online information has become as important as traditional council responsibilities. Local authorities now find themselves in the position of fact-checkers, often with limited resources to tackle sophisticated misinformation.

Digital literacy experts point out that the problem is likely to worsen as AI tools become more accessible, making the creation of convincing fake content easier and more widespread. For resource-constrained local councils, this presents a significant challenge that requires new approaches to communications and public engagement.

As technology continues to evolve, the experiences of councils like Barnsley suggest that local governments may need greater support, training, and resources to effectively combat digital misinformation and maintain public trust in an increasingly complex information landscape.

19 Comments

The refusal of some content creators to remove false information due to financial gain is deeply concerning. Authorities must find ways to hold these individuals accountable and deter such behavior.

Agreed. Prioritizing profits over the integrity of public discourse is unacceptable and undermines the democratic process.

This is a serious problem that local governments must address. AI-generated misinformation can be incredibly convincing, even to experienced officials. Councils need more resources and technical expertise to combat these sophisticated campaigns.

Absolutely. Refusing to remove false content due to monetary gain is unacceptable. Authorities must find ways to hold these creators accountable.

This is a worrying trend that extends beyond Barnsley. Local governments across the UK must work together to develop coordinated, cross-jurisdictional responses to AI-driven disinformation campaigns.

This is a cautionary tale about the potential dangers of AI technology. While it can be a powerful tool, robust safeguards and oversight are needed to prevent it from being weaponized for malicious purposes.

The fact that even experienced officials can be fooled by AI-generated content is deeply troubling. Local authorities require significantly more resources and expertise to stay ahead of these rapidly evolving threats.

This issue highlights the need for greater digital literacy and critical thinking skills among the public. Residents must be empowered to spot and resist AI-generated disinformation campaigns.

Absolutely. Public awareness and education will be key to combating the spread of this type of misinformation.

Kudos to the Barnsley Council for confronting this challenge head-on. Their experience highlights the need for greater support and guidance for local authorities in navigating the complex landscape of digital misinformation.

Absolutely. Sharing best practices and lessons learned will be vital as other councils work to address similar challenges.

Artificial intelligence is a double-edged sword – it can be a powerful tool, but also a dangerous weapon in the wrong hands. Councils must find ways to harness AI responsibly while mitigating the risks of misinformation.

The Barnsley Council’s experience highlights the urgent need for greater investment in digital literacy and critical thinking skills among the public. Empowering citizens to navigate the online landscape is crucial.

Absolutely. Equipping the public with the tools to identify and resist AI-driven misinformation will be key to strengthening democratic resilience.

The rise of AI-powered disinformation is deeply concerning for democratic processes. Local governments need to develop robust strategies to quickly identify and counter these threats before they can influence public opinion.

Agreed. This represents a growing challenge that will only intensify as AI technology becomes more advanced and accessible. Proactive measures are crucial.

The proliferation of AI-powered disinformation is a grave threat to democratic processes at the local level. Urgent action is needed to safeguard the integrity of information and protect public discourse.

This is a complex challenge that will require a multifaceted approach. Councils must work closely with tech companies, fact-checkers, and the wider community to develop effective strategies.

Collaborative efforts will be crucial. No single entity can tackle this issue alone.