In the early hours of January 3, 2026, as U.S. special forces arrested Venezuelan President Nicolás Maduro, social media platforms were flooded with misinformation that experts say far outpaced previous crises in both volume and sophistication.

The arrest, officially confirmed by U.S. President Donald Trump via Truth Social and subsequently by Attorney General Pam Bondi, triggered what analysts are calling a “digital shockwave” across major platforms. Rather than factual reporting, users on TikTok, Instagram, and X encountered an overwhelming barrage of AI-generated fakes and repurposed archive footage.

Researchers at the Venezuelan Fake News Observatory, who previously documented 421 major disinformation cases during Venezuela’s contentious 2024-25 election period, estimate that misinformation volume on the first day of the U.S. operation alone exceeded typical monthly averages by 10 to 20 times.

“This represents a catastrophic failure of tech platforms’ moderation systems,” said Dr. Elena Márquez, director of the Observatory. “We’re witnessing the consequences of systematic cuts to content verification infrastructure.”

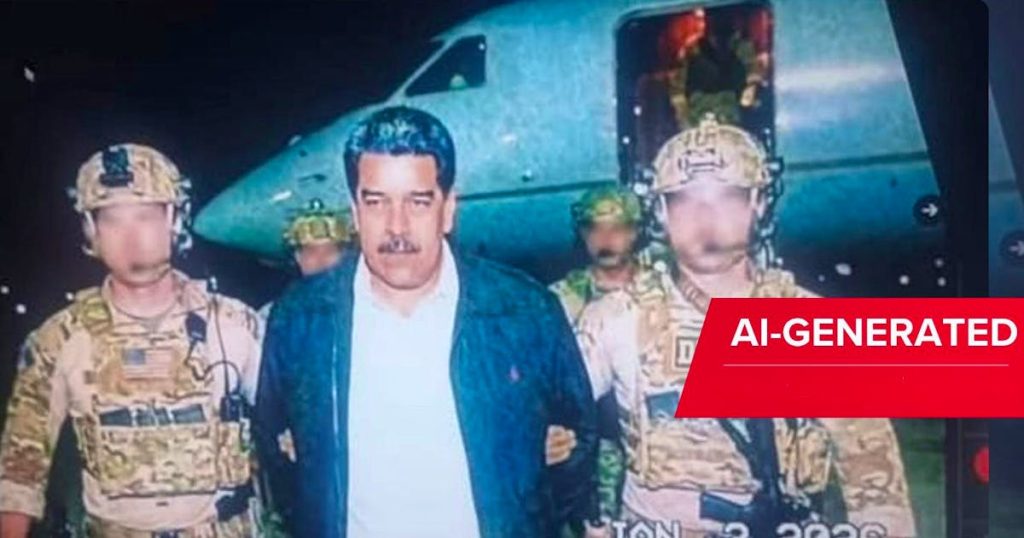

The sophistication of fake content has reached alarming new heights. Minutes after news of the arrest broke, a convincing image showing Maduro flanked by DEA agents went viral, amassing millions of views before technical analysis revealed it to be AI-generated. Google DeepMind’s SynthID technology eventually detected an invisible watermark in the image, confirming its artificial origin.

Industry experts point to recent policy changes as contributing factors to the crisis. In early 2025, Meta officially discontinued its external fact-checking program, while TikTok implemented substantial cuts to its “Trust & Safety” teams. These decisions effectively dismantled critical safeguards against unmarked AI content at a time when generative technology was rapidly advancing.

Inconsistency among AI tools further complicated the situation. While AI image generators produced convincing arrest scenes, some AI systems like ChatGPT initially refused to acknowledge the operation at all, even after official U.S. government confirmation had been released.

The economic incentives behind the spread of misinformation remain a key concern. Platform algorithms that prioritize engagement continue to amplify sensational content regardless of accuracy. A video falsely presented as showing attacks on Caracas, which was actually footage from November 2025, garnered over two million views on X before being flagged. Similarly, AI-animated clips by creator Ruben Dario drew hundreds of thousands of views on TikTok within hours.

Even political figures participated in spreading misinformation. Laura Loomer, a prominent political commentator, shared images purportedly showing Venezuelans celebrating Maduro’s arrest that were actually from 2024 events.

The legal response has moved swiftly, with U.S. prosecutors in New York already preparing narco-terrorism and conspiracy indictments against Maduro. Meanwhile, traditional media outlets struggle to compete with the torrent of automated content flooding social platforms.

Media ethics professor Carlos Jiménez from Universidad Central de Venezuela called the situation “a fundamental threat to social stability,” noting: “When citizens can no longer distinguish between rendered fiction and military reality, we’re facing not just an information crisis but a crisis of democratic functioning.”

The Venezuela situation highlights the growing gap between the rapid proliferation of synthetic media and the diminishing capacity for verification. Technical experts recommend that users scrutinize content carefully, looking for anatomical inconsistencies in AI-generated images (particularly hands, teeth, and eyes), checking for logical inconsistencies in lighting and shadows, and verifying sources through multiple established news agencies.

As the U.S. military operation continues to unfold, the information landscape surrounding it serves as a stark warning about the future of crisis reporting in an era of advanced AI capabilities and reduced platform accountability.
