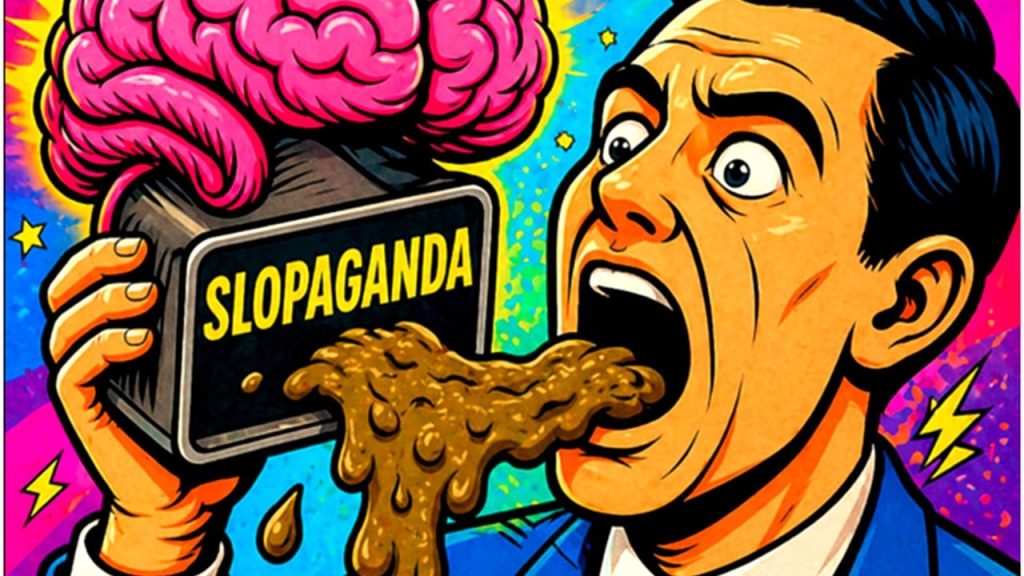

The rise of Iran’s “slopaganda” marks a seismic shift in how nations wage information warfare, with AI-generated content threatening to overwhelm global discourse and redefine what audiences accept as truth.

What began as Iranian meme campaigns targeting Donald Trump has evolved into a sophisticated strategy that cybersecurity experts now call “slopaganda” – the mass production of low-quality content designed not to convince but to overwhelm audiences through sheer volume.

This approach represents a fundamental departure from traditional propaganda. Instead of crafting compelling singular narratives, nations now flood digital platforms with countless variations of the same message, saturating information channels until the repetition creates a false impression of consensus.

“The impact of this new slopaganda mechanism is staggering,” notes one analysis, which predicts that by 2025, automated or bot-assisted activity will constitute between 50 and 60 percent of all internet traffic.

Iran’s digital strategy escalated dramatically following the 2020 killing of General Qasem Soleimani. Iranian-connected accounts ramped up production to thousands of posts daily and, as generative AI tools matured, began producing content at virtually no cost. Output that once required significant resources and manpower can now come from algorithms capable of generating hundreds of images, memes, and videos every minute.

This phenomenon extends well beyond Iran. During the Russia-Ukraine conflict, researchers documented millions of posts per week across platforms including Telegram, X (formerly Twitter), and TikTok as part of competing narrative campaigns. While Ukraine, under President Volodymyr Zelenskyy, focused on authenticity and relatability, pro-Russian efforts emphasized volume and repetition to dominate attention spans.

China has similarly deployed high-volume messaging strategies. During the COVID-19 pandemic, analysts identified coordinated posting campaigns by state-linked accounts that flooded platforms with polished but repetitive content designed to reinforce specific narratives through frequency rather than substance.

Democratic systems have not been immune. Investigations into the 2016 U.S. presidential election revealed how Russia’s Internet Research Agency generated tens of thousands of posts monthly, reaching millions of Americans through algorithmic amplification. The technique proved remarkably effective at sowing division and confusion around election integrity.

The global spread of these tactics is concerning. According to Freedom House, organized digital manipulation campaigns now operate in more than 40 countries, suggesting these approaches are becoming standard practice in international relations and political conflict.

What makes slopaganda particularly effective is its exploitation of platform algorithms. Social media platforms typically reward engagement regardless of content quality or veracity. When users encounter multiple similar narratives in rapid succession, the repetition creates an artificial perception of consensus, even when the underlying claims lack a factual basis.

“The risk of slopaganda is cumulative,” warns one digital security expert. As AI-generated content becomes more sophisticated and prevalent, distinguishing between satire, misinformation, and truth grows harder. The technique builds belief through repetition rather than substance, establishing a familiarity that gradually turns into acceptance.

This represents a new form of soft power that operates not through persuasion or even clear deception, but through saturation. In an attention economy where users face constant information overload, the side that dominates the digital space effectively defines what audiences perceive as truth.

As generative AI technology advances and becomes more accessible, the volume and sophistication of slopaganda will likely increase. This presents significant challenges for media literacy, platform governance, and international security. Without effective countermeasures, the digital landscape risks becoming less a space for genuine discourse and more a battlefield where truth is determined not by facts but by algorithmic dominance.

The evolution from Tehran’s early meme campaigns to today’s sophisticated information operations signals a troubling future where digital floods may overwhelm our collective ability to distinguish fact from fiction.

13 Comments

The concept of ‘slopaganda’ is a chilling development in the evolution of propaganda techniques. The strategic use of AI to overwhelm audiences with low-quality content is a worrying trend that threatens to undermine our ability to discern truth from fiction. We must remain vigilant and work to counter these efforts.

This article highlights an alarming trend in modern propaganda tactics. The use of AI-generated content to flood information channels and create false impressions of consensus is truly concerning. We must be vigilant in identifying and combating such insidious attempts to manipulate public discourse.

I agree. The scale and sophistication of these propaganda efforts are deeply troubling. We need robust strategies to counter the spread of disinformation and maintain the integrity of our information ecosystems.

This article raises important questions about the future of online discourse and the role of AI in shaping public narratives. While the potential benefits of AI are well-documented, the malicious use of this technology for propaganda purposes is deeply concerning. We must remain vigilant and proactive in addressing these challenges.

The concept of ‘slopaganda’ is a fascinating and unsettling development. It underscores the need for improved media literacy and critical thinking skills among the public. As consumers of information, we must be more discerning and question the sources and motivations behind the content we encounter.

Absolutely. Equipping people with the tools to distinguish truth from falsehood is crucial in an era where the sheer volume of content can overwhelm our ability to fact-check and verify. Strengthening digital literacy should be a top priority.

Fascinating insight into the changing landscape of modern propaganda. The shift towards high-volume, low-quality content production is a concerning development that undermines our ability to discern truth from fiction. We must redouble our efforts to cultivate critical thinking and media literacy skills in our communities.

This article provides a sobering look at the growing threat of AI-driven propaganda. The rise of ‘slopaganda’ techniques, characterized by the mass production of low-quality content, represents a fundamental shift in how nations wage information warfare. As citizens, we must commit to developing critical thinking skills and strengthening our media literacy to navigate this increasingly complex landscape.

Absolutely. The scale and sophistication of these propaganda efforts are deeply concerning. Equipping the public with the tools to identify and resist such manipulation is crucial. We must work collectively to uphold the integrity of our information ecosystem and safeguard democratic discourse.

The evolution of ‘slopaganda’ techniques highlights the need for greater transparency and accountability in the digital sphere. As citizens, we have a responsibility to be critical consumers of information and to demand higher standards from both tech companies and government entities. The integrity of our democratic institutions depends on it.

Well said. Increased transparency and accountability are essential in combating the spread of disinformation. This is a complex challenge that requires a multifaceted approach, involving policymakers, tech leaders, and the public working together.

This article serves as a stark reminder of the ongoing battle against disinformation and the need for robust fact-checking and verification processes. As the use of AI in propaganda escalates, it will be increasingly important for individuals and institutions to remain vigilant and to call out attempts to manipulate public discourse.

I agree. The proliferation of AI-generated ‘slopaganda’ highlights the importance of developing effective countermeasures and strengthening our collective resilience against these pernicious tactics. It’s a challenge we must address head-on to protect the integrity of our information ecosystem.