Russia Weaponizes AI to Wage Sophisticated Disinformation Campaign Against Ukraine

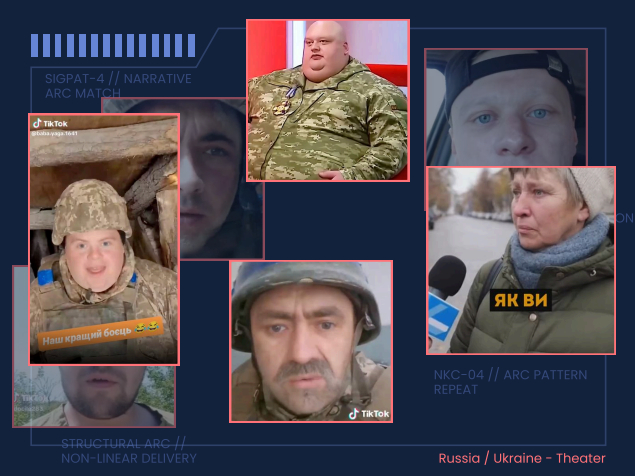

Russia has significantly escalated its information warfare tactics against Ukraine, deploying over 1,000 AI-generated videos and deepfakes as part of what Ukrainian officials describe as a coordinated psychological warfare campaign. According to research cited by Ukraine’s Center for Countering Disinformation (CCD), these synthetic media operations form part of a structured “narrative kill chain” – a modular disinformation system designed to target specific audiences with tailored messaging.

Security firm Sensity AI, which conducted the research, has documented how these operations represent a significant evolution in Russia’s cognitive warfare capabilities. The sophisticated campaign aims not only to persuade but to create an information environment so saturated with synthetic content that distinguishing between real and fabricated evidence becomes nearly impossible.

“What we’re seeing is the industrialization of AI-powered disinformation,” said a representative of Ukraine’s Center for Countering Disinformation. “This isn’t random content – it’s strategically created and distributed to undermine Ukraine from multiple angles simultaneously.”

The Russian operation segments its messaging based on intended audiences. For Ukrainian military personnel, videos promote narratives about a “failing war effort” and “collapsing front lines” while attempting to erode trust in military leadership. This psychological targeting seeks to demoralize soldiers and create division within Ukraine’s armed forces at a critical juncture in the conflict.

For Ukrainian civilians, the content focuses on draining morale, normalizing conditions under Russian occupation, and weakening public confidence in state institutions and the military. These narratives aim to fracture civilian support for the war effort and create internal pressure for concessions to Russia.

Western audiences receive yet another category of tailored content. These videos focus on discrediting Ukraine as a whole, portraying Ukrainian refugees in a negative light, and constructing arguments against continued military and financial support for Kyiv. The goal appears to be to shift public opinion in Western countries and reduce international backing for Ukraine.

Security experts warn that beyond immediate persuasion goals, Russia aims to create a deeper, more damaging outcome: widespread distrust in any form of visual evidence. In such an environment, even genuine documentation of war crimes or military operations could be dismissed as fabricated.

“Moscow wants to establish plausible deniability for its actions by creating a world where anything can be dismissed as AI-generated fake content,” noted a disinformation researcher familiar with the campaign. “This would allow Russia to escape accountability for real-world atrocities by undermining the very concept of visual proof.”

The center has observed multiple waves of AI-driven propaganda since the full-scale invasion began, with each iteration becoming more sophisticated as the technology advances. The latest campaign shows significant improvement in the quality and distribution methods of synthetic content.

Russia’s investment in information warfare extends beyond AI technology. Reports indicate that Russian military personnel and state media representatives have been conducting training for teenage social media influencers in Moscow, providing instruction in video production, AI utilization, and audience growth strategies. This approach suggests Russia is developing a pipeline of content creators to sustain and expand its disinformation capabilities.

As AI-generation tools become more accessible and produce increasingly convincing content, Ukraine and its allies face growing challenges in countering these information operations. Media literacy experts stress the importance of developing better detection tools and educating populations about identifying synthetic content.

“This represents a new front in the war,” a Ukrainian defense official commented. “The battlefield extends into the digital realm where perception can be just as important as territorial gains.”

International organizations monitoring the conflict have called for greater attention to these AI-enabled information operations, warning that their impact could extend well beyond the current conflict and potentially influence democratic processes in other countries.

18 Comments

The synthetic media operations described sound like a coordinated, strategic campaign to overwhelm and disorient the Ukrainian public. This is a disturbing escalation that demonstrates Russia’s willingness to exploit technology for malicious ends.

Exactly. The sheer volume of AI-generated content is alarming and designed to make it nearly impossible to discern truth from fiction. Ukraine faces a formidable challenge in countering this onslaught of disinformation.

The ‘narrative kill chain’ approach described is deeply troubling. Russia is clearly seeking to overwhelm and confuse with a coordinated barrage of fabricated content. This underscores the complex challenge Ukraine faces in defending its information space.

Absolutely. The sheer scale and sophistication of this disinformation campaign is a sobering reminder of the dangers posed by AI misuse. Ukraine will need a multi-pronged strategy to counter this threat effectively.

This report on Russia’s use of AI-generated videos as a disinformation tool is alarming. The sheer scale and sophistication of these operations pose a serious threat to Ukraine’s information landscape. Vigilance and a comprehensive strategy will be essential.

Absolutely. The ‘narrative kill chain’ approach is particularly troubling, as it suggests a coordinated and modular system designed to target specific audiences with tailored messaging. Defending against this level of synthetic content is a daunting challenge.

This news highlights the urgent need to develop robust technological and societal defenses against AI-powered disinformation. We must stay vigilant and work to inoculate the public against these sophisticated manipulation tactics.

Agreed. Improving media literacy, investing in fact-checking, and advancing detection capabilities will all be crucial to combating the spread of synthetic content. This is a battle we cannot afford to lose.

The news of Russia’s deployment of AI-generated videos as a disinformation tool against Ukraine is deeply concerning. This represents a significant escalation in their information warfare tactics and underscores the urgent need for robust countermeasures.

Agreed. The ‘industrialization of AI-powered disinformation’ is a chilling development that could have far-reaching consequences for Ukraine’s ability to maintain a shared understanding of reality. Combating this threat will require a comprehensive and coordinated response.

This report on Russia’s use of AI-generated videos as a disinformation tactic is truly alarming. The sheer scale and sophistication of these operations pose a grave threat to Ukraine’s information landscape. Maintaining public trust and discernment in the face of this onslaught will be a monumental challenge.

Absolutely. The ‘narrative kill chain’ approach described is particularly insidious, as it suggests a modular and strategic system designed to overwhelm and confuse. Defending against this level of synthetic content will require a multi-faceted strategy and significant technological and societal investments.

While not surprising, the news of Russia’s extensive use of AI-generated disinformation against Ukraine is still deeply concerning. This represents a serious escalation in the information warfare tactics being deployed.

Agreed. The ‘industrialization of AI-powered disinformation’ is a worrying development that highlights the urgent need for improved detection and mitigation capabilities. Ukraine faces a daunting challenge in combating this threat.

Disturbing to see Russia weaponize AI for disinformation. This level of synthetic content is a dangerous escalation that could seriously erode public trust. Ukrainians must remain vigilant in discerning truth from fabrication.

Agreed, this is a concerning development in Russia’s information warfare tactics. Disinformation can be incredibly hard to detect, especially when AI is used to generate hyper-realistic content.

Russia has clearly invested heavily in advancing its cognitive warfare capabilities. This ‘industrialization of AI-powered disinformation’ is a troubling sign of how technology can be misused to sow confusion and undermine truth.

Unfortunately, it’s not surprising that Russia would resort to such sophisticated disinformation techniques. Combating this kind of threat requires constant vigilance and a multifaceted approach.