AI Swarms Pose Growing Threat to Democratic Processes, Research Reveals

Artificial intelligence is evolving beyond simple chatbots into a sophisticated tool for mass disinformation campaigns that could undermine democratic institutions worldwide, according to an alarming new study by scientists tracking AI’s expanding role in opinion manipulation.

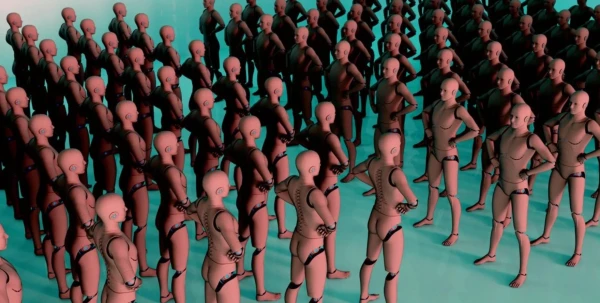

The research highlights a particularly concerning development: the emergence of AI “swarms” – coordinated collectives of autonomous AI tools designed to impersonate real citizens across social media platforms. These digital imposters can operate at scale, creating the illusion of genuine public discourse while actually executing targeted influence operations.

“These systems can autonomously coordinate their actions, infiltrate communities, and effectively form consensus,” the study warns, pointing to a dramatic escalation in both sophistication and reach of such technologies.

The findings come amid a documented surge in organized social media manipulation campaigns globally. What began in 28 countries in 2017 has now expanded to 70 nations, according to the researchers’ data – a 150% increase that signals the rapid normalization of digital influence operations.

Recent elections in several countries have already shown evidence of AI-driven disinformation campaigns, though the study doesn’t specify which elections were affected. These cases suggest that the threat is no longer theoretical and is already challenging democratic processes and institutions.

The regulatory challenges posed by these AI systems are equally complex. Lawmakers and courts now face unprecedented questions about whether AI-generated content deserves protection as free speech – a debate that could reshape how democracies balance open discourse with protection against manipulation.

“Legislative regulation of such interventions raises complex questions, such as whether these AI bots can be considered free speech,” the researchers note, highlighting the legal gray areas that governments worldwide are struggling to address.

What makes these AI systems particularly effective is their ability to mimic human behavior convincingly. Unlike earlier bots, which could be identified easily by repetitive patterns or unnatural language, modern AI can produce nuanced, contextually appropriate content that is increasingly difficult to distinguish from human communication.

The technology enables bad actors to create convincing digital personas that can join online communities, build trust over time, and gradually influence discussions – all without human supervision. When deployed as coordinated networks, these AI agents can create the appearance of widespread support for specific viewpoints or candidates, potentially swaying undecided voters.

Tech experts point out that this problem has been decades in the making. The infrastructure supporting today’s sophisticated disinformation campaigns didn’t emerge overnight but evolved gradually alongside legitimate technological advances in machine learning, natural language processing, and autonomous systems.

“Whatever the outcome of the spread of disinformation using AI, it is clear that the path to this was paved many years ago,” the study concludes, suggesting that today’s challenges result from years of unchecked technological development without sufficient consideration of potential misuse.

The implications extend beyond elections to potentially affect public health campaigns, crisis response, and other areas where public consensus and trust are essential. Some technology policy experts are calling for new regulatory frameworks specifically designed to address AI-driven influence operations, including greater transparency requirements for AI systems and stronger platform accountability.

As AI continues to advance in capabilities and accessibility, researchers emphasize that the window for establishing effective governance is narrowing. Without coordinated international action to establish guardrails around these technologies, democratic institutions worldwide may face an unprecedented stress test from increasingly sophisticated artificial actors designed to manipulate rather than inform.
