In a concerning development for democratic institutions worldwide, scientists are sounding the alarm about artificial intelligence’s potential to manipulate public opinion through coordinated disinformation campaigns. A new study reveals that AI capabilities extend far beyond simple chatbot functions, posing serious threats to information integrity in democratic societies.

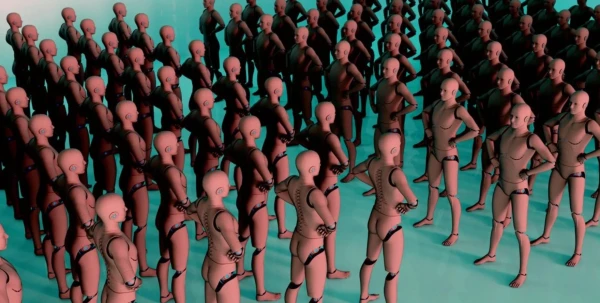

The research specifically examines how large language models and autonomous AI agents can be weaponized to shape public discourse and political outcomes. Of particular concern is the emergence of what researchers term “AI swarms” – collections of autonomous AI tools programmed to impersonate real citizens across social media platforms in a coordinated fashion.

“These systems can autonomously coordinate their activities, infiltrate online communities, and effectively manufacture consensus,” the study warns, highlighting the sophisticated nature of these evolving threats.

The scope of organized social media manipulation has expanded dramatically in recent years. According to the research, such operations have spread from 28 countries in 2017 to 70 countries today, representing a 150% increase in just six years. This rapid expansion underscores the growing accessibility and deployment of advanced manipulation techniques.
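The 150% figure follows from the two country counts given above. It is the percentage increase measured against the 2017 baseline:

```latex
\[
\frac{70 - 28}{28} \times 100\% = \frac{42}{28} \times 100\% = 150\%
\]
```

Put another way, the number of countries with organized manipulation operations grew to 2.5 times its 2017 level (70 / 28 = 2.5).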

Recent elections in several nations have already shown evidence of AI-driven disinformation campaigns, though the study doesn't specify which countries were affected. These cases indicate that the threat is not merely theoretical but is already undermining democratic processes.

The technological sophistication of these AI systems presents unique challenges for regulatory bodies and lawmakers. A particularly complex question emerges around whether AI bots’ communications should be protected as a form of free speech – a legal quandary that remains largely unresolved in most jurisdictions.

Social media platforms, which have struggled to contain human-generated disinformation, now face an even more formidable challenge: AI-generated content that can be produced at massive scale and is increasingly difficult to distinguish from genuine posts. Because these systems can analyze human behavior patterns and adapt their messaging accordingly, traditional content moderation approaches are no longer sufficient.

“Democratic institutions are already under threat from these manipulation methods,” the researchers note, pointing to a rapidly closing window for effective regulation and countermeasures.

What makes these AI-powered disinformation campaigns particularly effective is their ability to simulate authentic human interaction patterns. Unlike obvious propaganda or clearly automated accounts of the past, modern AI systems can engage in nuanced conversations, respond to current events, and maintain consistent personas across multiple platforms and over extended periods.

Experts suggest that this problem has been years in the making. The technological foundations enabling today's sophisticated disinformation capabilities were established long before their current applications became apparent. The convergence of large language models, autonomous agent technologies, and social network vulnerabilities has created a perfect storm for democratic disruption.

Media literacy experts emphasize that public awareness and education are a critical first line of defense. However, technical and institutional measures, such as improved AI detection tools and stronger platform governance, will likely be necessary components of any comprehensive response.

As governments worldwide grapple with regulating AI development and deployment, this research adds urgency to calls for international cooperation on establishing guardrails for autonomous systems that can impact public discourse and democratic processes.

With elections scheduled in numerous countries over the coming year, including major democracies like the United States and India, the findings take on particular significance. The ability to distinguish between genuine citizen voices and AI-generated content may prove crucial for preserving election integrity and democratic decision-making in an increasingly AI-influenced information environment.

