Social media giants face mounting criticism for inadequate content moderation in Arabic-speaking regions, where extremist content and disinformation campaigns flourish largely unchecked compared to Western markets, according to new research.

The disparity highlights a troubling double standard in how technology companies police their platforms globally, experts say. With 350 million Arabic speakers worldwide representing one of social media’s fastest-growing demographics, the moderation gap poses significant risks.

“Groups don’t have to hide what they are doing because the moderation is so full of holes,” said Moustafa Ayad, Executive Director for Africa, Middle East, and Asia at the Institute for Strategic Dialogue (ISD). “It’s just so frustrating. [The social media companies] did investigations around the U.S. elections, even though they were late. It doesn’t feel like they’re doing that in [the] Middle East, proactively or reactively.”

Ayad’s recent research uncovered a sophisticated network of at least 11 Twitter accounts masquerading as attractive women while disseminating pro-Kremlin propaganda in Arabic. These accounts amassed substantial followings before any action was taken—and even then, the accounts were only removed after POLITICO presented Twitter with ISD’s evidence.

This case exemplifies a broader pattern where content that would trigger immediate removal in English often remains accessible in Arabic for extended periods. The inconsistency reflects both technological and cultural challenges facing major platforms like Twitter, Facebook, and YouTube.

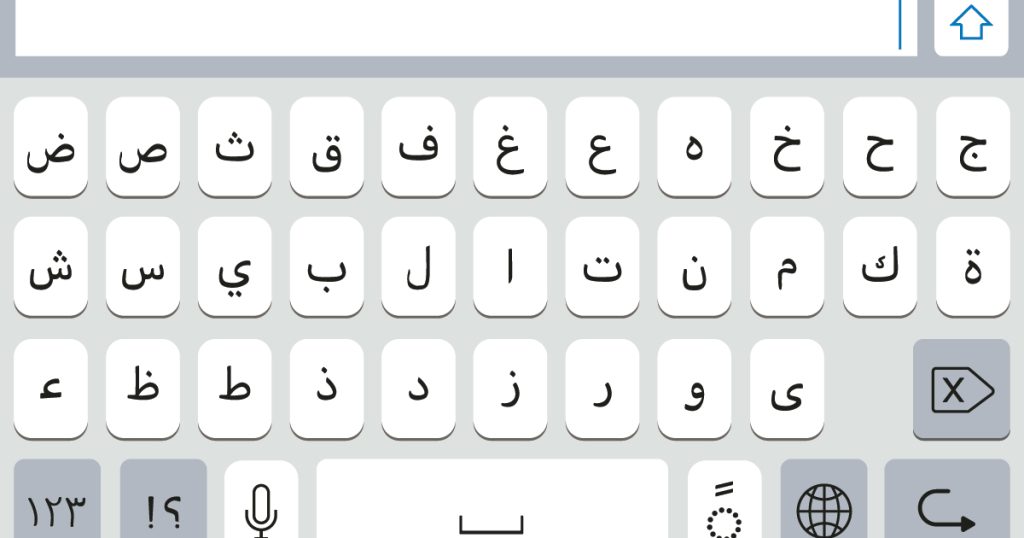

Tech companies have invested heavily in AI-powered content moderation tools, but these systems typically perform best in English and other Western languages. Arabic presents particular difficulties for automated systems due to its complex dialectal variations, rich contextual nuances, and right-to-left script.

Industry insiders acknowledge these technical hurdles but note they’re compounded by inadequate investment in human moderators with appropriate language skills and cultural knowledge. While platforms maintain large teams reviewing English content, Arabic moderation teams remain comparatively understaffed despite the language’s global significance.

The consequences extend beyond mere content policy enforcement. Political disinformation campaigns targeting Arabic-speaking populations can operate with greater freedom than those aimed at Western audiences. Extremist groups similarly exploit these gaps to recruit and radicalize.

The problem has intensified during recent conflicts. During the Israel-Hamas war, researchers documented significant disparities in how violent content was moderated across different languages. Similar patterns emerged during Russia’s invasion of Ukraine, where Arabic became a key battleground for competing narratives.

Media watchdog organizations have repeatedly called for greater transparency from social media companies about their moderation practices across languages. Several have urged platforms to publish language-specific data on content removal rates, response times, and enforcement actions.

For their part, major social media platforms maintain they’re working to improve their capabilities across all languages. However, critics argue progress has been too slow and unevenly distributed, with Arabic-speaking users receiving less protection than their English-speaking counterparts.

The gap also reflects broader questions about Silicon Valley’s global responsibilities. As American companies that have expanded internationally, the platforms face accusations of prioritizing Western markets, where regulatory pressure and public scrutiny are most intense.

Some Middle Eastern governments have responded by threatening stringent regulations or outright bans on platforms they view as unresponsive. However, civil society organizations warn that such measures often prioritize political control over user safety.

What remains clear is that as social media continues to shape global discourse, effective content moderation across languages has become essential to combating extremism and disinformation worldwide. The current disparities suggest there’s substantial work ahead.

For users like those exposed to the network of fake profiles spreading Kremlin propaganda, the status quo means navigating an information environment where bad actors operate with relative impunity. As Ayad’s research demonstrates, until platforms close these moderation gaps, millions of Arabic-speaking users will remain vulnerable to manipulation and exploitation online.

