Deepfake Videos Falsely Show George Will Commenting on Trump Legal Matters

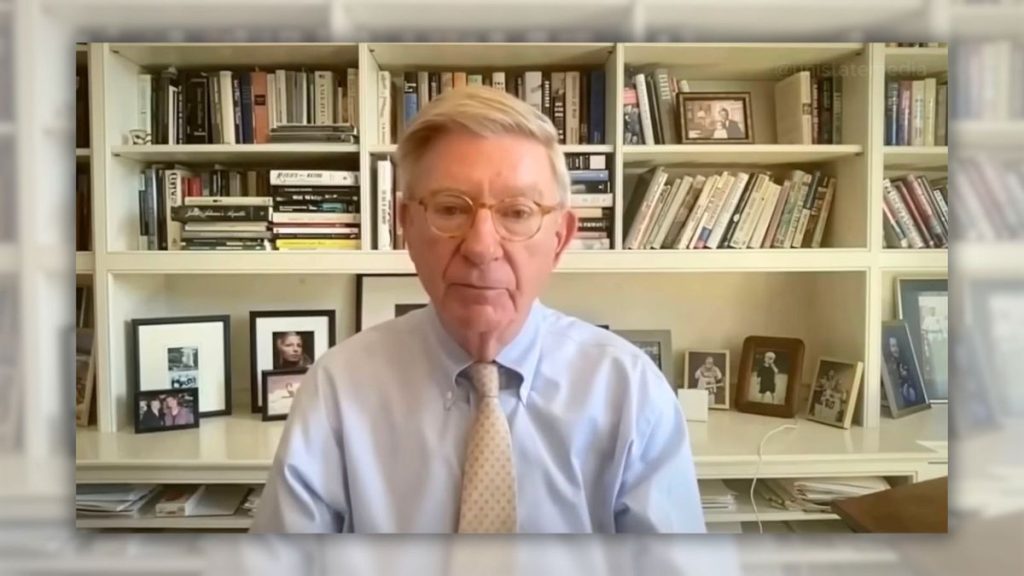

Numerous viral videos purportedly showing conservative columnist George Will discussing recent Supreme Court decisions against Donald Trump are entirely fabricated, an investigation has revealed. The videos, which began circulating in late December 2025, feature Will supposedly commenting on a sealed Supreme Court ruling that gave Trump a “72-hour deadline” to produce financial documents or face jail time.

One of the most widely viewed videos, titled “BREAKING NOW: Trump’s Financial Records Trapped by Supreme Court — 72 Hours or Jail,” displayed an alarming thumbnail showing Trump in handcuffs. The video description claimed the Supreme Court had “stripped Donald Trump of any remaining legal cover” after a “year-long sealed legal battle kept completely out of public view.”

These videos are sophisticated deepfakes created using artificial intelligence to manipulate genuine interview footage of Will, altering his mouth movements and voice to deliver fabricated commentary. The creators used AI to generate both the visuals and the dramatic script that mimics news reporting.

A thorough review of Will’s official social media accounts, his Washington Post columns, and television archives confirms he made no such statements during the time period in question. The Supreme Court did issue a real 6-3 decision on December 23, but it concerned the Trump administration’s attempt to deploy National Guard troops in Chicago, not financial records or compliance deadlines.

Some of these deceptive videos did include YouTube’s “altered or synthetic content” label, though these disclaimers were often buried at the bottom of video descriptions where many viewers would miss them. One example from the LivingGolden YouTube channel used footage of Will from an MLB Network appearance in March 2025 as the base for its manipulation.

The trend extends beyond Will to other political commentators. A channel called “Inside the Capitol” has published similar deepfakes featuring MSNBC host Rachel Maddow supposedly discussing various political developments. The videos have spread across multiple platforms including Facebook and TikTok.

This wave of AI-generated content appears timed to exploit public interest following the actual Supreme Court ruling on December 23 about National Guard deployments. By mixing real news events with fabricated commentary from respected political analysts, these videos create a veneer of credibility that can mislead viewers.

Media experts have repeatedly warned about the dangers of AI-generated deepfakes in the political sphere. These technologies make it harder for consumers to distinguish authentic reporting from fabricated content, particularly when the fake videos incorporate real news events as context.

The Washington Post was contacted regarding these false depictions of its columnist but had not provided comment at publication time. The YouTube channels distributing the fake Will videos offered no contact information, making it difficult to reach the creators directly.

The proliferation of these sophisticated deepfakes underscores the evolving challenges facing social media platforms, news consumers, and journalists as AI tools become more accessible and their outputs more convincing. While YouTube has implemented some labeling systems for altered content, these measures often rely on creator disclosure and may be insufficient to prevent widespread misinformation.

For news consumers, the incident serves as a reminder to verify information through official sources and to approach viral political content with heightened skepticism, especially when it makes dramatic claims about sealed court rulings or imminent legal consequences for public figures.

9 Comments

I’m curious to learn more about the technical capabilities behind these deepfake videos. How are the visuals and audio so convincingly altered? This highlights the need for better detection and mitigation of such synthetic media.

Fabricating news reports and attributing false statements to respected public figures is a serious offense. Generating misleading content like this should be prosecuted to the full extent of the law.

Agreed. Protecting the integrity of information and media is crucial for a healthy democracy.

While deepfakes can be used for entertainment or artistic purposes, this case demonstrates the potential for serious harm when the technology is misused for political gain or to mislead the public.

It’s troubling to see how sophisticated deepfake technology has become. We must be vigilant in verifying the authenticity of online content, especially when it involves prominent public figures.

This is concerning. Deepfake videos can spread misinformation quickly and undermine public trust. I hope authorities act swiftly to identify the creators and take appropriate action.

I’m curious to know if there are any technological solutions or policies being developed to better detect and mitigate the spread of deepfake content. This is a complex challenge that requires a multi-faceted approach.

The widespread circulation of these fabricated videos is alarming. I hope the investigation can uncover the full scope of this disinformation campaign and hold the perpetrators accountable.

Absolutely. Spreading false information, especially about legal matters and the judicial system, is a grave threat that needs to be addressed forcefully.