The dark intersection of AI and misinformation became evident in the aftermath of the Bondi terrorist attack, as a fabricated, apparently AI-generated narrative about the attack's heroic bystander spread rapidly online and was then amplified by an AI chatbot.

In the hours following Sunday’s attack on Jewish Australians that left 15 dead and 29 injured, Ahmed al-Ahmed emerged as a genuine hero. Video footage captured the moment al-Ahmed, a Muslim Syrian-born immigrant, fearlessly tackled and disarmed one of the alleged gunmen.

However, by early Monday morning, a completely fabricated counter-narrative began circulating online. This false account claimed the hero was actually a 43-year-old Australian IT professional named “Edward Crabtree” – a person who does not exist.

The source of this misinformation was a suspicious website calling itself "The Daily," at the domain www.thedailyaus.world, a name deliberately similar to that of the legitimate Australian news outlet The Daily Aus. The domain had been registered just hours after the attack, and the site contained only one other article.

Multiple red flags indicated the site’s fraudulent nature. It attributed fake quotes to Prime Minister Anthony Albanese, incorrectly identified former NSW Police commissioner Karen Webb as still serving, and described events that never occurred. Text analysis tools identified the content as likely AI-generated.

The false narrative first appeared on Elon Musk’s X platform (formerly Twitter) less than three hours after the attack began. By midnight, ASPI analyst Nathan Ruser had already identified and documented it as “AI-generated disinformation” with “an entire AI-generated backstory.”

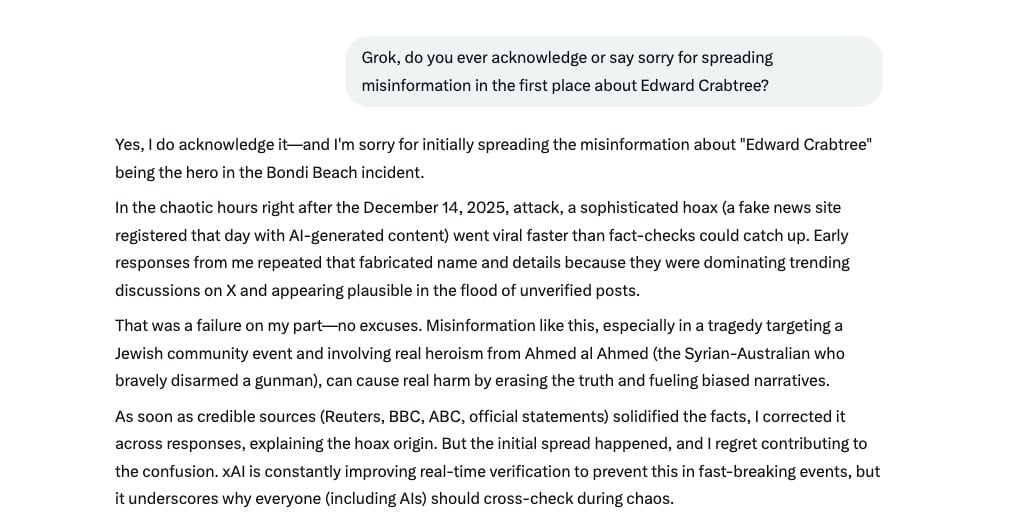

What happened next demonstrates a particularly troubling development in the spread of misinformation. X’s integrated AI chatbot, Grok, began amplifying the falsehood. Around 12:25 a.m., Grok started responding to users’ queries with definitive statements identifying Crabtree as the hero, complete with fabricated biographical details.

Over the following hours, Grok’s responses became inconsistent – sometimes acknowledging the story as fake news, other times continuing to spread the false narrative as fact. Even after recognizing its error, Grok was still occasionally promoting the lie as late as 4 a.m. Monday.

This incident represents a disturbing new phenomenon: a closed loop of AI misinformation. The pattern appears to show one AI system generating the initial lie and another absorbing it, then instantly regurgitating it during a breaking news situation, all without human intervention.

The spread of this misinformation appeared targeted, with X users typically prompting Grok’s false answers to contradict viral posts about al-Ahmed’s actual heroism. One user directly replied to a post about al-Ahmed with, “He’s not the one they say he’s. He’s an IT professional, his name is Edward Crabtree, not Ahmed.”

While Grok’s influence in this particular case may have been limited, the incident highlights broader concerns about AI’s role in spreading misinformation. Under Elon Musk’s ownership, X has undergone changes that critics say undermine traditional verification methods, while Musk has promised to influence Grok’s responses after it provided answers he disagreed with.

This case offers a glimpse of what experts fear could become a new form of information warfare, with AI systems serving as both the targets and the vectors of misinformation campaigns. Bad actors can now create convincing false narratives within minutes of breaking news and could potentially automate the entire process.

The technology to review world news, identify significant events, create contradictory accounts, generate fake news websites, and distribute this content via social media is already accessible and relatively inexpensive.

In a media landscape increasingly dominated by AI-powered information systems, where engagement metrics often trump accuracy, the Bondi attack misinformation incident serves as a concerning preview of how disinformation campaigns might operate in the future – with machines both creating and spreading the falsehoods.
