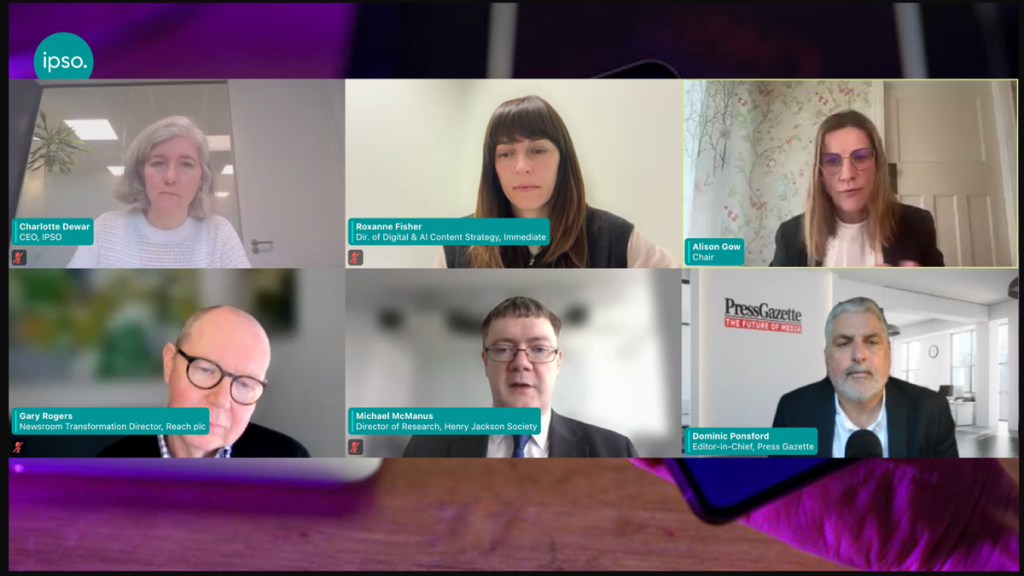

As artificial intelligence rapidly reshapes the information landscape, media professionals face growing concerns about its potential misuse in spreading disinformation. A recent webinar hosted by the UK’s independent press regulator IPSO brought together editors and strategists to examine how AI is being weaponized against journalism and what newsrooms can do to protect their integrity.

Michael McManus, research director at the Nation-states think tank, delivered a sobering assessment of AI’s role in disinformation campaigns. He warned that malicious actors—including hostile nation-states, extremist groups, and ideologically motivated individuals—are leveraging AI to pollute the information ecosystem with unprecedented speed and sophistication.

“The leaps are geometric,” McManus noted, explaining how AI enables mass production of convincing fake narratives, personalized phishing attacks, and increasingly realistic deepfakes. Recent research on Russian operations revealed sophisticated AI-driven social engineering scams targeting high-ranking officials with tailored, credible-sounding messages.

The most alarming prospect, according to McManus, is the approaching “event horizon” for deepfake technology—a point where distinguishing real from fabricated content becomes nearly impossible for journalists. This blurring of reality creates fertile ground for both deliberate disinformation and unwitting misinformation spread by well-intentioned professionals.

McManus advocated for a “verify, then trust” approach, highlighting initiatives like BBC Verify and Finland’s educational curriculum on deepfake detection as potential models for building “herd immunity” against false information. Despite technological advances, he emphasized that human oversight remains essential, as AI tools ultimately reflect the biases of their programmers.

The webinar also addressed growing concerns about deception in the public relations sector. Press Gazette’s editor-in-chief Dominic Ponsford shared findings from the publication’s “Reality Wars” investigation, which uncovered numerous cases where respected news outlets, including The Telegraph, Wired, and Business Insider, published content featuring AI-generated experts and fabricated quotes.

Ponsford described how some PR agencies have “weaponised and industrialised” the process of securing media mentions for brands, exploiting journalists’ traditional trust in press releases. This has resulted in thousands of mainstream media articles featuring entirely fictitious sources and experts.

To combat this trend, Ponsford recommended that journalists adopt a default position of skepticism toward unsolicited emails, verify sources through more secure channels like direct phone calls, and utilize AI-detection tools such as Pangram and Identify-AI. He warned against trusting supposed experts solely based on previous media appearances, noting cases where fabricated sources had been cited in dozens of publications.

Gary Rogers, newsroom transformation director at Reach, one of the UK’s largest news publishers, acknowledged that his organization had been caught publishing fabricated content. “We took the Press Gazette investigation very seriously and removed affected stories,” Rogers said, highlighting the damage such incidents cause to the crucial trust between PR professionals and journalists.

In response, Reach has implemented more rigorous vetting procedures for PR agencies and developed an advisory research assistant tool that flags suspicious emails and checks whether senders are legitimate. Rogers emphasized that Reach combines purchased AI tools with ones developed in-house, under strict governance protocols covering privacy and source protection.

Rogers pushed back against the notion of AI as a “miracle engine” for newsrooms. “They will cause you problems. They could save you effort. You have to get the balance right,” he cautioned of AI tools.

Roxanne Fisher, director of digital and AI content strategy at magazine publisher Immediate Media, shared her company’s approach centered on transparency and responsible experimentation. Immediate Media has been open about its AI implementation from the outset, establishing clear boundaries for appropriate use cases.

To address concerns about data security with external AI models, the publisher built “First Draft,” an internal tool trained exclusively on the company’s own content archives. This system supports research and content repurposing while providing full citations for every output.

“We talk about being here for assisted, not generated AI content,” Fisher explained, underscoring that all AI-produced material undergoes human review. The company has also prioritized staff training through “immersion days” featuring external experts discussing ethics and building confidence in AI applications.

As newsrooms navigate these challenges, the consensus among participants was clear: while AI offers potential benefits for journalism, it requires rigorous safeguards, ongoing education, and a renewed commitment to verification principles that have always underpinned quality reporting.

13 Comments

The battle against AI-driven disinformation is a high-stakes game. Kudos to the IPSO for convening this discussion and raising awareness of the issue. Protecting the integrity of journalism is vital for a healthy democracy.

The sheer scale and sophistication of AI-driven disinformation campaigns is truly alarming. It’s a complex issue with no easy solutions, but I’m glad to see media leaders grappling with it head-on. Protecting the truth has never been more crucial.

Fascinating insight into the evolving landscape of AI and disinformation. The prospect of mass-produced, highly personalized fake narratives is deeply concerning. Kudos to the experts for shedding light on this critical challenge facing journalism.

You’re absolutely right. The potential of AI to undermine the credibility of news sources is a major threat. Developing robust verification and fact-checking processes will be crucial in the fight against disinformation.

The rapid progress of AI is both exciting and concerning. It’s critical that we stay vigilant against its potential misuse in spreading disinformation. Kudos to the IPSO for convening this important discussion on the challenges ahead.

Well said. Disinformation is a serious threat to the integrity of journalism and public discourse. AI tools make it easier to scale up these attacks, so we need robust safeguards and fact-checking mechanisms.

Fascinating insights on the growing threat of AI-driven disinformation. It’s alarming to see how quickly the technology is advancing and being exploited by bad actors. Newsrooms will need robust defenses to protect their integrity in this shifting landscape.

Agreed. AI is a double-edged sword – incredible potential, but also dangerous in the wrong hands. Tackling this challenge will require a multi-pronged approach from media, tech companies, and policymakers.

The potential for AI to be misused in spreading disinformation is truly alarming. Kudos to the IPSO for convening this important discussion and raising awareness of the challenge. Developing robust verification and fact-checking processes will be key to combating this threat.

Absolutely. Collaboration between media, tech companies, and policymakers will be essential in developing effective countermeasures. We must stay vigilant and innovative in the face of this rapidly evolving landscape.

Fascinating insights on the threat of AI-driven disinformation. It’s deeply concerning to see how quickly the technology is advancing and being exploited by bad actors. Protecting the integrity of journalism has never been more crucial.

The growing sophistication of AI-powered disinformation campaigns is truly alarming. It’s crucial that newsrooms and tech companies work together to develop effective countermeasures. Fact-checking and media literacy will be key to stemming the tide.

Well said. Tackling this challenge will require a multi-stakeholder approach, drawing on the expertise of journalists, technologists, and policymakers. Vigilance and innovation will be essential in this rapidly evolving landscape.