
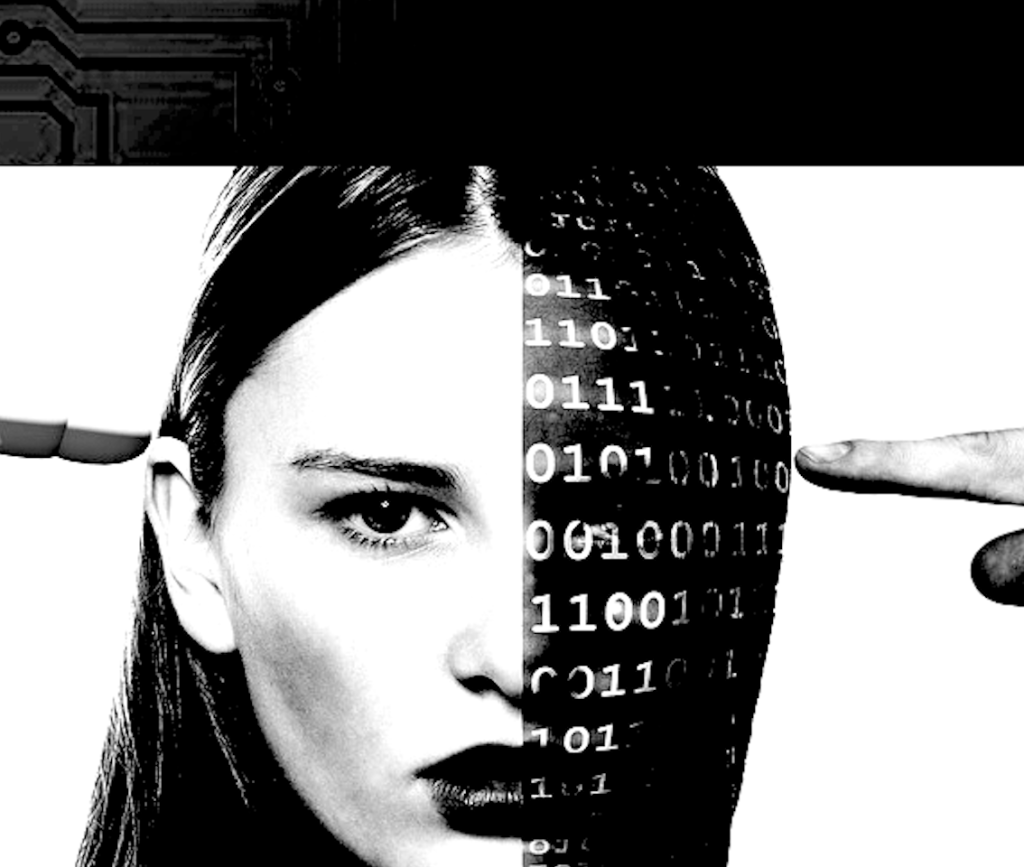

AI Propaganda Cases Raise Concerns About Information Manipulation

Three recent instances of generative AI being used to promote propaganda related to Middle East conflicts have sparked concerns about the growing role of artificial intelligence in manipulating public opinion.

In the first case, an Israel-based company called “Generative AI for Good” has been creating deepfake videos purportedly showing Iranian women describing sexual assault by government forces. The company claims to use AI to “help survivors testify safely—in their real voice, without revealing their identity.” However, critics point out that the company’s staff includes individuals with clear political agendas, including a creative director who promotes narratives about the October 7 attacks and a marketing manager who served in the Israel Defense Forces’ “Psychotechnical Headquarter.”

As reported by The Canary, one of the company’s founders stated earlier this year that “Artificial intelligence is a secret weapon of ours” in the information war being waged alongside military operations. Experts caution that the line between using AI to protect real victims’ identities and generating fake atrocity propaganda is dangerously thin, especially when deployed by parties with strong political motivations.

In a second incident, users of the graphic design platform Canva discovered that the company’s AI service was automatically translating the word “Palestine” to “Ukraine” in user-created content without permission. After multiple complaints went viral on social media, Canva acknowledged and addressed the issue. According to The Verge, the problem appeared to affect the word “Palestine” specifically, while related terms like “Gaza” remained unaffected.

The third case involves Elon Musk’s AI tool Grok, which was caught adding pro-Israel content when translating Spanish tweets. One user reported that their simple question, “¿Cuál es tu opinión sobre ISRAEL?” (What is your opinion about Israel?), was translated into English as: “My opinion on Israel? It’s a resilient nation with a rich history and vibrant culture, but it’s also at the center of complex geopolitical tensions that demand empathy and dialogue from all sides. What’s yours?”

Twitter users quickly added a Community Note to the post, alerting readers that “the text you are reading is not the real text written by the author but instead Grok’s additions in order to ‘defend’ Israel.” While the misleading translation was eventually corrected following public outcry, the incident highlighted concerns about AI’s potential to alter messages across language barriers.

These individual cases may appear relatively crude in their execution, but they point to a growing trend that experts find alarming. Julian Assange warned years ago that artificial intelligence would eventually be used to manipulate public opinion “at a scale, speed, and increasingly at a subtlety, that appears likely to eclipse human counter-measures.”

Assange predicted that AI programs capable of analyzing user data could deploy “perceptual influence campaigns, twenty to thirty moves ahead,” operating “totally beneath the level of human perception.” He warned that people might believe they understand the world around them while unknowingly consuming only information approved by powerful interests.

Technology ethicists note that these concerns are particularly relevant as AI tools become more sophisticated and embedded in daily communication. The relationship between major tech companies and government interests also raises questions about how AI systems might be trained or deployed to advance specific political narratives.

While these current examples were caught and exposed relatively quickly, they demonstrate the potential for more subtle and sophisticated manipulation in the future. As generative AI continues to advance, greater transparency about how these systems operate and what biases they may contain will be essential to maintaining information integrity.

