As artificial intelligence tools become increasingly accessible to the public, healthcare professionals are raising serious concerns about patients using AI platforms for medical advice instead of consulting physicians.

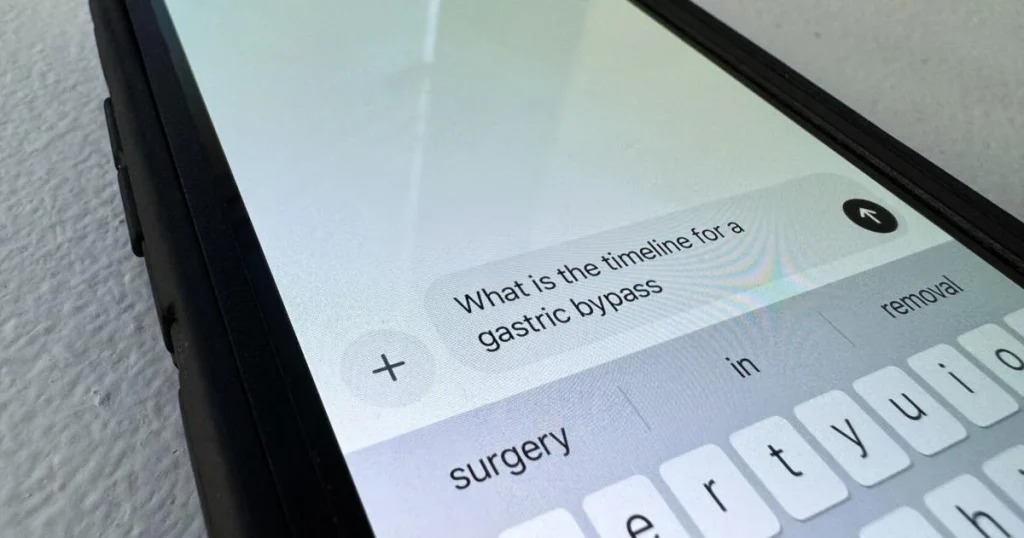

Medical experts across the country report a troubling trend of patients arriving at appointments having already consulted AI chatbots like ChatGPT or Google’s Bard about their symptoms, often armed with incorrect self-diagnoses or inappropriate treatment plans.

Dr. James Wilson, an internal medicine specialist at Northwestern Memorial Hospital, has witnessed this phenomenon firsthand. “I’ve had patients come in convinced they have rare conditions because an AI told them so, when in reality, their symptoms pointed to something much more common and treatable,” he explained. “The danger is that these platforms sound authoritative but lack the clinical judgment developed through years of medical training.”

The American Medical Association recently issued guidance cautioning against over-reliance on AI for medical decisions. Its report highlighted several documented cases in which patients delayed seeking proper medical care after receiving reassuring but incorrect assessments from AI platforms.

One particularly concerning case involved a 42-year-old woman who experienced chest pain but was told by an AI system that she likely had acid reflux. When she finally visited an emergency room three days later, doctors discovered she had suffered a minor heart attack.

“AI systems are trained on vast amounts of text data, but they don’t have the ability to physically examine a patient, order tests, or interpret subtle clinical signs,” explained Dr. Sarah Mendez, a digital health researcher at Johns Hopkins University. “They’re essentially making educated guesses based on pattern recognition.”

Healthcare technology experts note that current consumer-facing AI tools weren’t specifically designed for medical diagnosis. Unlike FDA-approved clinical decision support systems used by healthcare providers, public AI chatbots haven’t undergone rigorous testing for accuracy in medical applications.

The issue extends beyond incorrect diagnoses. Some patients are using AI to interpret their lab results or imaging reports, potentially missing critical nuances that would be apparent to trained medical professionals.

“There’s also the problem of confirmation bias,” said Dr. Michael Chen, a primary care physician in Seattle. “People often search for explanations that match what they already suspect or fear, and AI systems might inadvertently reinforce these concerns without the balanced perspective a doctor would provide.”

Medical misinformation, already a significant challenge in the digital age, may be amplified by AI systems that occasionally “hallucinate” or generate fictitious information presented as fact. This can include fabricated medical studies or treatments that don’t exist.

Industry analysts predict the global market for AI in healthcare will exceed $120 billion by 2028, reflecting the technology’s growing integration into various aspects of medicine. While healthcare providers generally welcome AI advancements that can enhance their diagnostic capabilities or streamline administrative tasks, most draw a clear line at patients substituting AI consultation for professional medical care.

Insurance companies are also monitoring the trend closely. Some health plans are exploring how to integrate validated AI tools into care pathways while discouraging reliance on unregulated platforms.

Despite these concerns, experts acknowledge that AI can play a positive role in healthcare when used appropriately. “These tools can help patients better articulate their symptoms or understand general health concepts,” noted Dr. Mendez. “The problem arises when they’re used as replacement rather than supplement to professional medical care.”

Healthcare providers recommend that patients use reputable sources such as the CDC or Mayo Clinic websites for general health information. Patients who do use AI tools should treat the information cautiously and always discuss their findings with qualified healthcare professionals.

“Technology has tremendous potential to improve healthcare,” Dr. Wilson concluded. “But the relationship between doctor and patient remains irreplaceable. AI simply doesn’t have the clinical intuition developed through years of treating actual patients.”
