A viral video purporting to show a teacher in a headscarf leading white children in Islamic prayers has been identified as an AI-generated fake by experts who analyzed the footage for Reuters.

The 15-second clip, which began circulating widely on social media on November 7, was designed to resemble CCTV footage and carried a timestamp of 10:24 on November 6, 2025. In the video, approximately a dozen children in school uniforms are shown kneeling on prayer mats in a classroom setting. They are being led by a woman wearing a headscarf who speaks with a British accent.

The footage depicts the children raising their hands and repeating “Allahu Akbar” (“God is Great” in Arabic) after the teacher’s prompt. The teacher then appears to sit down and instructs the children to repeat another phrase: “Subhan Allah al-A’la” (“Glory be to God the Most High” in Arabic).

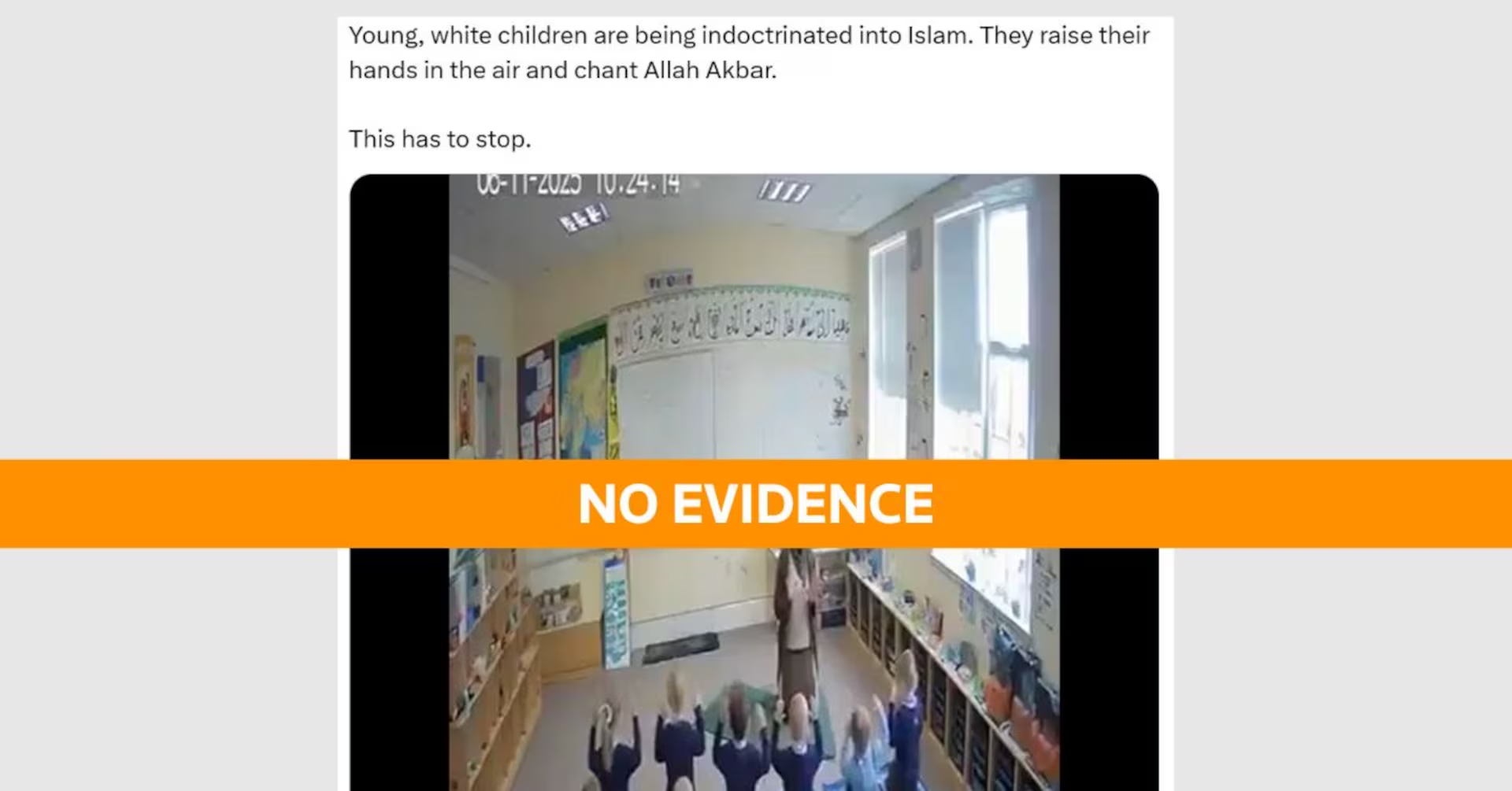

The clip rapidly gained traction on social media platforms, particularly on X (formerly Twitter), where one post garnered 1.8 million views with the inflammatory caption: “Young, white children are being indoctrinated into Islam. They raise their hands in the air and chant Allah Akbar. This has to stop.” Another post that received 1.1 million views described the content as “sick” and referred to it as “Muslim indoctrination.”

However, multiple technical experts who reviewed the footage found clear signs that it was generated by artificial intelligence and is not authentic.

Siwei Lyu, a computer science professor at the University at Buffalo, told Reuters via email that the clip “exhibits multiple signs of AI generation.” He identified several visual anomalies that would not be present in genuine footage, including the teacher appearing to sit on an invisible chair and her face showing distortion.

Lyu also pointed out that students in the front row had heads that stretched unnaturally, wall decorations and text changed throughout the brief video, and a girl’s twin braids inconsistently appeared and disappeared – all telltale signs of AI-generated content.

Rob Cover, a professor of digital communication at RMIT University in Australia, concurred with this assessment, noting additional indicators of AI generation. He described the audio as having a characteristic “metallic sounding” quality common in lower-quality AI-generated content.

From a visual perspective, Cover highlighted how the bodies, heads, and hair of the children appeared less focused than their surroundings – another common artifact in AI-generated imagery. He also noted unusual movement in wall posters, inconsistencies in a girl’s hair tie, and most conclusively, the absence of a chair when the teacher appears to be sitting down.

This incident illustrates the growing sophistication and danger of AI-generated disinformation, particularly content designed to inflame cultural and religious tensions. The clip's CCTV-style overlay, timestamped the day before it began circulating widely, helped it pass as current footage among the many viewers who shared it.

The rapid spread of this falsified content underscores the challenges facing social media platforms and users in distinguishing between authentic and AI-generated material. As AI technology becomes more accessible and advanced, the potential for creating convincing fake videos that promote divisive narratives increases.

Media literacy experts emphasize the importance of critical evaluation of online content, particularly videos that depict inflammatory scenarios. Key warning signs include visual inconsistencies, unnatural movements, and audio that sounds slightly processed or metallic.

The Reuters Fact Check team, which regularly investigates viral claims and content, concluded there is no evidence to suggest the video is authentic, pointing to the multiple inconsistencies identified by AI forensics experts as clear indicators of artificial generation.

As AI-generated content becomes more prevalent, the need for sophisticated detection tools and greater public awareness about the markers of synthetic media becomes increasingly urgent for preserving information integrity in digital spaces.

14 Comments

It’s troubling to see how easily misinformation can spread, even when the source appears to be fabricated. This highlights the need for media literacy and critical thinking skills, so people can identify potential AI-generated content.

This is a concerning development if the video was indeed fabricated using AI. The ability to create realistic-looking but false footage could have serious implications for public discourse and trust. Rigorous analysis is needed.

You make a good point. The spread of AI-generated misinformation is a growing challenge that will require robust fact-checking efforts and media literacy education to combat.

The ability of AI to create such convincing fakes is certainly concerning. While the content of this particular video is sensitive, I’m glad to see experts analyzing it to understand the technology behind these kinds of manipulations.

You’re right, it’s important to approach these topics with care and nuance. Relying on credible sources and fact-checking is crucial to prevent the spread of misinformation, regardless of the technology used to create it.

The ability of AI to create such convincing fakes is certainly worrying. While the content of this video is sensitive, it’s important to approach the issue objectively and rely on credible sources to understand the facts. Fact-checking and media literacy education will be key to combating the spread of misinformation.

While the content of the video is sensitive, I’m glad experts are investigating the potential use of AI in creating this kind of footage. Understanding the technologies behind misinformation is key to developing effective countermeasures.

Absolutely. Staying vigilant and relying on credible sources is crucial, especially when controversial topics are involved. Fact-checking is essential to prevent the spread of false narratives.

The use of AI to create fake videos is a troubling development that could have significant consequences for public discourse. I’m glad to see experts investigating this case thoroughly to better understand the technology and its implications.

Absolutely. Identifying and addressing the spread of AI-generated misinformation is crucial for maintaining a well-informed and healthy public dialogue. Fact-checking and media literacy education will be important tools in this effort.

This report highlights the importance of critical thinking and media literacy, especially when it comes to viral content. The potential for AI-generated fakes is concerning, and I’m glad to see experts analyzing this case to better understand the technology and its impacts.

This is a complex issue that requires a balanced and thoughtful approach. While the potential for AI-generated misinformation is worrying, it’s important to avoid inflaming tensions or making unfounded claims. Rigorous analysis and fact-checking are key.

Interesting report on the potential use of AI to create disinformation. Verifying the authenticity of viral videos is crucial, as they can easily spread misinformation. I’m curious to hear more expert analysis on this particular case.

Agreed, it’s important to be cautious about such viral content and rely on credible sources to understand the facts. AI-generated fakes can be quite convincing, so fact-checking is essential.