AI Warfare: The Evolution of Information Manipulation in Modern Conflict

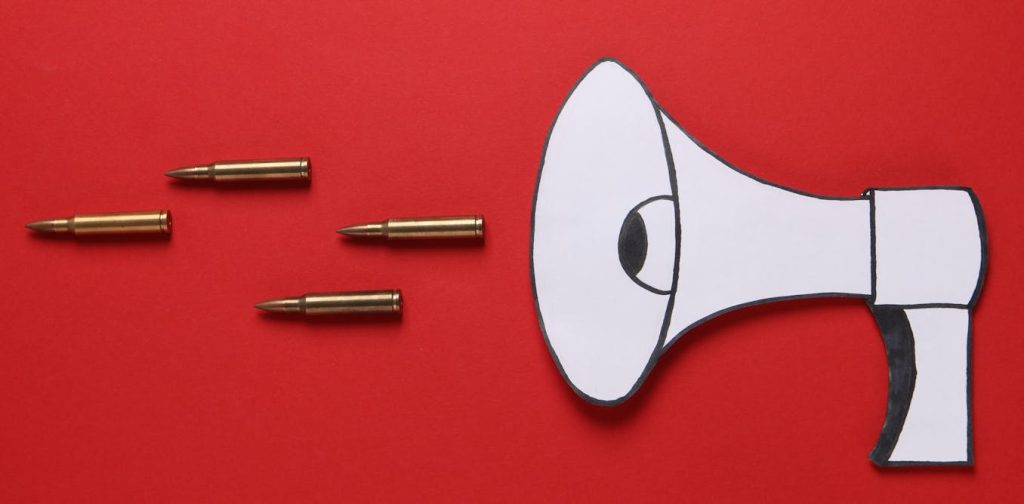

While artificial intelligence may be a relatively recent technology, information warfare has been a staple of human conflict throughout history. For thousands of years, armies and nations have deployed propaganda, deception, and psychological operations to influence enemy decision-making and morale.

The Mongols of the 13th century demonstrated an early form of psychological warfare by destroying entire cities, allowing word to spread to neighboring regions. This strategic brutality often broke the morale of potential adversaries, compelling them to surrender before Mongol troops even arrived at their gates.

As technology advanced, so did the methods of information warfare. During World War II and the 1991 Gulf War, aircraft dropped leaflets containing propaganda messages to demoralize enemy forces. The Vietnam War saw the emergence of targeted radio broadcasts, with the infamous “Hanoi Hannah” (Trịnh Thị Ngọ) taunting American troops by broadcasting their locations and casualties, effectively undermining their will to fight. The devastating power of radio was later demonstrated during the Rwandan Genocide in 1994, when broadcasts were used to coordinate and incite mass killings.

The advent of cable television created another paradigm shift. The 1991 Gulf War marked the first major conflict broadcast on a 24-hour news cycle, replacing periodic bulletins with continuous information streams. This technological transition fundamentally shaped public perception, with coverage often skewed toward national interests, leading historians to label it the “CNN War.”

Today, we are witnessing the next stage in this evolution—from print, radio, and television to social media and artificial intelligence. If the First Gulf War was the “CNN War,” the 2025-2026 conflicts involving the United States, Israel, and Iran can be considered the first “TikTok War” and the first major “AI War.”

AI has introduced unprecedented forms of information warfare targeting perceptions, information environments, and trust itself. AI-generated videos have fundamentally transformed how both state and non-state actors conduct information campaigns, manipulate populations, and compete for narrative control—not just in regional conflicts but on the global stage.

This “synthetic media” serves two primary purposes: falsifying footage of actual events (such as fabricating devastating military attacks or creating fake videos of officials pleading for a ceasefire) and producing obviously fictional but highly effective propaganda material. A prime example of the latter is Iran’s viral Lego videos, which have mocked both Israel and the United States throughout recent conflicts.

The Digital Battlefield and Hyperreality

To comprehend AI’s disruptive potential in warfare, we can look to the dystopian speculations of science fiction. Author William Gibson, who popularized the term “cyberspace” in his 1984 novel “Neuromancer,” described it as a “consensual hallucination”: a graphic representation of data, not reality itself.

However, when digital tools like AI videos and social media function as weapons, the boundary between cyberspace and physical reality becomes increasingly blurred. They create what French media theorist Jean Baudrillard termed “hyperreality”—a condition where the distinction between reality and simulation collapses, and the simulation feels “more real than real.”

Baudrillard’s concept of “simulacra” is particularly relevant: these are copies or representations that increasingly lose connection to original reality. Iran’s Lego videos exemplify this concept, depicting surreal scenarios such as Trump and Netanyahu using war as a distraction while worshiping pagan deities. Despite having no connection to the actual Danish toy company, these videos have gained enormous traction globally as viral propaganda.

Medium as Message in the AI Era

Media theorist Marshall McLuhan’s famous phrase “the medium is the message” takes on new significance in the AI era. While Iranian, American, and Israeli AI videos contain vastly different content, the medium itself communicates something profound: these videos transcend geographical boundaries in unprecedented ways.

Unlike traditional pamphlets, radio broadcasts, or television networks, AI content can be both produced and consumed anywhere—Tehran, Tel Aviv, Washington, or any corner of the globe. This has spawned a new era of borderless, decentralized, viral digital diplomacy that operates outside traditional communication channels.

Deepfakes and Truth Decay

Unlike obviously fictional content like Iran’s Lego videos, AI deepfakes present realistic but fabricated content that challenges viewers’ ability to distinguish truth from falsehood. Early deepfakes were crude and easily identifiable, but modern versions have achieved photorealistic quality and vocal authenticity that can deceive even experienced observers and automated detection systems.

During the “12-Day War” of 2025 between Israel and Iran, AI deepfakes and video game footage were passed off as combat footage. These fabrications included scenes of destroyed Israeli aircraft, collapsing buildings in Tel Aviv, and Israeli strikes on Tehran. Even when some content was obviously fake, such as a widely shared flight simulator image of a downed Israeli F-35 fighter that was disproportionately sized, it still garnered millions of views and was circulated by networks aligned with Russia to suggest vulnerability in American-made aircraft.

The three most viewed deepfake videos during the 2025 conflict received a combined 100 million views across social media platforms. One particularly deceptive Facebook video depicted Israeli officials supposedly pleading for American intervention, falsely claiming “we cannot fight Iran any longer.”

Legal scholars have described this emerging phenomenon with the terms “liar’s dividend” and “truth decay”: a media environment where AI-generated fakes cast doubt on all evidence, eroding public trust to the point where any image can be dismissed as potentially fabricated.

The conflicts of 2025-2026 demonstrate that alongside conventional arms races for drones, missiles, and defense systems, a parallel competition is unfolding online. The digital revolution, enhanced by advances in AI, has exponentially increased the speed, scale, and sophistication of information manipulation. These conflicts herald a new era of warfare where AI technologies are weaponized to influence, disrupt, and destabilize adversaries in ways previous generations could hardly imagine.
