Researchers have found that efforts to combat misinformation on social media platforms may not always work as expected, and some widely implemented tactics can backfire in surprising ways.

Selectively labeling false content, a common approach on platforms such as Facebook and Twitter, can inadvertently increase users' trust in unlabeled content, even when that content is also false. Because of this "implied truth effect," a warning system with incomplete coverage might be worse than no warnings at all: users may assume that anything without a warning has been verified and is accurate.

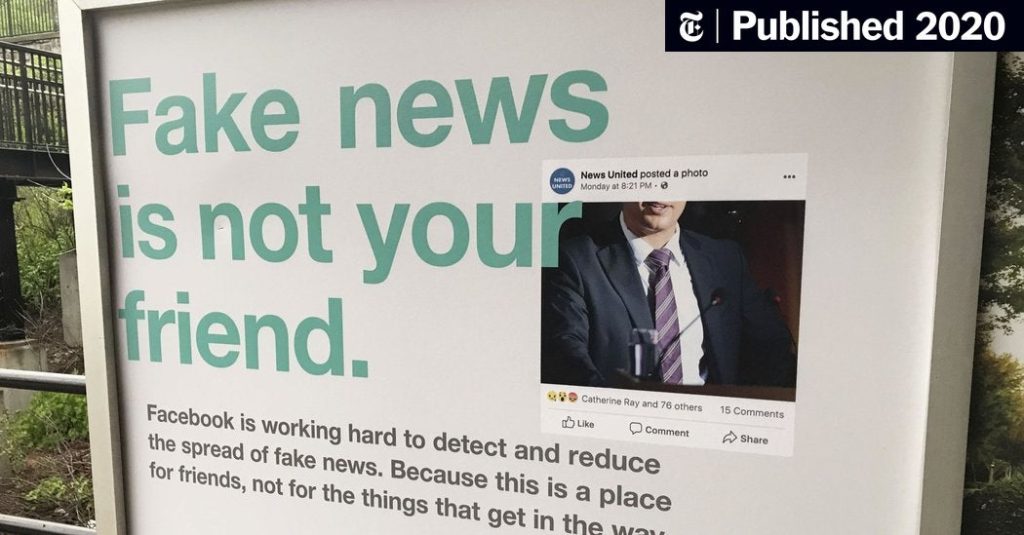

General awareness campaigns about misinformation have proved similarly counterproductive. Facebook's 2017 initiative, with billboards and subway ads declaring "Fake news is not your friend," fell short of its intended impact. Studies suggest that such broad warnings tend to reduce trust in all news sources, reliable or not. Ironically, this outcome matches the goal of many disinformation campaigns: eroding trust in legitimate media.

Despite these setbacks, some intuitive approaches have proven effective. Research confirms that encouraging users to slow down and think more critically does help reduce belief in and sharing of fake news. This “cognitive reflection” approach has shown promising results in multiple studies.

Conversely, some methods that critics initially dismissed have turned out to work. When Facebook proposed surveying users about which news sources they trust in 2018, the idea was widely ridiculed. However, empirical testing by researchers Gordon Pennycook and David Rand showed that this crowdsourcing approach was remarkably effective at identifying sources of misinformation.

“The data showed that collective wisdom from users could reliably distinguish between trustworthy and untrustworthy sources,” said Pennycook, an assistant professor at the University of Regina’s Hill and Levene Schools of Business, and Rand, who teaches at MIT’s Sloan School of Management and its department of brain and cognitive sciences.

The researchers emphasize that social media companies need to move beyond intuition and common sense when developing anti-misinformation strategies. “What sounds reasonable in theory might fail in practice, and what seems counterintuitive might actually work well,” they noted, pointing to the importance of rigorous testing.

This evidence-based approach requires technology companies to invest time in proper research before widespread implementation of new features. While this methodology may delay the rollout of misinformation countermeasures, the researchers argue that effectiveness should take precedence over speed.

The public also has a role to play: giving tech companies enough time to conduct thorough evaluations rather than demanding immediate solutions to complex problems. However, this patience should be conditional on the companies demonstrating a genuine commitment to scientific assessment.

“Social media platforms should be transparent about their internal evaluations and collaborate more with independent researchers who will publish accessible reports,” the researchers suggest. This transparency would build public trust while ensuring accountability.

The challenge of combating online misinformation highlights a fundamental tension between quick action and effective intervention. As platforms face increasing pressure to address the spread of false information, the research indicates that hasty implementation of untested solutions could exacerbate rather than solve the problem.

For effective oversight of technology companies in this domain, stakeholders must prioritize evidence-based approaches that demonstrably reduce misinformation, even if developing these solutions takes longer than initially hoped. As Pennycook and Rand conclude, “Proper oversight of these companies requires not just a timely response but also an effective one.”