
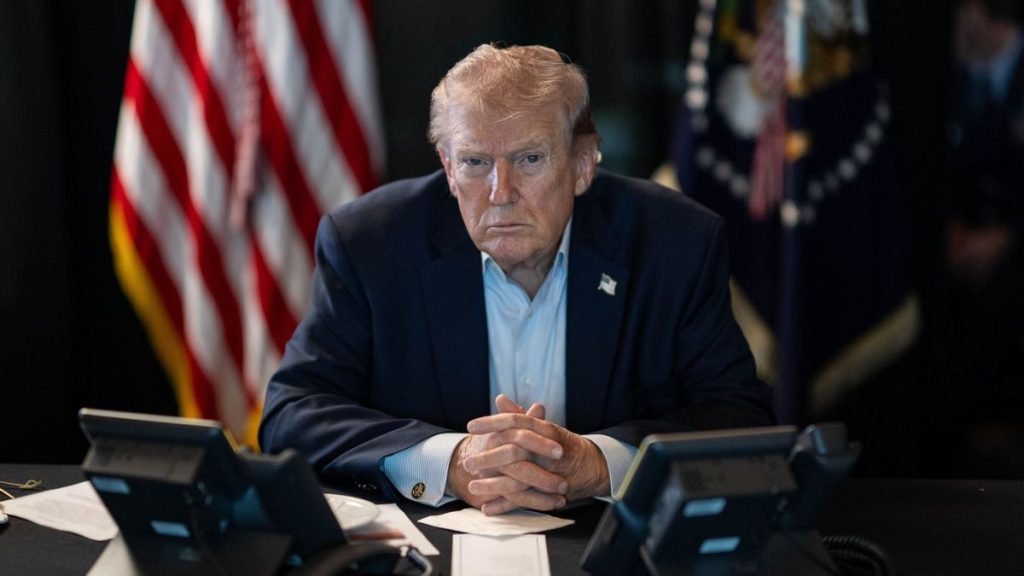

Fact Check: Debunking Viral “Trump-Epstein Files” Audio Recording

A fabricated audio clip purporting to feature President Donald Trump panicking about the release of Jeffrey Epstein-related files has been circulating widely across social media platforms since late February, gaining significant traction amid escalating tensions in the Middle East.

The recording, which began spreading widely shortly after U.S. and Israeli forces launched airstrikes against Iran on February 28, purports to capture Trump in a profanity-laced tirade, instructing staff to prevent the release of documents connected to convicted sex offender Jeffrey Epstein.

“[We’re] not releasing the Epstein files! F*** Marjorie Taylor Greene. I don’t care what you do. Start a f***ing war. Just don’t let ’em get out. If I go down, I will bring all of you down with me,” the voice in the recording states.

Forensic analysis has confirmed the audio is fabricated. It was created using Sora 2, OpenAI’s artificial intelligence tool for generating realistic video and audio content.

The original clip appears to have first surfaced on Facebook with two distinct watermarks—one for TikTok user @fresh_florida_air and another for Sora 2 user @bradbradt31. The TikTok account, which has since been removed, previously hosted numerous AI-generated videos, including the Trump recording, which was initially posted on November 16, 2025.

Before the account’s removal, its owner acknowledged in private messages to fact-checkers that both the visuals and audio were “fully AI-generated creative elements” designed for “artistic experimentation and social commentary” rather than representing actual events or statements.

“My intent is creative expression, not presenting anything as factual,” the account owner stated.

This wasn’t the only fabricated Trump audio produced by this creator. Another clip from the same account depicted Trump saying, “I don’t f***ing care how long this shutdown lasts. We will not lose to the Democrats. We will not release the Epstein files. I don’t care if the entire country starves.” That recording abruptly ended with Trump supposedly saying, “SNAP benefits can go to…”

The video’s viral spread coincides with heightened international tensions and ongoing congressional investigations into connections between high-profile figures and Jeffrey Epstein. This context likely contributed to the clip’s rapid dissemination across multiple platforms, where it was frequently shared without the original AI disclosure.

Media analysts note this incident highlights the increasing sophistication of AI-generated content and the challenges it presents for information integrity. The Sora 2 tool, released by OpenAI in September 2025, represents a significant advancement in AI’s ability to create convincing synthetic media that can be difficult for average users to identify as fake.

“This type of sophisticated AI forgery represents a growing concern for election security and public discourse,” said Dr. Melissa Hartman, digital misinformation researcher at Columbia University. “The technology has advanced to where these fakes can spread widely before they’re debunked.”

The video appears designed to play into existing conspiracy theories connecting geopolitical events to the Epstein case, which has remained a controversial topic since the financier’s death in 2019. Congressional hearings involving former President Bill Clinton and former Secretary of State Hillary Clinton regarding Epstein have further kept the matter in public discourse.

Social media platforms have taken varied approaches to addressing this content, with some removing the videos while others have applied warning labels identifying them as AI-generated. Digital literacy experts recommend users approach emotionally charged political content with heightened skepticism, particularly when it appears to show public figures making extreme statements that haven’t been reported by major news organizations.

This incident underscores the ongoing challenge of navigating an information landscape increasingly populated by convincing synthetic media, particularly as the 2026 election cycle approaches.


10 Comments

While the technology behind this fabricated audio is impressive, it’s deeply troubling to see how easily disinformation can spread online. We must remain vigilant, rely on authoritative sources, and do our part to combat the erosion of trust in our institutions and leaders.

I’m glad to see this ‘leaked call’ audio has been definitively debunked as a fake. In an era of AI-generated deepfakes, we must all be critical consumers of online content and prioritize fact-based reporting over sensationalized or unverified claims.

This ‘leaked call’ seems to be a fake audio clip generated using sophisticated AI tech. It’s troubling to see such convincing disinformation circulating, undermining trust in our leaders and institutions. We should be vigilant about verifying claims before spreading them further.

I’m glad this audio recording has been debunked as fabricated. With deepfakes and AI-generated content becoming more advanced, it’s crucial we approach online claims with healthy skepticism and rely on authoritative, fact-based sources. Spreading unverified information can have serious consequences.

Agreed. Fact-checking and media literacy are essential skills in this digital age. We must be cautious consumers of information, always seeking to verify claims before amplifying them.

This debunked ‘leaked call’ audio is a prime example of the challenges we face in the digital age. As AI-powered misinformation becomes more sophisticated, we all have a responsibility to think critically, verify claims, and avoid amplifying unsubstantiated narratives.

It’s troubling to see how easily fabricated audio clips can go viral these days. I’m glad this one has been debunked, but it’s a reminder that we all need to be critical consumers of online content and do our part to combat the spread of disinformation.

The proliferation of AI-generated deepfakes is a concerning trend that undermines our ability to have meaningful, evidence-based discourse. I’m glad this particular audio clip has been exposed as a fake, but we must stay vigilant against the spread of similar disinformation going forward.

Fabricated audio like this ‘leaked call’ is a growing threat to our ability to have informed, fact-based discussions. We must remain vigilant and rely on authoritative sources to separate truth from fiction, lest we risk further erosion of trust in our institutions and leaders.

This is a concerning example of how AI-powered misinformation can spread rapidly online. While the technology behind these fakes is impressive, we must remain vigilant and hold ourselves and others accountable for sharing only verified, credible information.