The Digital Divide in Misinformation Detection: Understanding the Role of Digital Literacy

In an era when false information spreads faster and more widely than accurate news, understanding how people identify and process misinformation has become crucial for maintaining informed societies. Researchers continue to disagree on precise definitions, but broadly speaking, misinformation is false information spread without intent to deceive, while disinformation is false information deliberately created to mislead.

The phenomenon is hardly new. Misinformation has influenced European politics since the 16th century, and it played a notable role during the French Revolution in the late 18th century. What has changed dramatically is the ease and speed with which false information now spreads, particularly through social media networks.

Studies consistently show that misinformation propagates more extensively than factual news. Perhaps more concerning, repeated exposure to a claim increases its perceived credibility, regardless of its accuracy. This “illusory truth effect” occurs even when people initially recognize the claim as untrue.

The modern media landscape, particularly social networks, has created unprecedented opportunities for individuals and groups to spread misinformation for political, social, and economic objectives. Political actors regularly deploy misinformation campaigns to skew public opinion and influence political systems globally.

“The consequences of this phenomenon are serious,” notes Dr. Nili Steinfeld, whose research in Israel shows a clear majority of citizens believe false messages leave the public confused about basic facts. Similar concerns have been documented in the United States, the United Kingdom, Australia, and many other countries worldwide.

Demographic research offers some insights into who tends to spread misinformation. Age appears to be a significant factor, with older adults more likely to share false content online. However, variables such as income and gender have not consistently predicted misinformation-sharing behavior. Some studies suggest education may play a role, with less educated individuals more likely to unintentionally share false information.

Digital literacy has emerged as a critical factor in combating misinformation. This extends beyond traditional media literacy to encompass the ability to navigate, evaluate, and effectively use digital information technologies. Digital literacy includes technical competence with internet platforms, cognitive abilities to analyze information critically, and practical skills in verifying information.

In our data-driven world, data literacy (the ability to interpret, use, and assign meaning to data) has become an essential component of digital literacy. Experts argue that in an “information distortion” era, we must reconsider what skills and thinking processes are necessary to handle misinformation effectively.

Israel presents a fascinating case study in this regard. The country exhibits one of the widest digital divides among OECD nations, particularly between its ultra-Orthodox (Haredi) and non-Haredi Jewish populations.

The Haredi community emphasizes traditional literacy skills focused on reading and understanding religious texts. Gender differences in education within this community are pronounced—boys’ education centers on Torah study with minimal secular content, while girls receive more secular education to prepare them for supporting their families financially. Despite this emphasis on women’s education, both Haredi men and women demonstrate relatively low digital problem-solving abilities, which hinders their workforce integration.

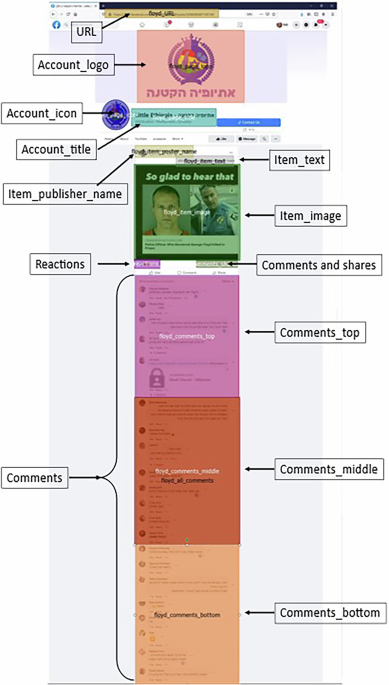

Researchers have begun using eye-tracking technology to objectively measure digital literacy and information assessment behaviors. Unlike self-reported measures, eye tracking provides unbiased data on how people scan webpages and process information. The technology reveals which areas of content receive attention and how people interact with metadata—contextual information that provides clues about content credibility.

Metadata elements such as account details, posting dates, follower counts, and engagement metrics serve as important credibility indicators for digital content. Studies show that people with higher digital literacy pay more attention to these elements when assessing information.
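One common way to quantify attention to such elements is the share of total fixation time that falls inside regions marked as metadata. The sketch below is only an illustration of that idea, not the analysis used in the studies mentioned here: the `Fixation` and `AOI` classes, the region names, and all pixel coordinates and durations are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fixation:
    x: float            # horizontal gaze position in pixels
    y: float            # vertical gaze position in pixels
    duration_ms: float  # how long the gaze rested at this point


@dataclass(frozen=True)
class AOI:
    """A rectangular area of interest on the page (hypothetical layout)."""
    name: str
    left: float
    top: float
    right: float
    bottom: float
    is_metadata: bool

    def contains(self, fix: Fixation) -> bool:
        return self.left <= fix.x <= self.right and self.top <= fix.y <= self.bottom


def metadata_dwell_share(fixations, aois):
    """Return the fraction of total fixation time spent inside metadata AOIs."""
    total = sum(f.duration_ms for f in fixations)
    if total == 0:
        return 0.0
    on_metadata = sum(
        f.duration_ms
        for f in fixations
        if any(a.is_metadata and a.contains(f) for a in aois)
    )
    return on_metadata / total


# Made-up layout of a social media post and a made-up gaze recording.
aois = [
    AOI("account_details", 0, 0, 400, 50, True),
    AOI("post_text", 0, 60, 400, 300, False),
    AOI("engagement_metrics", 0, 310, 400, 350, True),
]
fixations = [
    Fixation(100, 20, 200),   # lands on account details
    Fixation(150, 150, 500),  # lands on post text
    Fixation(200, 200, 100),  # lands on post text
    Fixation(120, 330, 200),  # lands on engagement metrics
]

share = metadata_dwell_share(fixations, aois)
print(f"Share of viewing time on metadata: {share:.0%}")
```

Under this measure, a reader who checks the account and engagement figures before trusting a post would show a higher share than one who reads only the post text.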

Recent eye-tracking studies have revealed distinct patterns when people encounter misinformation: increased eye regressions (looking back at previously viewed content) and higher fixation counts are common when processing false information. These behaviors suggest deeper cognitive processing as readers attempt to evaluate dubious content.
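To make these two measures concrete, the following sketch counts fixations and regressions in a simplified recording. It assumes each fixation has already been mapped to the index of the word it landed on, and it treats any move to an earlier word than the previous fixation as a regression. Both the data and that definition are illustrative assumptions; published studies may define regressions differently.

```python
def fixation_count(word_positions):
    """Number of fixations in the recording."""
    return len(word_positions)


def regression_count(word_positions):
    """Count backward moves: fixations on an earlier word than the one just before."""
    return sum(
        1
        for previous, current in zip(word_positions, word_positions[1:])
        if current < previous
    )


# Hypothetical recordings: each number is the index of the fixated word.
straight_read = [0, 1, 2, 3, 4, 5]               # reader moves steadily forward
with_rereading = [0, 1, 2, 1, 2, 3, 4, 2, 3, 4, 5]  # reader looks back twice

for label, positions in [("straight", straight_read), ("rereading", with_rereading)]:
    print(label, fixation_count(positions), regression_count(positions))
```

In this toy data the second reader covers the same six words but produces more fixations and two regressions, which is the pattern the studies associate with evaluating dubious content.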

As societies grapple with the challenges of widespread misinformation, understanding how different populations process digital information and identifying the specific literacy skills that enable effective misinformation detection will remain crucial research priorities.
