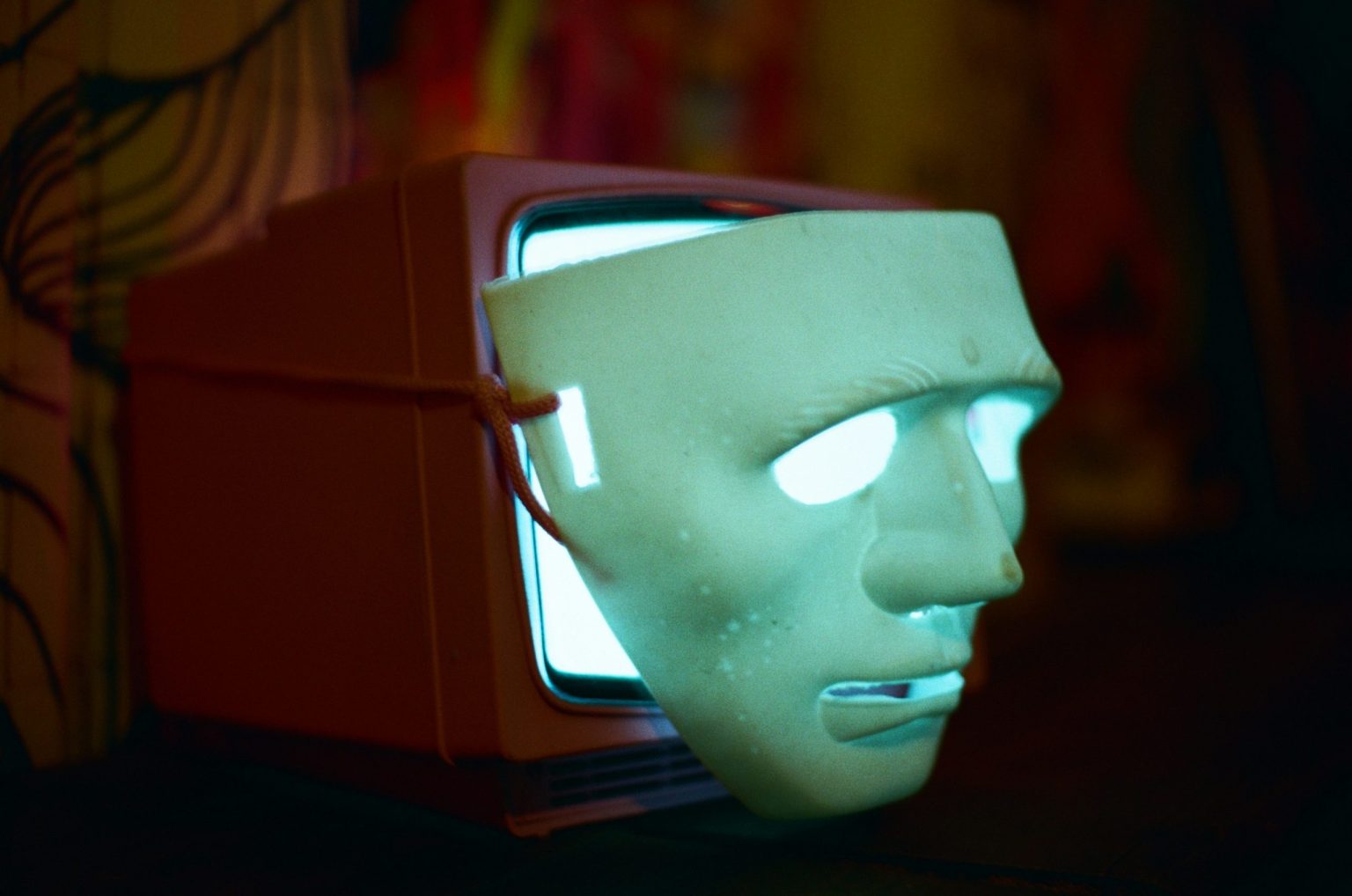

Russia Weaponizes AI for Deepfake Propaganda, Experts Warn

A theater professor at King’s College London, Alan Read, recently discovered something disturbing – his own face and voice promoting anti-European Union propaganda he never created. In a sophisticated deepfake video circulating on social media, an artificial voice nearly identical to Read’s made political statements against French President Emmanuel Macron and other Western leaders, claiming they were aboard a sinking “Titanic” called the European Union.

“Almost everything in the altered video was irredeemably stupid and terrible to listen to,” Read told the BBC, describing his shock at seeing himself portrayed as someone completely alien to his actual views and expertise.

This incident represents just one example of a growing wave of Russia-linked deepfake campaigns targeting Western democracies. Security experts are increasingly concerned about the Kremlin’s expanding influence operations using artificial intelligence tools.

“Not only has there been an increase in deepfakes, but we’re witnessing a fundamental change in how influence operations are conducted,” said Chris Kremidas-Courtney, a defense and security analyst at the European Policy Centre think tank. “Society is now confronted with systems capable of creating large-scale deception at a fraction of the traditional cost, and no current governance system is equipped to combat this effectively.”

The surge in AI-generated propaganda comes as technology companies race to develop and release increasingly sophisticated video generation tools. OpenAI’s latest Sora 2 software has sparked competition among AI companies, many of which are cutting costs by dropping safeguards such as watermarks that could help identify artificial content.

While OpenAI includes watermarks in Sora 2 videos and says it prevents the use of real people’s likenesses without permission, many competing apps have no such restrictions. OpenAI told the BBC it takes action against accounts engaging in deceptive activities aimed at causing harm, including those that mislead about content origins.

The technological competition has fueled both the volume and quality of foreign influence campaign content, strengthening Russia’s hybrid warfare capabilities against Western nations. These deepfake campaigns often target EU institutions and spread anti-Ukraine narratives as Europe struggles with decisions about continued financial aid to Kyiv.

Poland recently experienced a wave of deepfake videos on TikTok featuring young Polish women calling for Poland’s exit from the European Union. Adam Szlapka, a Polish government spokesman, definitively labeled it “a disinformation campaign created by Russia,” noting the videos contained telltale signs of Russian syntax. Polish authorities have called on the European Commission to investigate, while TikTok has removed the videos and accounts responsible.

TikTok reports having dismantled 75 covert influence operations by 2025, a figure that underscores the scale of the problem.

In the United Kingdom, Parliament has raised concerns that Russian deepfake news could influence upcoming local elections in May. The UK’s Online Safety Act requires platforms to remove content created by foreign influence agents, but detection and removal often take time, while videos can go viral within hours.

Tracing the origin of these campaigns presents significant challenges. However, Western researchers note common characteristics across many posts – from stylistic patterns to distribution channels – that connect them to organized disinformation units with links to the Kremlin.

Unlike traditional Russian propaganda outlets such as RT and Sputnik, which faced Western sanctions after Russia’s full-scale invasion of Ukraine, deepfake campaigns provide plausible deniability that makes them considerably more difficult to combat.

The evolution of these AI-powered influence operations represents a significant escalation in information warfare. As detection technology struggles to keep pace with increasingly sophisticated deepfakes, experts warn that democratic institutions must develop more robust defenses against this emerging digital threat.
