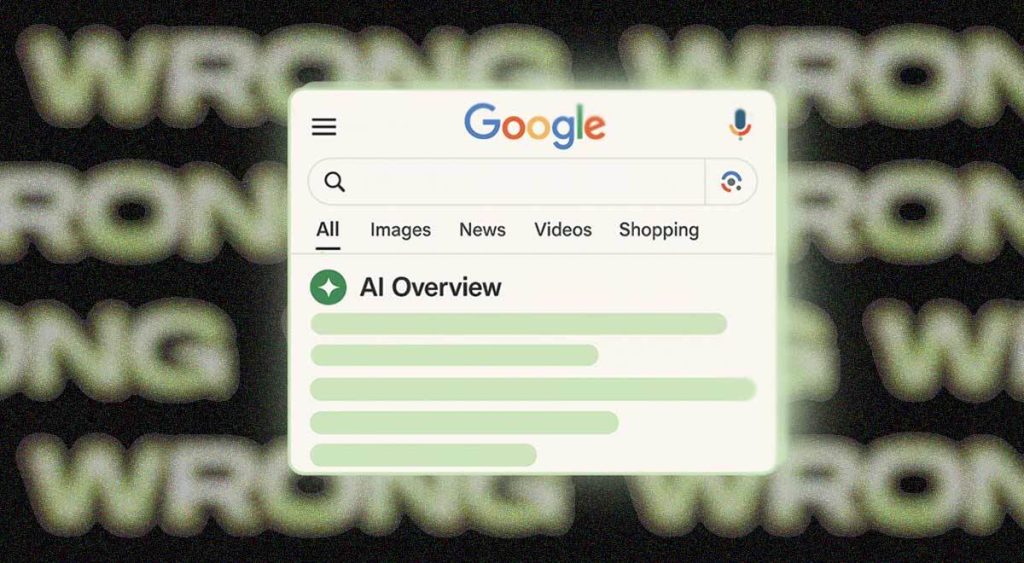

Google’s AI Overviews Shows 91% Accuracy Rate, Still Generates Millions of Errors Daily

A recent study has found that Google’s AI Overviews feature provides accurate responses approximately 91% of the time. While this figure appears impressive at first glance, experts warn that it still represents a concerning volume of misinformation given the massive scale of Google’s search operations.

The analysis, conducted by AI startup Oumi and reported by The New York Times, reveals the double-edged nature of Google’s transformation from a curator of information into a direct publisher of content. With Google processing an estimated 5 trillion search queries annually, a 9% error rate applied across all of them would translate to tens of millions of incorrect answers every hour, or hundreds of thousands of inaccuracies every minute.
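The scale figures can be checked with a back-of-the-envelope calculation. The sketch below is illustrative only: it assumes that every one of the estimated 5 trillion annual queries produces an AI Overview and that the 9% error rate measured on the benchmark applies uniformly to real searches. The function name `error_volume` and the 365-day year are assumptions introduced here, not part of the study.

```python
def error_volume(queries_per_year: float, error_rate: float) -> dict:
    """Estimate incorrect answers per day, hour, and minute.

    Assumes (hypothetically) that every query yields an AI answer and
    that the error rate is the same for all of them.
    """
    hours_per_year = 365 * 24  # ignores leap years
    errors_per_year = queries_per_year * error_rate
    per_hour = errors_per_year / hours_per_year
    return {
        "per_day": per_hour * 24,
        "per_hour": per_hour,
        "per_minute": per_hour / 60,
    }


# Figures quoted in the article: ~5 trillion queries a year, 9% error rate.
estimate = error_volume(5e12, 0.09)
for period, count in estimate.items():
    print(f"{period}: {count:,.0f}")
```

Under these assumptions the estimate comes to roughly 51 million errors an hour and about 860,000 a minute, in line with the "tens of millions" and "hundreds of thousands" cited. The real figure would be lower to the extent that not every search displays an AI Overview.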

Oumi researchers utilized SimpleQA, an industry-standard benchmark test for AI systems, to evaluate the accuracy of Google’s AI-powered summaries that appear above traditional search results. The testing occurred in two phases: first in October using Google’s Gemini 2 model, then again in February after the company upgraded to Gemini 3.

The improvement between versions was notable. Accuracy rose from 85% with Gemini 2 to 91% with Gemini 3, cutting the error rate from 15% to 9%. Gemini 3 is widely considered the least hallucinatory AI model currently available. The analysis focused specifically on 4,326 complex Google searches, providing a substantial sample size for evaluation.
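To put the percentages in terms of individual answers, the following sketch converts the two accuracy rates into approximate counts of wrong answers. It assumes both test phases covered the same 4,326 searches and treats the reported percentages as exact, although they are almost certainly rounded, so the counts are approximations. The names `SAMPLE_SIZE` and `wrong_answers` are illustrative.

```python
SAMPLE_SIZE = 4326  # searches analysed, per the article


def wrong_answers(accuracy: float, sample_size: int = SAMPLE_SIZE) -> int:
    """Approximate number of incorrect answers at a given accuracy rate."""
    return round(sample_size * (1 - accuracy))


before = wrong_answers(0.85)  # October test, Gemini 2
after = wrong_answers(0.91)   # February test, Gemini 3

# Share of the earlier errors that disappeared after the upgrade.
relative_drop = (before - after) / before

print(f"Wrong answers before: {before}")
print(f"Wrong answers after:  {after}")
print(f"Relative reduction in errors: {relative_drop:.0%}")
```

On these assumptions the upgrade removed about 40% of the errors, leaving several hundred incorrect answers within the sample.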

Perhaps more troubling than the raw error rate is what researchers discovered about verification. According to Oumi, more than half of even the accurate responses were classified as “ungrounded,” meaning they linked to websites that didn’t fully support the information provided. This creates significant barriers for users attempting to verify the accuracy of AI-generated summaries.

“Even when the answer is true, how can you know it is true? How can you check?” questioned Manos Koukoumidis, CEO of Oumi, highlighting a fundamental issue with AI-generated responses.

Further complicating matters, Google’s system may produce different answers to identical queries submitted just seconds apart—one potentially accurate and another potentially false. This inconsistency undermines user confidence and makes systematic quality control exceptionally difficult.

The findings come at a critical moment in the evolution of search technology, as major platforms increasingly integrate generative AI capabilities into their core services. Google has positioned its AI Overviews as a convenience feature designed to save users time by directly answering questions without requiring clicks through to source websites.

While Google has added a disclaimer to its AI Overviews feature stating “AI can make mistakes, so double-check responses,” research suggests this caution often goes unheeded. One report found that only 8% of users actually verify an AI’s answers, while another study revealed people continued trusting AI responses 80% of the time even when given incorrect information.

The situation highlights a growing disconnect between public perception of AI reliability and its actual capabilities. Despite significant advances in recent years, large language models still struggle with factual consistency, particularly for nuanced or specialized queries.

For Google, the stakes are particularly high. As the dominant search provider worldwide, errors in its AI-generated responses can quickly reach massive audiences, potentially undermining the company’s reputation for providing reliable information.

Industry analysts note that this issue extends beyond Google to all companies deploying generative AI in consumer-facing applications. The balance between innovation and accuracy remains precarious, with tech giants under pressure to both advance AI capabilities and minimize harmful misinformation.

As AI continues to transform how people access information online, these findings underscore the importance of maintaining robust fact-checking mechanisms and encouraging critical evaluation of AI-generated content, even from trusted technology providers.

12 Comments

As an investor, I’m very concerned about the potential for AI-driven misinformation to disrupt commodity and energy markets. Accurate, reliable information is essential for making sound investment decisions. This report is a wake-up call for the industry.

Absolutely. Maintaining the integrity of market data and analysis is crucial, especially in volatile sectors like mining and energy. I hope regulators and industry leaders work together to address these AI accuracy challenges head-on.

It’s alarming to think about the real-world impact of millions of AI-generated errors per day. This underscores the urgent need for stronger safeguards and accountability measures around the deployment of these powerful technologies.

I agree completely. The scale of these AI systems means even small error rates can have huge consequences. Rigorous testing, transparent reporting, and robust governance frameworks are critical to mitigating the risks.

As a mining investor, I’m concerned about the potential for AI-driven misinformation to impact commodity markets and companies. Rigorous fact-checking and source validation will be critical to avoid costly errors.

Good point. Misinformation around mining and energy could lead to irrational market movements and hurt investors. Regulatory oversight may be needed to ensure AI systems meet high accuracy standards in these sensitive domains.

As someone who relies on accurate commodity and energy market data, this report is concerning. I hope the mining and energy sectors work closely with tech companies to ensure AI systems generate reliable, fact-based information.

This highlights the challenges of scaling AI systems to handle the massive volume of online information. Benchmarking and continuous improvement are crucial, but the errors can have significant real-world impacts if not addressed.

The scale of these AI systems means even a small error rate can have huge real-world consequences. I hope tech leaders take this report seriously and dedicate serious resources to improving the reliability of AI-generated content.

I appreciate the transparency around the limitations of Google’s AI overviews. Acknowledging the problem is the first step, but the industry needs to develop more robust solutions to ensure online information remains trustworthy.

I’m curious to see what steps Google and other tech leaders take to enhance AI accuracy and reduce the spread of misinformation. Transparency around model performance and limitations will be important for building public trust.

Interesting report on the accuracy issues with Google’s AI overviews. While 91% seems high, the sheer volume of searches means millions of errors daily. Clearly more work is needed to improve AI reliability for critical information.