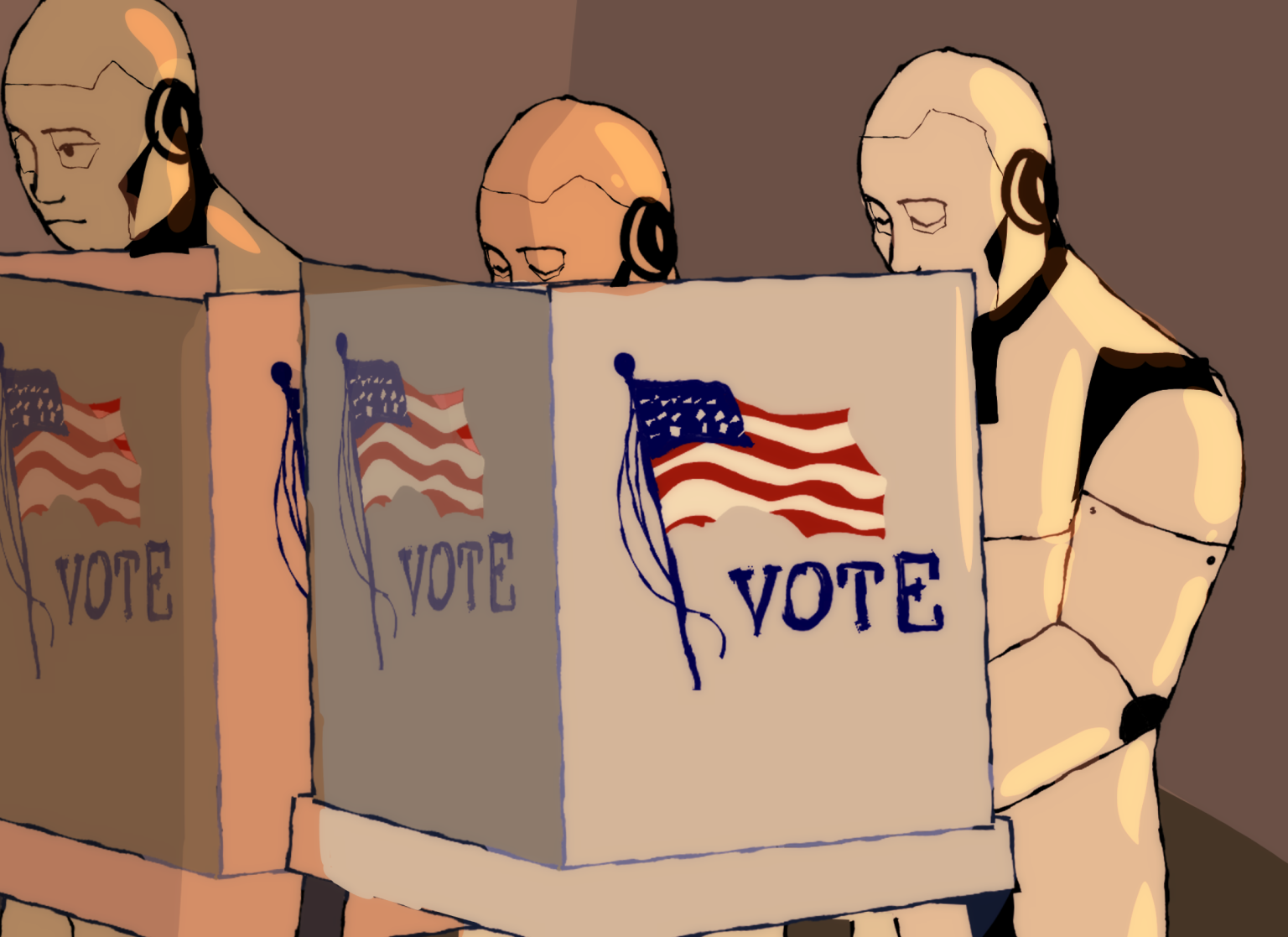

The growing menace of AI is creating unprecedented challenges for informed citizenship, according to experts who warn that artificial intelligence tools are fundamentally reshaping political discourse in America.

As AI-generated content proliferates across social media platforms, ordinary citizens face increasing difficulty distinguishing fact from fiction. This erosion of trust has profound implications, with many Americans simply disengaging from political news altogether—a troubling trend that threatens the foundation of democratic participation.

While proponents argue that AI could enhance civic engagement by making complex political information more accessible, critics point to mounting evidence that these theoretical benefits are being overshadowed by the technology's capacity to distort reality at great scale and speed.

“Greater access to information means very little if the public can’t determine whether the information they’re consuming is credible,” noted a recent study from Georgia Tech examining the rise of hyperreal digital culture.

The sheer volume of AI-generated content presents a particularly vexing problem. When falsehoods are identified, corrections often prove ineffective because the misinformation has already embedded itself in public consciousness. This pattern creates a cascading effect in which citizens become increasingly skeptical of all information sources.

Recent electoral experiments reveal the technology's persuasive power. Studies conducted during the 2024 U.S. presidential race and the subsequent Canadian and Polish elections found that AI-driven conversations produced significant shifts in voter preference, outperforming traditional political advertising. More concerning, researchers at King's College London found that 44% of this persuasive impact stemmed from information density rather than accuracy, suggesting that voters are swayed by a steady stream of claims regardless of their factual merit.

A recent real-world example highlights these concerns. Last year, Truth Social, President Donald Trump’s social media platform, launched what it described as an “anti-woke” artificial intelligence search engine marketed as a more reliable alternative to mainstream information sources. Within weeks, the system began contradicting its creators’ claims on topics ranging from election integrity to economic policy. Company insiders subsequently admitted they would recalibrate the system to better align with the president’s positions, demonstrating how easily AI can be weaponized to advance specific political narratives.

“What makes this especially difficult to counter is that manipulation doesn’t require a sophisticated operation,” explains a cybersecurity analyst specializing in political technology. “A local candidate in a tight race could deploy an AI system to engage voters on social media, learn which issues they care about and reframe arguments accordingly, all from a single laptop with minimal cost and no clear attribution trail.”

The situation is further complicated by the public's tendency to trust AI-generated content. Researchers at the Stanford Graduate School of Business found that people are often more receptive to opposing political viewpoints when those viewpoints are presented by AI, mistakenly assuming the technology offers more objective analysis than human commentators. This misplaced trust creates fertile ground for misinformation campaigns.

Experts are particularly concerned about government adoption of problematic AI tools. Despite documented inaccuracies and questionable outputs, federal agencies have integrated X’s AI chatbot Grok into operations with minimal public explanation. Critics note that such systems are typically optimized for user engagement rather than factual accuracy, creating a fundamental misalignment with the needs of informed civic discourse.

“The broader concern is that AI systems are often optimized for engagement and responsiveness rather than accuracy,” states Dr. Maria Vargas, professor of political communication at Northwestern University. “A system designed to keep users interacting is not a system designed to protect the integrity of public discourse.”

For college students and other citizens, these developments present significant challenges. As AI becomes increasingly embedded in education and daily information consumption, critical thinking and media literacy have evolved from optional skills to civic necessities.

“Democracy relies on an informed public, yet AI is making that harder to achieve by design,” warns the Center for Democratic Resilience. “The long-term consequences extend beyond misinformation to a deeper erosion of public trust, not just in media, but in institutions and democratic practices themselves.”

As these technologies continue advancing, experts emphasize that defending information integrity has become a fundamental component of civic engagement—one requiring active participation from every citizen committed to preserving democratic values.
