
X Platform Cracks Down on AI-Generated War Videos to Combat Misinformation

Elon Musk’s social media platform X announced a significant policy change on March 3, targeting creators who post artificially generated war footage without proper disclosure. The platform will suspend users from its lucrative Creator Revenue Sharing program for 90 days if they fail to clearly label AI-generated videos depicting armed conflicts.

Nikita Bier, X’s head of product, explained the decision in a post on the platform, emphasizing the critical need for authentic information during wartime. “With today’s AI technologies, it is trivial to create content that can mislead people,” Bier stated.

Under the new policy, first-time violators lose access to revenue sharing for those 90 days, while repeat offenders face permanent removal from the monetization program. X plans to identify violations through technical detection tools, metadata analysis, and Community Notes, the platform's crowd-sourced fact-checking system.

The timing of this policy shift coincides with escalating tensions in the Middle East involving the United States, Israel, and Iran. Recent weeks have seen Israeli and American strikes on Iran’s military and nuclear facilities, reportedly resulting in the death of Iran’s Supreme Leader Ali Khamenei and other top officials. Iran subsequently launched retaliatory missile attacks targeting U.S. military installations across the region and various locations in Israel.

During such high-stakes conflicts, visual content plays a crucial role in shaping public perception. The same conditions, however, also allow manipulated or synthetic imagery to spread misinformation rapidly.

The scale of the problem became evident when X recently uncovered a coordinated network of 31 accounts operated by a single individual in Pakistan. These compromised accounts had their usernames changed to variations of “Iran War Monitor” around February 27 and were used to distribute AI-generated war footage across multiple profiles, creating an illusion of independent confirmation.

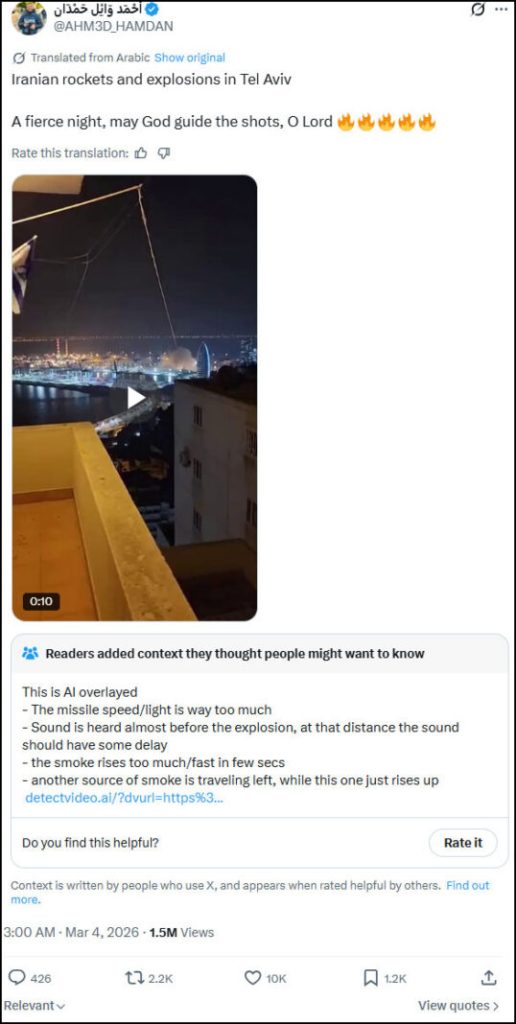

In one notable instance, a viral video purportedly showing Iranian rockets striking Tel Aviv gained significant traction before Community Notes identified several inconsistencies. Users pointed out unrealistic missile speed, premature explosion sounds, and unnatural smoke behavior that suggested artificial generation. The video originated from an account claiming to be a Gaza-based journalist named Ahmed Hamzan.

The challenge of combating such content stems from both technical and economic factors. Research has repeatedly found that sensational content spreads faster on social media platforms, with emotional triggers like explosions and destruction generating stronger engagement. Modern AI tools have made convincing war footage increasingly easy to create, allowing creators to produce realistic scenes of missile launches and urban destruction within minutes.

This problem is exacerbated by the economic incentives of social media platforms. X’s Creator Revenue Sharing program allows eligible users to earn a portion of advertising revenue generated by engagement on their posts, potentially rewarding those who produce provocative or misleading content that attracts attention.

“Digital platforms operate within an attention economy, where content that attracts more engagement becomes more valuable,” explained researcher Carlos Diaz Ruiz in a 2023 paper on disinformation. Algorithms that prioritize engagement can inadvertently create powerful incentives for sensationalism over accuracy.

The recent policy change represents X’s attempt to disrupt this dynamic by removing the financial motivation behind misleading war footage. By suspending monetization for those who fail to disclose AI-generated content, the platform hopes to reduce the economic incentive for creating and spreading synthetic war videos.

Industry experts note that while this is a step in the right direction, the battle against AI-driven misinformation continues to evolve rapidly. As AI tools become more sophisticated and accessible, the line between authentic and synthetic content grows increasingly blurred for average users.

X’s intervention highlights a growing recognition across the tech industry that the digital information space has become as strategically important as physical battlefields in shaping global perceptions of conflict. Whether such platform-level policies will be sufficient to counter the rising tide of synthetic media remains an open question in the evolving landscape of digital information warfare.

