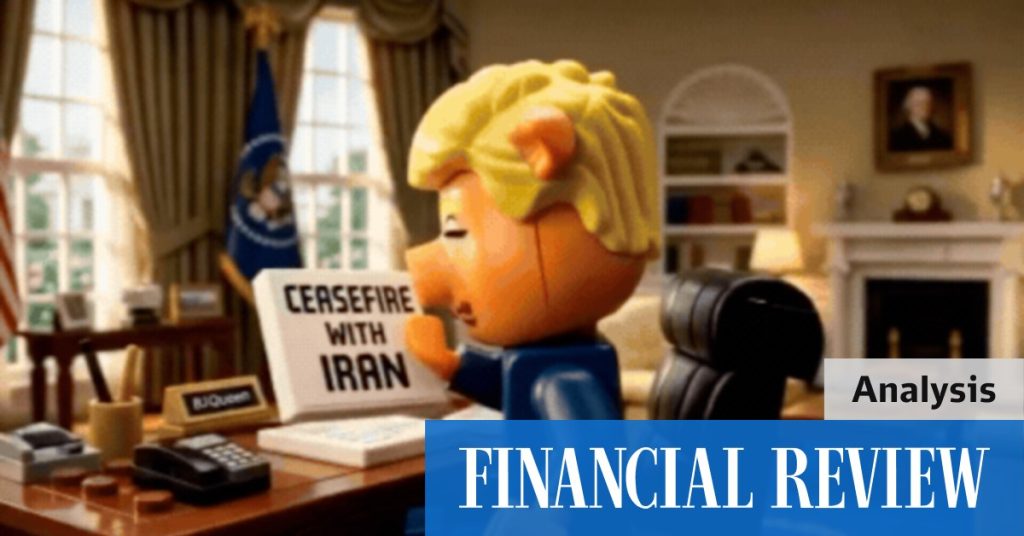

Iran is using AI-generated videos featuring Lego figures to spread anti-Western propaganda on social media platforms, marking a new frontier in digital information warfare.

Intelligence analysts and disinformation researchers have identified dozens of these animated videos on platforms including X, TikTok and YouTube. The content typically depicts American and Israeli leaders as villains in scenarios that align with Tehran’s geopolitical narratives.

The emergence of this technique, dubbed “slopaganda” by experts for its often crude production quality, demonstrates how artificial intelligence is making sophisticated propaganda tools accessible even to nations with limited resources.

“What we’re seeing is the democratization of propaganda creation,” said Dr. Emerson Collins, a digital intelligence analyst with the Centre for Information Resilience. “Five years ago, this kind of operation would have required significant resources and technical expertise. Now, with generative AI tools, a small team can produce dozens of videos weekly at minimal cost.”

The videos typically feature recognizable Lego-style characters representing Western leaders engaged in nefarious activities. One widely shared clip showed a figure resembling President Biden plotting against Iran with Israeli Prime Minister Benjamin Netanyahu, while another depicted U.S. officials celebrating attacks on civilian infrastructure in Gaza.

While the animations may appear crude to sophisticated viewers, they effectively target specific demographics, particularly younger social media users and populations in regions where anti-Western sentiment already exists. The content is often translated into multiple languages including Arabic, Turkish, Urdu, and Russian to maximize reach.

“These videos leverage existing cultural touchpoints—Lego is globally recognizable—to make complex geopolitical narratives more digestible,” explained Maya Winters, a senior fellow at the Digital Diplomacy Institute. “The playful, familiar aesthetic helps the content avoid immediate identification as propaganda.”

The Iranian campaign represents a broader trend of state actors adapting consumer AI tools for influence operations. The videos typically cost under $50 each to produce using commercially available AI animation software, according to technical analysis from cybersecurity firm Mandiant.

Western intelligence agencies have expressed concern about the increasing sophistication of these operations. A senior U.S. State Department official, speaking on condition of anonymity, confirmed that Iranian-backed digital influence campaigns have expanded significantly in 2023, with AI-generated content becoming a primary vector.

“What’s particularly challenging about this evolution is the speed at which content can be produced,” the official said. “When a major geopolitical event occurs, we now see tailored propaganda responses within hours, not days or weeks.”

Social media companies have struggled to moderate this content effectively. The videos often avoid explicit policy violations while still conveying misleading messages, which makes them difficult to flag for removal.

“These videos exist in a gray zone,” said Dr. Samira Patel, content policy director at a major social media platform. “They’re clearly political speech, which platforms are reluctant to restrict, but they also spread demonstrable falsehoods in visually compelling ways.”

Market analysts note that Iran’s embrace of AI propaganda techniques comes as the country faces increasing international isolation and economic pressure. The digital influence campaign provides Tehran with a relatively low-cost method to project power and shape narratives globally without military escalation.

The phenomenon also highlights how commercially available AI tools can be repurposed for information warfare. Several AI companies have implemented guardrails to prevent misuse of their technology, but determined state actors can often circumvent these protections.

Security experts warn that as AI technology continues to advance, the sophistication of such influence campaigns will likely increase. Future iterations may feature more realistic animations, seamlessly dubbed audio in multiple languages, and content tailored to specific audience segments based on data analytics.

“We’re just seeing the beginning of AI-powered propaganda,” cautioned Collins. “As these tools become more accessible and powerful, the ability to discern authentic content from manufactured narratives will become increasingly challenging for average citizens.”

Western governments and tech companies are now developing countermeasures, including enhanced content authentication systems and public media literacy campaigns. Experts acknowledge, however, that these efforts face significant challenges because the technology evolves so rapidly.

8 Comments

This is a sobering example of how AI can be leveraged for malicious purposes. The ability to produce slick-looking but misleading Lego videos on a shoestring budget is quite alarming. I worry about the long-term societal impacts of this type of targeted propaganda.

Wow, this is a new and concerning development in propaganda tactics. Using AI to cheaply produce Lego-style videos to spread anti-Western narratives is quite worrying. I wonder how effective these crude animations are at influencing public opinion.

You’re right, the accessibility of these tools is alarming. Even smaller nations can now create large volumes of sophisticated-looking propaganda with minimal resources. It will be important to closely monitor the spread and impact of these videos.

I’m curious to see how the international community responds to this new form of digital information warfare. Will social media platforms be able to effectively identify and remove these AI-generated Lego propaganda videos? Combating this kind of content will be a real challenge.

That’s a good point. Platforms will need to develop new detection methods to stay ahead of the AI-powered propaganda tools. It will be an ongoing battle to maintain information integrity online.

The democratization of propaganda creation is a concerning trend. While AI opens up new creative possibilities, bad actors can misuse these technologies to spread disinformation at scale. I hope this doesn’t lead to a further erosion of public trust in media and institutions.

As an investor focused on mining and energy, I’m curious to see how this new propaganda tactic might impact public sentiment around those industries, especially ones tied to geopolitical tensions. Careful analysis will be needed to distinguish fact from fiction.

Good point. These AI-generated videos could potentially sway public opinion on important natural resource and energy issues. Fact-checking and media literacy will be crucial for investors to navigate this landscape effectively.