
Top U.S. Financial Officials Warn Bank Executives About AI Cybersecurity Threats

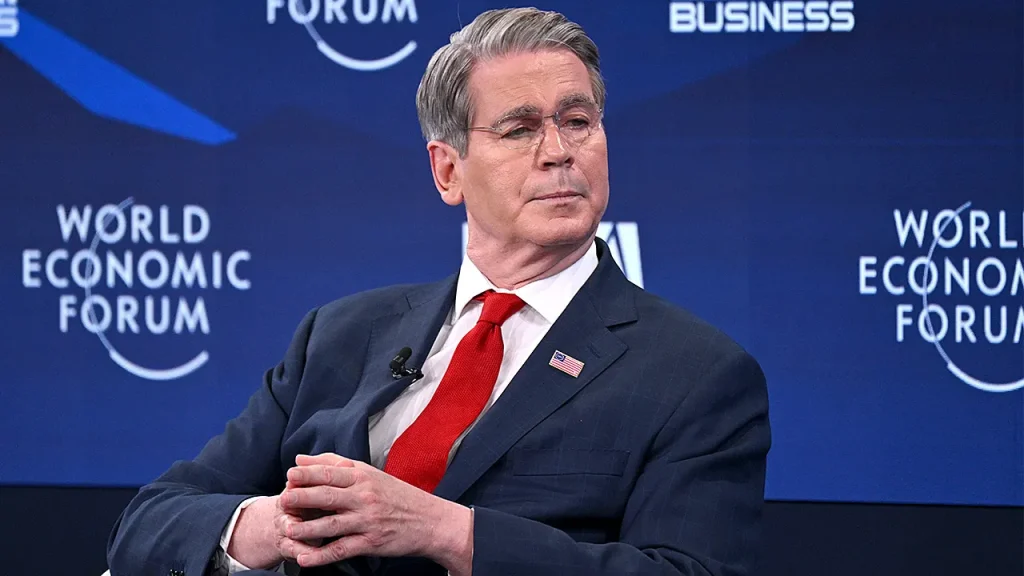

Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an urgent meeting with heads of major Wall Street banks on Tuesday to address potential cybersecurity risks posed by Anthropic’s new AI model, Claude Mythos Preview.

The high-level gathering, held at Treasury headquarters in Washington, D.C., included chief executives from Goldman Sachs, Citigroup, Morgan Stanley, Bank of America, and Wells Fargo, all institutions designated by the Federal Reserve as “systemically important” to the global financial system.

Bank of America CEO Brian Moynihan attended the meeting, according to a source familiar with his schedule. JPMorgan Chase CEO Jamie Dimon was also invited but could not attend, Bloomberg reported. Notably, JPMorgan participates in Anthropic’s “Project Glasswing,” an initiative using Mythos to defend against similar models.

The meeting centered on Anthropic’s new “frontier model,” which the company claims can autonomously identify and exploit software vulnerabilities. According to Anthropic, Mythos can outperform “all but the most skilled humans at finding and exploiting software vulnerabilities” and has already uncovered thousands of previously unknown software flaws, including decades-old vulnerabilities in companies widely considered security strongholds.

“This could make cyberattacks of all kinds much more frequent and destructive, and empower adversaries of the United States and its allies,” Anthropic stated in a blog post. “Addressing these issues is therefore an important security priority for democratic states.”

A source close to Anthropic told Fox News Digital that the company has briefed senior U.S. government officials about Mythos, though the source did not specify which agencies received these briefings.

The hastily arranged meeting reflects growing concern among financial regulators about the potential systemic risks posed by advanced AI systems to critical financial infrastructure. Banking systems contain vast amounts of sensitive customer data and handle trillions in transactions daily, making them prime targets for sophisticated cyberattacks.

The financial sector has increasingly embraced AI technologies for fraud detection, algorithmic trading, and customer service, but this integration creates new vulnerabilities that could be exploited by advanced systems like Mythos.

Anthropic’s relationship with the U.S. government has become increasingly complicated in recent months. The company was once a key partner of the U.S. military, with a $200 million Pentagon contract secured in July 2025, but the partnership deteriorated in February after Anthropic established boundaries against using its technology for autonomous weapons and domestic surveillance.

After issuing an ultimatum, Secretary of War Pete Hegseth designated Anthropic as a supply chain risk, effectively barring federal contractors from using its products. Anthropic’s attempt to appeal this designation was rejected by a federal appeals court just one day before the Treasury meeting.

Acting Attorney General Todd Blanche celebrated the court’s decision, stating, “Today’s D.C. Circuit stay allowing the government to designate Anthropic as a supply chain risk is a resounding victory for military readiness. Our position has been clear from the start — our military needs full access to Anthropic’s models if its technology is integrated into our sensitive systems.”

The Treasury meeting underscores the delicate balance officials must strike between harnessing AI’s benefits and mitigating its potential risks to national security and economic stability. As these advanced models become more powerful, financial regulators appear increasingly concerned about ensuring adequate safeguards are in place across the banking system.

Neither the Department of Treasury nor the Federal Reserve Board responded to requests for comment on the meeting.


13 Comments

This emergency meeting highlights the need for continued collaboration and information-sharing between policymakers, security experts, and technology companies to stay ahead of evolving AI-related risks.

It’s encouraging to see major banks taking part in initiatives like Anthropic’s Project Glasswing to defend against similar AI models. Public-private partnerships will be key to mitigating these emerging threats.

The potential for AI-driven attacks on financial systems is a serious concern that deserves close attention. Proactive measures to assess and address vulnerabilities are a wise investment.

The involvement of high-profile officials like Bessent and Powell underscores the gravity of the situation. Cybersecurity threats from advanced AI systems are a growing risk for the entire financial ecosystem.

Agreed, this is likely just the beginning of more regulatory scrutiny and collaboration to address AI-related risks across industries.

Interesting to see regulators taking AI cybersecurity risks seriously, especially for major financial institutions. Proactive measures are crucial as advanced AI models continue to evolve.

Absolutely, the potential for AI-driven attacks on financial systems is a major concern that requires close attention from policymakers and industry leaders.

This emergency meeting highlights the need for robust AI governance frameworks to ensure responsible development and deployment of powerful technologies like Anthropic’s Mythos model.

I agree, getting ahead of the curve on managing AI risks is essential, especially for critical infrastructure sectors like finance.

This development raises important questions about the appropriate balance between innovation and risk management when it comes to powerful AI technologies. Ongoing dialogue between policymakers, technologists, and industry leaders will be crucial.

Definitely, finding that balance will be critical as these advanced AI models continue to improve and proliferate.

As Anthropic’s Mythos model demonstrates, the ability of AI to autonomously identify and exploit software vulnerabilities is a game-changer. Regulators are right to be vigilant and engage with industry leaders on this issue.

Absolutely, the potential for AI-powered cyberattacks is a major threat that requires a coordinated response from both the public and private sectors.