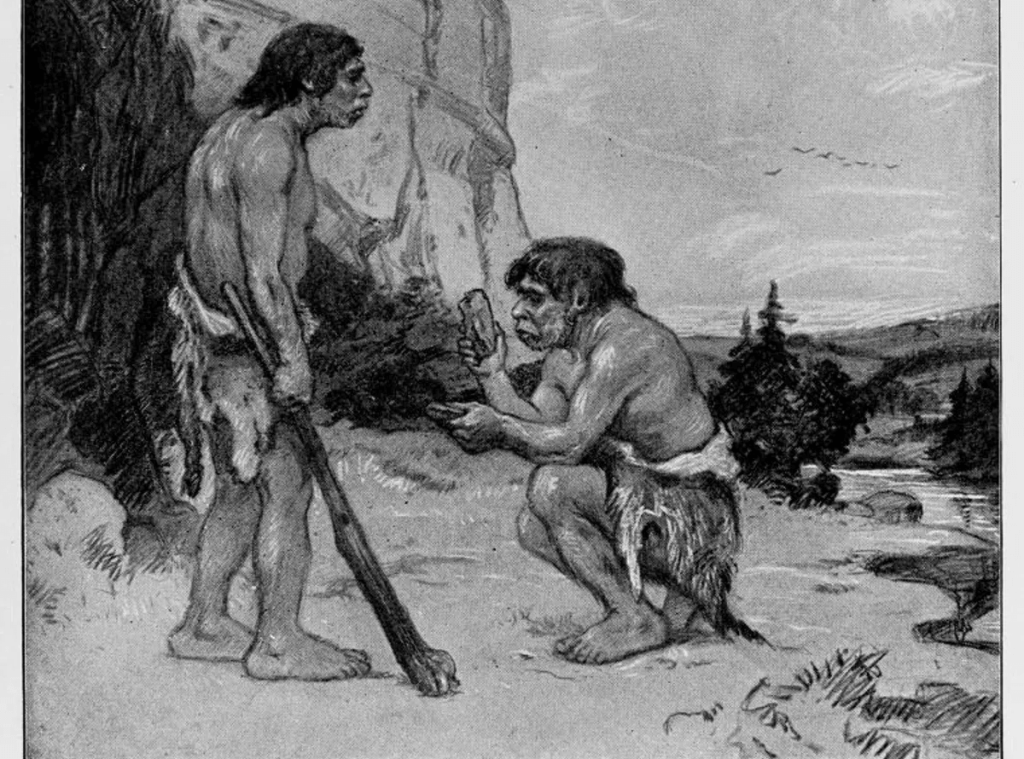

The popular image of Neanderthals as hairy, hunched brutes may still feel familiar, but according to new research, artificial intelligence systems are perpetuating these outdated stereotypes about our ancient relatives.

A study recently published in Advances in Archaeological Practice shows how AI text and image generators such as ChatGPT and DALL-E produce markedly inaccurate representations of Neanderthals that contradict modern scientific understanding.

Researchers Matthew Magnani of the University of Maine and Jon Clindaniel of the University of Chicago tested these AI systems by prompting them to describe and illustrate Neanderthals. What they received were depictions that would have been more at home in the early 20th century than in contemporary scientific literature.

“A majority of images depict human-like figures, slightly stooped, with large quantities of body hair. These depictions have more in common with early twentieth-century drawings of Neanderthals than contemporary scientific knowledge,” the researchers noted in their study.

Modern paleontological and genetic evidence shows that Neanderthals were far more similar to modern humans than these outdated characterizations suggest. They had sophisticated cultures, made advanced tools, likely produced symbolic objects and art, and interbred with Homo sapiens. As a result of these ancient encounters, many people alive today carry Neanderthal DNA.

The gap between AI-generated content and scientific reality highlights a broader problem with how these systems learn. Generative AI models typically operate as "black boxes," offering little transparency about their training data. It is widely understood, however, that they learn largely from material scraped from the internet.

This creates an inherent problem for scientific accuracy. While some scholarly articles are published open access, many remain behind paywalls, which makes them less likely to end up in AI training data. The most readily available material tends to be older, popularized content that can perpetuate outdated scientific ideas.

Dr. Oren Kolodny, an evolutionary biologist not involved in the study, explained the implications: “The internet is saturated with older depictions of Neanderthals that align with early misunderstandings about their physiology and cognitive abilities. When AI systems prioritize this more abundant content, they inevitably reproduce these misconceptions.”

The issue extends beyond simple historical inaccuracy. As AI systems become increasingly integrated into educational tools, search engines, and creative applications, their portrayal of scientific concepts can shape public understanding. Misinformation about human evolution could influence everything from science education to cultural perceptions.

The researchers suggest this problem isn’t limited to Neanderthals but likely extends to many scientific and historical topics where understanding has evolved significantly in recent decades. The challenge is particularly acute in rapidly developing fields where newer research might be underrepresented in easily accessible online content.

This study arrives amid growing concerns about AI’s potential to spread misinformation. While much attention has focused on deliberate falsehoods, this research highlights how AI systems can inadvertently perpetuate outdated scientific concepts simply through their training methodology.

Some solutions may involve more deliberate curation of training data for AI systems, especially for educational applications. Others suggest that embedding explicit timestamps on AI-generated information could help users understand when content might be based on outdated sources.

For archaeologists and anthropologists, the findings underscore the importance of making current research more accessible and visible online. Digital archives and open-access initiatives could help ensure that AI systems encounter more accurate, up-to-date information about human evolution and other scientific topics.

As AI continues to develop and become more integrated into everyday information sources, addressing these challenges will be crucial for ensuring these powerful tools advance, rather than hinder, scientific understanding.
