Indian Minister Sounds Alarm on Deepfake Threats, Calls for Platform Accountability

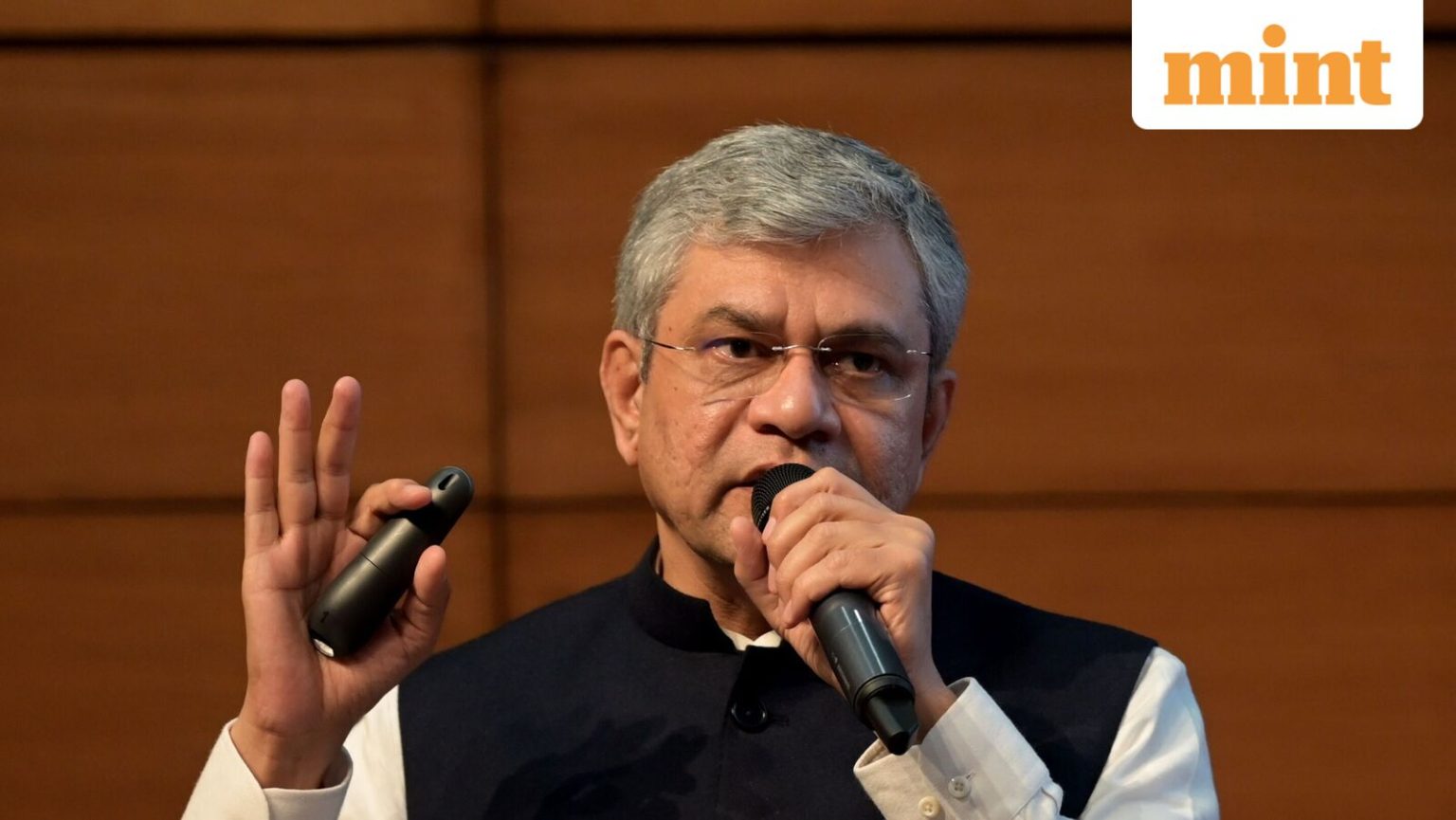

Union Minister for Information and Broadcasting Ashwini Vaishnaw has issued a stark warning about the growing menace of deepfakes and coordinated disinformation campaigns, emphasizing that these technologies pose a significant risk to public trust worldwide. Speaking at the Digital News Publishers Association (DNPA) Conclave 2026, Vaishnaw stressed that digital platforms must take responsibility for the content they host.

“The threat is coming from so many different angles – deepfakes – which can make you believe things that have never happened anyway,” Vaishnaw stated. He further cautioned about ongoing misinformation campaigns designed to manipulate public perception, adding, “Disinformation barrage – which can cause a sense of distrust that doesn’t exist in real life.”

The minister expressed particular concern about synthetic content featuring respected public figures, noting the alarming trend of “creating synthetically generated pictures of people well respected in society, creating videos which have absolutely no correlation with reality.”

According to Vaishnaw, the rapid advancement of technology has enabled the production and dissemination of manipulated content at an unprecedented scale. What were once isolated incidents of misinformation have evolved into a widespread, systemic problem threatening societal foundations.

The minister emphasized that digital platforms play a critical role in upholding trust in institutions built over millennia. He asserted that these platforms bear responsibility not only for the content they host but also for ensuring online safety, particularly for children and vulnerable users. Failure to meet these responsibilities, he warned, would make platforms accountable for the consequences.

“The time has come to make that big inflectional change. I request the platforms to cooperate with this human society’s basic needs. The society which is today asking for this change has to be respected,” Vaishnaw urged.

Highlighting the importance of consent in AI-generated content, the minister stated that such material should not be created without permission from individuals whose faces, voices, or personalities are used. This stance aligns with India’s recent moves to strengthen regulation of artificial intelligence applications.

Vaishnaw contextualized his concerns within a broader framework of institutional trust, explaining that human society relies on trust in various institutions, from family and social structures to the judiciary, the media, and the legislature. Using media as an example, he pointed out that its credibility hinges on impartiality, thorough verification of information, and accountability for published content.

The minister’s comments come as digital manipulation technologies become increasingly sophisticated and accessible. Recent incidents of deepfakes targeting public figures in India have raised significant concerns about their potential to disrupt democratic processes, damage reputations, and spread misinformation during critical events like elections.

The DNPA Conclave, where Vaishnaw delivered his address, brings together policymakers, media executives, and industry experts to explore emerging trends in digital journalism and artificial intelligence. The event covers topics ranging from regulatory frameworks and newsroom transformation to content monetization strategies in an AI-driven media landscape.

DNPA Chairperson Mariam Mammen Mathew emphasized the importance of collaboration in addressing these challenges, noting that AI is fundamentally reshaping the news industry. She called for publishers, policymakers, and platforms to work together to create a framework based on trust and responsibility.

As India continues to develop its digital regulatory framework, Vaishnaw’s statements signal the government’s increasing focus on holding technology platforms accountable for the content they disseminate and the potential societal impact of emerging technologies like AI-generated deepfakes.

9 Comments

Deepfakes and coordinated disinformation campaigns pose a significant risk to democratic processes and social cohesion. Robust safeguards and transparency measures are essential to protect the public.

As digital technologies continue to advance, the threat of deepfakes and misinformation will only grow. Proactive and adaptive measures are needed to stay ahead of this rapidly evolving challenge.

Deepfakes and misinformation pose a serious threat to public trust. The Indian IT Minister is right to sound the alarm and call for greater platform accountability. It’s a complex challenge that requires vigilance and a multi-faceted approach.

The Indian IT Minister’s call for greater platform accountability is a welcome development. Platforms must be held responsible for the content they host and the impact it has on public discourse.

Synthetic content featuring respected public figures is particularly concerning. Manipulating people’s perceptions in this way can have far-reaching consequences. Platforms need to take decisive action to address this growing problem.

Agreed. Platforms have a responsibility to ensure the integrity of the information they host. Proactive measures to detect and remove deepfakes and coordinated disinformation campaigns are crucial.

The minister’s concern about the ‘sense of distrust that doesn’t exist in real life’ is particularly troubling. Restoring public trust will require concerted efforts to counter misinformation and strengthen the credibility of information sources.

The minister’s warning about the ‘disinformation barrage’ is a sobering reminder of the scale of the challenge. Rebuilding public trust will require a concerted, collaborative effort between government, tech companies, and civil society.

Absolutely. Tackling this issue will require a multi-stakeholder approach. Clear guidelines, robust detection tools, and user education will all be important components of an effective strategy.