As Middle East conflict escalates, experts warn of AI-generated misinformation

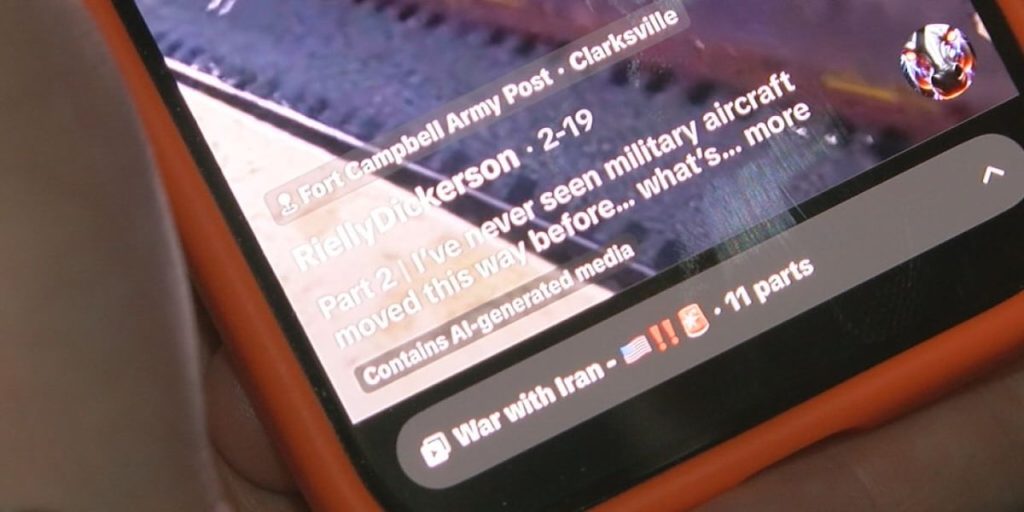

As fighting intensifies across the Middle East, social media platforms are being flooded with dramatic images and videos purporting to show real-time battle scenes and missile strikes. However, cybersecurity experts are warning that many of these seemingly authentic visuals may actually be artificially generated.

In the aftermath of recent U.S. and Israeli attacks on Iran, a significant surge in fake and misleading content has emerged online. Fact-checkers around the world, including experts in Birmingham, are raising concerns about fabricated explosions, staged missile strikes, and manufactured scenes of chaos that are spreading rapidly and reaching millions of users.

Dr. Ragib Hassan, Professor of Computer Science and Director of the UAB Center for Cyber Security at the University of Alabama at Birmingham, explains that technological advancements have made distinguishing between authentic and artificial content increasingly challenging.

“These days, it has become very hard to detect AI-generated images and videos because of the advances in AI,” Hassan said. The sophistication of today’s technology represents a dramatic shift from just a year ago, when AI-generated content often contained telltale flaws that made it easier to identify.

Previously, artificial images typically featured subtle but recognizable errors—distorted fingers, unnatural lighting patterns, or inconsistent shadows—that allowed viewers to identify them as fake. Today’s AI technology, however, can create near-perfect visuals from simple text prompts, eliminating many of these identifying markers.

“With advances in AI models and all these different tools that are out there and available to everyone, it’s very easy to create these videos from simple prompts,” Hassan noted. “Most of the AI platforms allow you to write a prompt and create very realistic videos from that.”

The accessibility of these powerful tools presents a significant challenge in the information ecosystem surrounding global conflicts. Misinformation can now spread at the same rate as—or even faster than—verified reporting. A single convincing video, such as footage appearing to show a fighter jet evading missile attacks, can accumulate thousands of shares before viewers question its authenticity.

While major social media platforms have implemented measures to flag or label AI-generated content, these efforts often fall short. Hassan emphasizes that technology alone cannot effectively combat this problem, and critical thinking remains the most important defense against misinformation.

“Common sense is the best defense against AI-generated videos,” Hassan advised. “If you see something that doesn’t look real or if you see something that clearly is serving the purpose of someone spreading misinformation, then most likely that is AI-generated content.”

This problem is particularly acute during times of international conflict, when emotional responses may override critical assessment of content. The Middle East situation has created fertile ground for misinformation, with various actors potentially using fake content to influence public opinion or advance political narratives.

Media literacy experts suggest several strategies for verifying content before sharing. These include checking if the same footage appears on established news networks, looking for inconsistencies in lighting or physics, and researching the original source of the content. Reverse image searches can also help determine if a supposed “current” image actually originated months or years earlier.

Hassan strongly recommends that social media users rely on verified news outlets and cross-check information across multiple credible sources before sharing dramatic images or videos online. This practice becomes increasingly important as the technology behind fake content grows more sophisticated.

As the overseas conflict continues to unfold, experts emphasize that the battle over information on social media platforms is occurring with equal intensity—and every share carries a responsibility to prevent the spread of misinformation.

In this new reality of sophisticated AI-generated content, the burden of verification increasingly falls on individual users to approach dramatic wartime footage with healthy skepticism and responsible sharing practices.
