AI Detection Tools for Fighting Fake News Fall Short, Experts Say

Journalists battling the rising tide of AI-generated fake news are increasingly turning to detection tools, but research indicates these technologies have significant limitations that undermine their reliability in real-world scenarios.

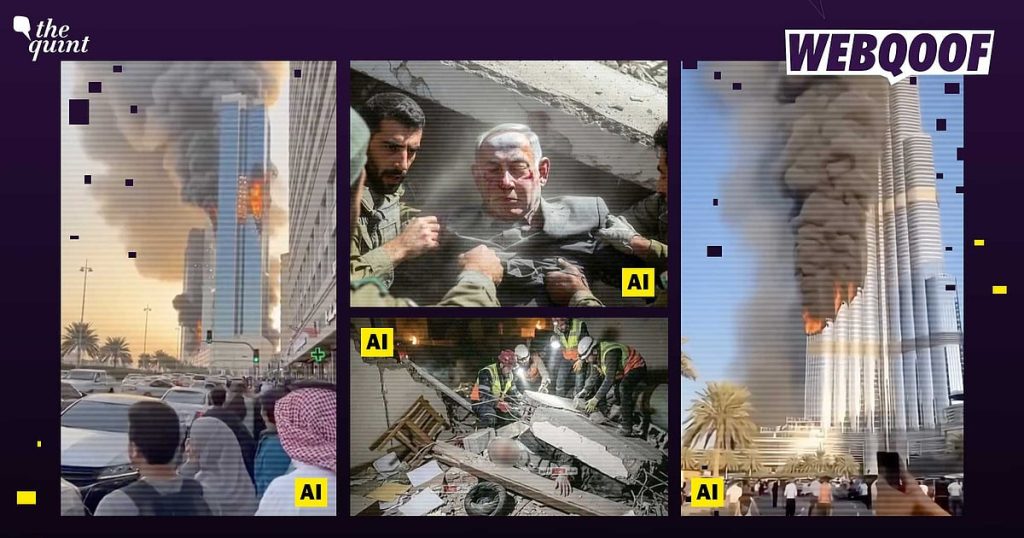

The fact-checking team at WebQoof employs a variety of AI detection platforms including Deepfake-O-Meter, Hive Moderation, AI or Not, Sightengine, Was It AI, and Contrails AI. However, each tool demonstrates specific weaknesses when confronted with different types of manipulated content.

Contrails, developed by a Bengaluru-based startup, has shown promise in identifying deepfake videos but struggles considerably when analyzing other forms of AI-generated content (AIGC). Similarly, tools like Deepfake-O-Meter frequently fail to identify fabricated videos of supposed missile strikes or bombing incidents that regularly go viral across social media platforms.

A doctoral study conducted by Dorsaf Sallami at the University of Montreal’s Department of Computer Science and Operations Research highlights a concerning disparity between laboratory results and real-world performance. Her research reveals that while these detection tools often demonstrate impressive accuracy rates in controlled testing environments, their effectiveness diminishes dramatically when confronted with the complexity and variability of actual misinformation campaigns.

“These systems are essentially probabilistic in nature,” explains a digital forensics expert familiar with the research. “They make educated guesses based on patterns they’ve learned from their training data. This means they’re inherently limited by the quality, diversity, and biases present in that data.”

One of the fundamental issues is that detection tools function more as “mirrors” of their training datasets than as true arbiters of fact. This creates significant vulnerabilities when the tools encounter content that differs from their training examples or addresses emerging topics for which they lack reference data.

The limitations become particularly evident when these systems analyze nuanced or evolving news stories. A detection tool trained on certain patterns of manipulation may completely miss newer techniques or misclassify legitimate content that shares superficial similarities with known fake patterns.

“We’re seeing an arms race between fake content generators and detection tools,” notes a cybersecurity analyst at a leading tech policy institute. “As detection methods improve, so do the techniques for creating more convincing fakes. Unfortunately, the detection technology currently lags behind.”

The reliability challenge extends to context understanding. AI detection tools typically analyze content in isolation, without the broader contextual awareness that human fact-checkers employ. This fundamental limitation leads to both false positives—flagging genuine content as fake—and false negatives—missing sophisticated manipulated material.
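To make the two error types concrete, here is a minimal Python sketch of how a fact-checking team might measure them on a small set of items it has already verified by hand. The function name `error_rates` and the sample data are hypothetical, invented for illustration; they do not come from any of the tools or studies mentioned above.

```python
from typing import Dict, Sequence


def error_rates(is_fake: Sequence[bool], flagged: Sequence[bool]) -> Dict[str, float]:
    """Compare a tool's flags against hand-verified ground truth.

    is_fake[i] is True when item i was confirmed as manipulated.
    flagged[i] is True when the detection tool marked item i as fake.
    """
    if len(is_fake) != len(flagged):
        raise ValueError("both sequences must have the same length")

    pairs = list(zip(is_fake, flagged))
    # False positive: genuine content flagged as fake.
    false_pos = sum(1 for truth, flag in pairs if flag and not truth)
    # False negative: manipulated content the tool missed.
    false_neg = sum(1 for truth, flag in pairs if truth and not flag)

    genuine_total = sum(1 for truth in is_fake if not truth)
    fake_total = sum(1 for truth in is_fake if truth)

    return {
        "false_positive_rate": false_pos / genuine_total if genuine_total else 0.0,
        "false_negative_rate": false_neg / fake_total if fake_total else 0.0,
    }


# Hypothetical sample: 4 confirmed fakes followed by 6 genuine items.
truth = [True, True, True, True, False, False, False, False, False, False]
flags = [True, True, False, False, True, False, False, False, False, False]
rates = error_rates(truth, flags)
print(rates)
```

In this made-up sample the tool misses two of the four fakes and wrongly flags one of the six genuine items. A small in-house check like this says nothing about how a tool behaves on content unlike the sample.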

Media literacy experts emphasize that these technical shortcomings make AI detection tools insufficient as standalone solutions for combating misinformation. Instead, they recommend a multi-layered approach that combines technological tools with human expertise and critical thinking.

“These tools should be viewed as one component in a larger verification toolkit,” suggests a journalism professor specializing in digital media. “They can provide useful signals, but journalists and fact-checkers need to supplement them with traditional verification methods, source analysis, and contextual evaluation.”

For news organizations and fact-checking operations, the implications are clear: while AI detection tools offer valuable assistance, their outputs require careful interpretation and should never be the sole basis for determining authenticity.
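One way to put that principle into practice is to treat tool scores purely as triage signals. The sketch below assumes each tool reports a score between 0 and 1 for "likely AI-generated"; the tool names, thresholds, and the `triage` function are all hypothetical and would need tuning against a newsroom's own verified cases. Every outcome routes the item to human verification rather than issuing a verdict.

```python
from statistics import mean
from typing import Mapping


def triage(
    scores: Mapping[str, float],
    low: float = 0.2,        # assumed threshold, not taken from any real tool
    high: float = 0.8,       # assumed threshold, not taken from any real tool
    max_spread: float = 0.3, # assumed limit on disagreement between tools
) -> str:
    """Turn several detector scores into a review priority, never a verdict."""
    if not scores:
        raise ValueError("at least one score is required")

    values = list(scores.values())
    spread = max(values) - min(values)
    average = mean(values)

    # Large disagreement between tools is itself a signal worth a closer look.
    if spread > max_spread:
        return "tools disagree: prioritize manual verification"
    if average >= high:
        return "likely synthetic: confirm with manual verification"
    if average <= low:
        return "no strong signal: verify through usual methods"
    return "inconclusive: manual verification needed"


# Hypothetical scores from two placeholder tools.
print(triage({"tool_a": 0.90, "tool_b": 0.85}))
print(triage({"tool_a": 0.95, "tool_b": 0.10}))
```

The design choice here is that no branch returns "authentic" or "fake": the scores only decide how urgently a human fact-checker looks at the item.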

As misinformation continues to spread through increasingly sophisticated means, the journalism industry faces mounting pressure to develop more robust verification systems. Researchers are calling for greater transparency from detection tool developers about specific limitations and error rates, which would allow fact-checkers to make more informed decisions about when and how to incorporate these technologies into their workflows.

Until more reliable detection methods emerge, the frontline defense against AI-generated fake news remains a combination of technological assistance, journalistic expertise, and public media literacy—a multi-faceted approach that acknowledges both the promise and the significant limitations of current AI detection capabilities.

