TikTok Under Scrutiny for Manipulating Content in Favor of Chinese Government Interests

New research suggests TikTok systematically suppresses content critical of China while potentially shaping American users’ perceptions of the country’s human rights record and appeal as a tourist destination.

A comprehensive three-part study conducted by researchers from multiple institutions reveals troubling patterns in how TikTok’s algorithm handles content related to sensitive topics for the Chinese Communist Party (CCP), including Tibet, Tiananmen Square, Uyghur rights, and Xinjiang.

The investigation, which compared content across TikTok, Instagram, and YouTube, found that TikTok consistently served up significantly less content critical of China than other platforms while flooding searches with irrelevant material that distracted users from sensitive issues.

“When we searched for terms like ‘Uyghur’ or ‘Tiananmen’ on TikTok, we found dramatically less critical content about China’s human rights issues compared to identical searches on Instagram or YouTube,” said lead researcher Lee Jussim. “This pattern was consistent across all four search terms we examined.”

In the first study, researchers created new teen user accounts across all three platforms and analyzed the content returned from searches on sensitive topics. While Instagram and YouTube returned substantial amounts of content critical of China’s human rights record, TikTok searches yielded far more irrelevant content and significantly less material critical of the Chinese government.

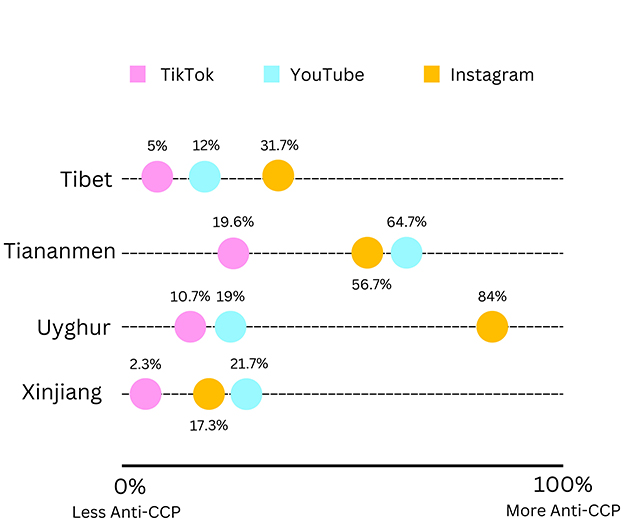

For instance, when searching “Tiananmen,” only 19.6% of TikTok results contained content critical of China, compared to 56.7% on Instagram and 64.7% on YouTube. Similarly, searches for “Uyghur” on TikTok returned just 10.7% critical content versus 84% on Instagram.
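To put those percentages in proportion, the short Python sketch below divides each platform's share of critical results by TikTok's share for the same search term. It uses only the figures quoted above. The names `critical_share` and `ratio_to_tiktok` are illustrative and do not come from the study, and the article gives no YouTube figure for "Uyghur", so none is included.

```python
# Share of search results critical of China, in percent, as quoted in the article.
# No YouTube figure is quoted for "Uyghur", so it is left out rather than guessed.
critical_share = {
    "Tiananmen": {"TikTok": 19.6, "Instagram": 56.7, "YouTube": 64.7},
    "Uyghur": {"TikTok": 10.7, "Instagram": 84.0},
}


def ratio_to_tiktok(term):
    """Return each other platform's critical share as a multiple of TikTok's share."""
    shares = critical_share[term]
    base = shares["TikTok"]
    return {
        platform: round(share / base, 1)
        for platform, share in shares.items()
        if platform != "TikTok"
    }


for term in critical_share:
    print(term, ratio_to_tiktok(term))
```

By this rough measure, a “Tiananmen” search surfaced about three times as large a share of critical content on Instagram and YouTube as on TikTok, and a “Uyghur” search surfaced nearly eight times as large a share on Instagram.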

The second study analyzed user engagement with content across platforms and uncovered an even more concerning pattern. Despite TikTok users showing significantly higher engagement with content critical of China – through likes and comments – the platform’s algorithm continued to promote pro-China content while suppressing critical material.

“This inverts what we’d expect from a purely commercially driven algorithm,” explained researcher Daniel Finkelstein. “If TikTok were simply trying to maximize engagement, we’d see more critical content because that’s what users are engaging with. Instead, we’re seeing the opposite.”

The researchers found that while users engaged with anti-CCP content nearly four times more than with pro-CCP content on TikTok, the platform’s search algorithm produced nearly three times as much pro-CCP content in results.

The third study surveyed 1,214 American adults about their social media usage and perceptions of China. After controlling for demographic factors and usage of other platforms, researchers found that heavier TikTok use was significantly associated with more positive views of China’s human rights record and greater likelihood of viewing China as a desirable travel destination.

“The correlation between TikTok usage and favorable attitudes toward China was stronger than for any other platform we examined,” said researcher Jessica Zucker. “This relationship held even when controlling for age, political affiliation, ethnicity, gender, and use of other social media platforms.”

The findings come amid growing concerns about ByteDance, TikTok’s Chinese parent company, and potential CCP influence over the platform. Recent reporting by NBC News cited evidence that a Chinese government company holds a 1% “golden share” in ByteDance, which includes board seats and special privileges.

While the researchers acknowledge limitations in their study – including its exploratory nature and the subjective judgment involved in content classification – the findings add to a growing body of evidence suggesting TikTok may function as a vehicle for CCP propaganda.

“These studies raise legitimate concerns about algorithmic manipulation and free inquiry,” Jussim said. “The pattern of suppressing critical information while promoting favorable narratives suggests an approach to information control that aligns with known CCP propaganda strategies.”

The researchers emphasize that their findings don’t conclusively prove direct CCP control over TikTok but demonstrate patterns consistent with a platform being used to advance Chinese government interests through subtle content manipulation rather than overt censorship.

The study recommends greater transparency in social media algorithms and calls for researchers and policymakers to develop better methods for detecting when platforms may be subverting free expression without user consent.

10 Comments

As someone who follows news and analysis on China and global affairs, I find these findings very troubling. If TikTok’s algorithm is indeed suppressing content critical of the Chinese government, that would be a significant breach of the platform’s responsibility to provide users with accurate, unbiased information. This needs to be addressed.

Absolutely. The integrity of information flows online is crucial, especially when it comes to sensitive geopolitical issues. Platforms must be held accountable for any systematic biases or manipulation in their content curation.

The researchers’ findings about TikTok’s handling of content related to China are quite alarming. If true, it would suggest a troubling pattern of information manipulation that could have serious consequences for how young Americans perceive global affairs. This warrants further investigation and scrutiny.

I agree. The potential for social media platforms to shape public opinion, especially among impressionable young users, is a major concern that deserves close examination. Transparency and accountability are critical in this space.

This study highlights the need for greater scrutiny of social media platforms and their potential role in information manipulation. The findings about TikTok’s handling of content related to sensitive topics in China are quite troubling. I hope this leads to further investigation and accountability.

I agree. Platforms like TikTok wield a lot of power when it comes to shaping public perceptions, and that power should come with greater responsibility and oversight.

As someone who uses TikTok, I’m concerned to hear about these findings. If the algorithm is systematically suppressing certain types of content, that could have significant ramifications for how users form their views on important geopolitical issues. I hope TikTok addresses this transparently.

You’re absolutely right. Platforms need to be upfront about their content moderation policies and the potential biases in their algorithms. Anything less undermines trust and the integrity of online discourse.

Interesting research on how TikTok’s algorithm may be shaping users’ perceptions of China. I wonder if this is intentional manipulation or just a reflection of the platform’s broader content curation policies. Either way, it’s concerning if it’s leading to a skewed understanding of important human rights issues.

You raise a good point. Platform algorithms can have unintended consequences when it comes to information access and public discourse. Transparency around these systems is crucial.