
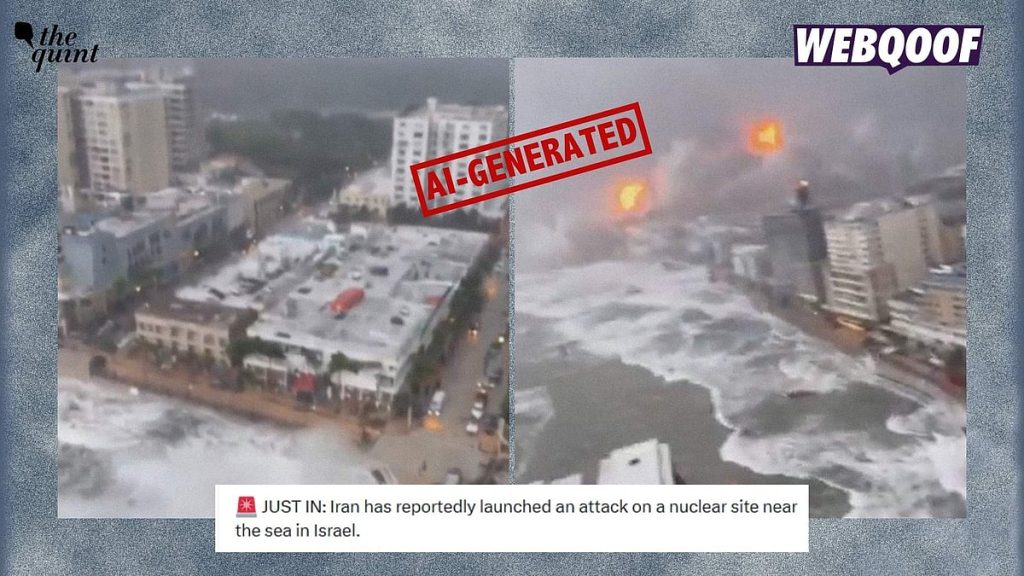

FACT CHECK: AI-Generated Video Falsely Claims to Show Iranian Attack on Israel

A viral video purporting to show Iranian missile attacks on Israel is AI-generated, our fact-checking team has determined using several technical verification methods.

The widely shared footage, which depicts dramatic scenes of explosions and waves crashing into buildings along what appears to be a coastline, contains numerous technical inconsistencies that reveal its synthetic nature.

Our investigation began with a frame-by-frame analysis of the clip. We extracted multiple screenshots and ran reverse image searches on them using Google Lens. The searches returned no matching authentic footage and no credible source that could confirm the video’s authenticity.

Further technical examination focused on detecting signs of artificial generation. The footage did not contain SynthID, the watermark Google embeds in content generated by its AI tools, but it displayed several telltale indicators of AI production that experts recognize as red flags.

Most notably, the video exhibits what technical analysts call “morphing textures” – subtle, unnatural movements and patterns in background elements like water and sky that don’t follow the laws of physics. This phenomenon is a common artifact in AI-generated videos where the algorithm struggles to maintain consistent rendering of dynamic natural elements.

“The behavior of debris falling and the way waves interact with buildings defies natural physics,” noted our technical analyst. “These inconsistencies are typically invisible to casual viewers but stand out clearly during forensic video analysis.”

To confirm these observations, our team processed the footage through DeepFake-o-Meter, a specialized tool designed to detect artificially generated visual content. The tool classified the video as AI-generated rather than authentic footage.

The timing of this fabricated content is particularly concerning because it coincides with heightened tensions between Iran and Israel. Recent weeks have seen legitimate concerns about escalation following diplomatic and military developments in the region. Misinformation of this nature can distort public perception and heighten anxiety in an already volatile situation.

This incident highlights the growing sophistication of AI-generated content and the challenges it poses for information integrity during geopolitical conflicts. As generative AI technology becomes more accessible, distinguishing between authentic and synthetic media grows increasingly difficult for average viewers.

Media literacy experts recommend several practices when encountering dramatic footage online, especially during times of international tension: check if established news organizations have verified and reported the content; look for unusual visual artifacts like unnatural lighting, strange movements, or inconsistent textures; and be particularly skeptical of dramatic footage that emerges without clear attribution to journalists or witnesses on the ground.

The proliferation of such synthetic content underscores the importance of robust fact-checking mechanisms and public awareness about the capabilities of generative AI. As tensions continue in the Middle East, distinguishing between factual reporting and synthetic misinformation becomes even more crucial for informed public discourse.

If you encounter suspicious content online and wish to have it verified, our fact-checking team welcomes submissions for analysis. Readers can forward questionable content through our website or email for professional verification.


5 Comments

Interesting that this video was flagged as AI-generated. I wonder how the experts were able to identify the technical inconsistencies that revealed its synthetic nature. It’s important to be vigilant about verifying the authenticity of online content, especially when it depicts such dramatic events.

Yes, that’s a good point. With the increasing sophistication of AI-generated media, it’s crucial that we have rigorous fact-checking processes in place to catch these kinds of fabrications.

It’s disturbing to see how advanced AI technology has become in creating such realistic yet fabricated footage. I appreciate the efforts of the fact-checkers to thoroughly investigate and expose this video as synthetic. We must remain vigilant against the growing threat of deepfakes and other forms of digital manipulation.

This is a concerning development, as the ability to create such convincing yet false footage could be exploited for malicious purposes. I’m glad the fact-checkers were able to identify the technical flaws that exposed the video as AI-generated. We need to stay vigilant against the spread of disinformation.

Absolutely. The proliferation of AI-generated content is a real challenge for maintaining the integrity of online information. Robust fact-checking is essential to combat the spread of these fabrications.