In an era where artificial intelligence increasingly blurs the line between human and machine-generated content, institutions are grappling with how to reliably identify AI-authored material. New research from the University of Chicago’s Booth School of Business offers a methodical approach to this growing challenge.

Organizations ranging from educational institutions to publishing houses and employers have legitimate reasons to distinguish between human and AI-created work. However, the current generation of AI detection tools presents a significant dilemma: they remain imperfect, creating real risks of falsely flagging human work as machine-generated.

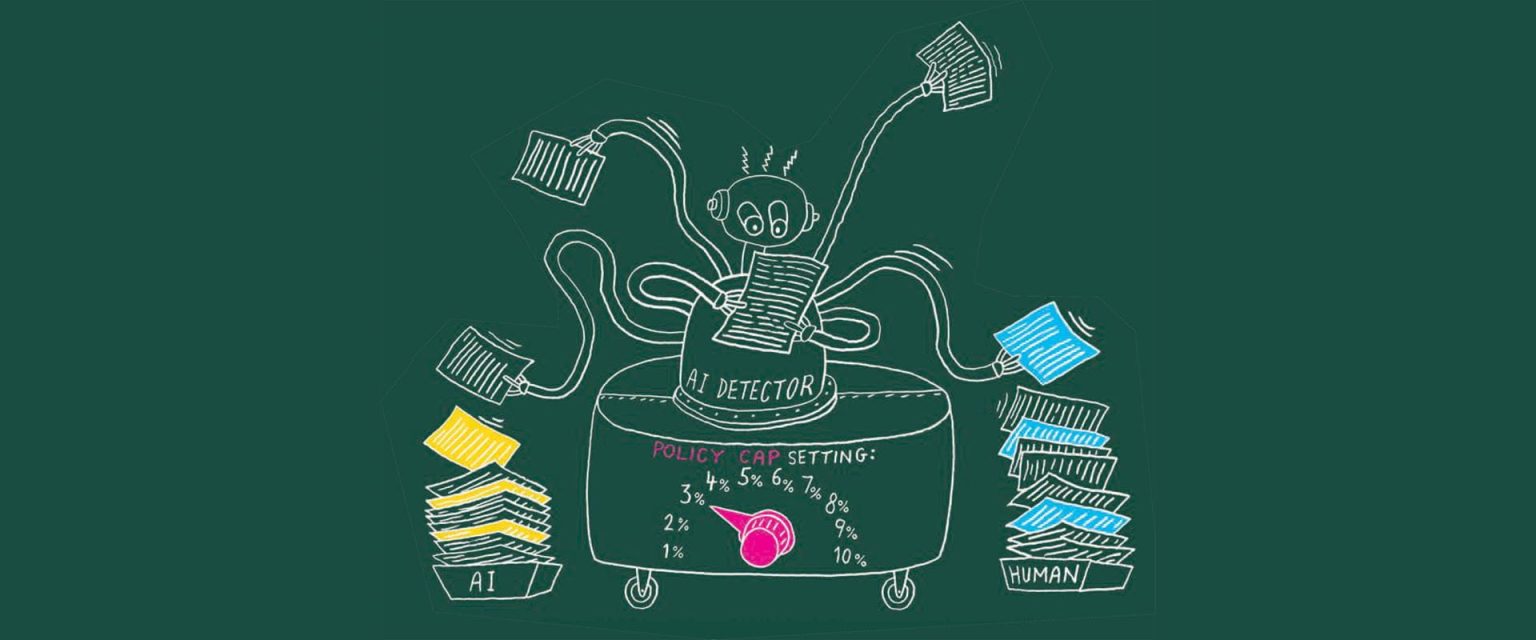

To address this concern, Chicago Booth principal researcher Brian Jabarian and colleague Alex Imas have developed what they call the “policy cap” method. This approach provides institutions with a practical framework for implementing AI detection systems while minimizing harmful false accusations.

The methodology begins with a fundamental decision: determining the maximum acceptable rate of false positives an institution can tolerate. This policy cap serves as the cornerstone of the entire detection system, establishing clear boundaries before any technology is deployed.

Once the cap is established, detection tools can be calibrated accordingly. For a given detector, the institution sets a cutoff score at which the share of human-written texts scoring above it does not exceed the policy cap. Any text scoring above the cutoff is then flagged as AI-generated, and the false-positive rate stays within the organization’s acceptable margin of error.
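The calibration step can be illustrated with a minimal sketch. It assumes the institution holds a sample of detector scores for texts known to be human-written, and that higher scores mean "more likely AI"; the function names `calibrate_cutoff` and `false_positive_rate` and all score values are hypothetical and are not taken from the paper.

```python
def calibrate_cutoff(human_scores, policy_cap):
    """Pick a cutoff so the share of human texts scoring above it stays within policy_cap.

    Assumption: a text is flagged when its score is strictly greater than the cutoff.
    """
    if not human_scores:
        raise ValueError("need at least one human-written sample")
    if not 0.0 <= policy_cap <= 1.0:
        raise ValueError("policy_cap must be between 0 and 1")
    ranked = sorted(human_scores)
    n = len(ranked)
    # Largest number of human texts that may be flagged without exceeding the cap.
    allowed = int(policy_cap * n)
    if allowed >= n:
        # The cap tolerates flagging every human text, so any cutoff works.
        return float("-inf")
    # At most `allowed` scores lie strictly above this value, even with ties.
    return ranked[n - allowed - 1]


def false_positive_rate(human_scores, cutoff):
    """Share of known-human texts that would be flagged at this cutoff."""
    flagged = sum(1 for score in human_scores if score > cutoff)
    return flagged / len(human_scores)


# Hypothetical detector scores for ten known-human texts.
human_scores = [0.05, 0.10, 0.12, 0.20, 0.25, 0.30, 0.41, 0.55, 0.72, 0.90]
cutoff = calibrate_cutoff(human_scores, policy_cap=0.10)
print(cutoff, false_positive_rate(human_scores, cutoff))
```

With a 10% cap and these ten illustrative scores, the cutoff lands at 0.72, so only the single highest-scoring human text would be flagged. A real calibration would need a much larger and more representative sample.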

Beyond setting this initial boundary, the researchers recommend institutions carefully analyze the corresponding false-negative rate—the percentage of AI-generated content the detector fails to identify at the chosen threshold. This additional layer of assessment helps ensure the system isn’t missing too many instances of machine-created text while attempting to minimize false accusations.
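As a rough illustration of this check, the sketch below computes the false-negative rate at a given cutoff. It assumes a sample of detector scores for texts known to be AI-generated and a flagging rule of "score strictly above the cutoff"; the function name, the cutoff value, and the scores are all hypothetical.

```python
def false_negative_rate(ai_scores, cutoff):
    """Share of known AI-generated texts the detector misses at this cutoff.

    Assumption: a text is flagged only when its score is strictly above the cutoff,
    so anything at or below the cutoff counts as a miss.
    """
    if not ai_scores:
        raise ValueError("need at least one AI-generated sample")
    missed = sum(1 for score in ai_scores if score <= cutoff)
    return missed / len(ai_scores)


# Hypothetical cutoff already chosen to respect the institution's policy cap.
cutoff = 0.72
# Hypothetical detector scores for five known AI-generated texts.
ai_scores = [0.35, 0.60, 0.75, 0.80, 0.95]
fnr = false_negative_rate(ai_scores, cutoff)
print(f"missed {fnr:.0%} of AI-generated texts at cutoff {cutoff}")
```

In this made-up sample, two of the five AI-generated texts score at or below the cutoff, so the detector misses 40% of them. A strict cap on false accusations can carry that kind of cost in missed detections.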

The elegance of the policy cap approach lies in its flexibility and comparative value. Institutions can apply this method regardless of which detection tool they employ, allowing them to maintain consistent standards despite the wide variety of detection algorithms available on the market. Furthermore, the framework enables meaningful performance comparisons between different detection systems, something previously difficult due to the diverse methodologies underpinning various detection technologies.
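One way to picture such a comparison is sketched below: two hypothetical detectors that report scores on different scales are each calibrated to the same policy cap, then ranked by how much AI-generated text they miss. The detector names, helper functions, and scores are illustrative assumptions, not the researchers' implementation or data.

```python
def cutoff_for_cap(human_scores, policy_cap):
    """Cutoff at which the share of human texts scoring strictly above it is within the cap."""
    ranked = sorted(human_scores)
    allowed = int(policy_cap * len(ranked))  # human texts that may be flagged
    if allowed >= len(ranked):
        return float("-inf")
    return ranked[len(ranked) - allowed - 1]


def miss_rate(ai_scores, cutoff):
    """Share of AI-generated texts scoring at or below the cutoff (not flagged)."""
    return sum(1 for score in ai_scores if score <= cutoff) / len(ai_scores)


def compare_detectors(detectors, policy_cap):
    """Calibrate each detector to the same cap and report its cutoff and miss rate."""
    report = {}
    for name, (human_scores, ai_scores) in detectors.items():
        cutoff = cutoff_for_cap(human_scores, policy_cap)
        report[name] = {
            "cutoff": cutoff,
            "false_negative_rate": miss_rate(ai_scores, cutoff),
        }
    return report


# Hypothetical scores: detector_a reports 0-1, detector_b reports 0-100.
detectors = {
    "detector_a": (
        [0.05, 0.10, 0.12, 0.20, 0.25, 0.30, 0.41, 0.55, 0.72, 0.90],  # human texts
        [0.35, 0.60, 0.75, 0.80, 0.95],                                # AI texts
    ),
    "detector_b": (
        [2, 5, 8, 11, 15, 19, 24, 30, 38, 61],  # human texts
        [33, 45, 52, 70, 88],                   # AI texts
    ),
}

report = compare_detectors(detectors, policy_cap=0.10)
best = min(report, key=lambda name: report[name]["false_negative_rate"])
for name, stats in report.items():
    print(name, stats)
print("lowest miss rate at this cap:", best)
```

Because both detectors are held to the same false-positive limit, their raw score scales no longer matter. The remaining difference is the miss rate, which makes the two tools directly comparable.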

This research arrives at a critical moment for academic institutions, publishers, and businesses worldwide. As generative AI tools like ChatGPT, Claude, and Bard become more sophisticated, traditional methods of verifying authorship grow increasingly inadequate. Schools have reported surges in suspected AI-written assignments, while publishers face mounting concerns about the authenticity of submitted manuscripts.

The policy cap approach doesn’t solve all detection problems, as even the best current detection tools still have significant error rates. However, it does provide organizations with a structured way to deploy these technologies responsibly, with full awareness of the statistical limitations and risks involved.

The researchers detail their findings in a paper titled “Do AI Detectors Work Well Enough to Trust?” which examines both the technical performance of current detection systems and the ethical implications of their use in institutional settings.

As AI continues to evolve, the challenge of distinguishing between human and machine-generated content will likely intensify. The Chicago Booth researchers’ work suggests that rather than pursuing perfect detection, which may remain elusive, institutions might benefit from adopting transparent, statistically grounded policies that acknowledge the inherent uncertainties in the detection process.

8 Comments

Distinguishing AI-generated from human-created content is a growing challenge, so this research from Chicago Booth is timely. The policy cap framework provides a structured way for institutions to manage the trade-offs and risks involved. Curious to learn more about the practical application and real-world results.

Agree, the policy cap concept appears to offer a more nuanced approach compared to binary AI/human categorization. Implementing it effectively will require careful calibration by each institution based on their specific needs and risk tolerance.

Interesting approach to tackling the challenges of AI-generated content. Establishing a clear policy cap on acceptable false positives seems like a prudent way to balance the need for accuracy with avoiding unfair accusations. Curious to see how this methodology is implemented in practice across different institutions.

Yes, the policy cap framework appears to provide a more nuanced and flexible solution compared to relying solely on current AI detection tools. I’m interested to learn more about the specifics of the approach and how it aims to improve on existing methods.

Maintaining institutional integrity in the face of AI-generated content is a critical issue. This research from the University of Chicago offers a thoughtful, data-driven framework to help organizations navigate this complex challenge. The focus on minimizing harmful false positives is an important consideration.

Agree, the false positive risk is a key concern that needs to be carefully managed. It will be interesting to see how widely this policy cap methodology is adopted and whether it proves effective in practice across different sectors.

The policy cap approach seems like a reasonable step towards more accurate AI detection, though the details of implementation will be crucial. Institutions will need to strike the right balance between setting appropriate thresholds and ensuring fair treatment of human-generated work. Looking forward to seeing how this evolves.

This is a complex issue without easy solutions, so the methodical approach outlined in this research is welcome. Establishing an acceptable false positive rate as the foundation for AI detection systems seems like a prudent way to balance accuracy and fairness. Looking forward to seeing how this gets adopted and refined over time.