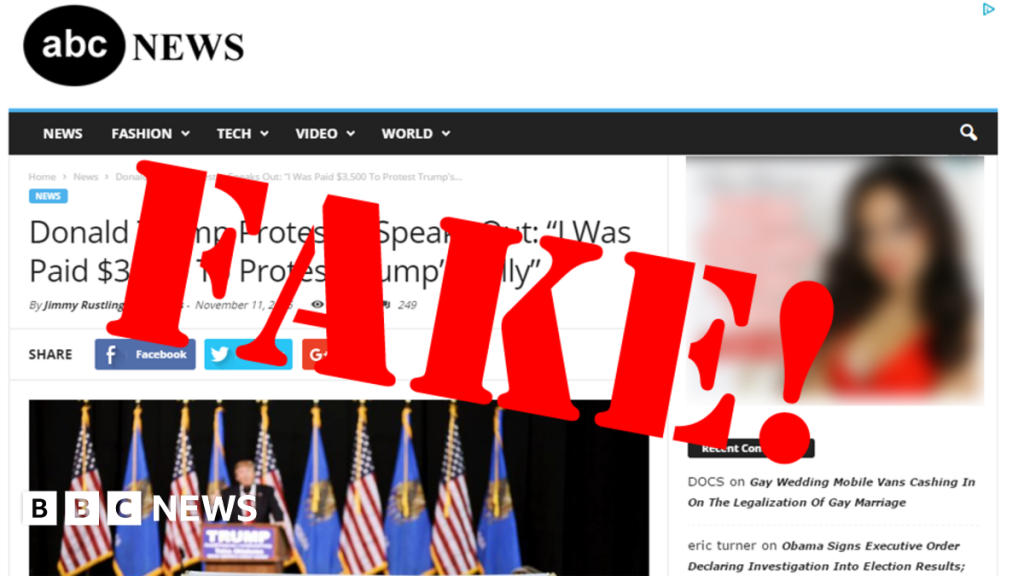

Fake news stories circulating online have become a pressing concern in recent weeks, with particular scrutiny following the U.S. election. While Facebook, Google, and Twitter have faced the brunt of public criticism, numerous other popular platforms maintain their own mechanisms for users to flag misleading content, with varying degrees of effectiveness and commitment.

The proliferation of misinformation online has evolved from a niche concern into a mainstream issue with significant real-world consequences. Recent studies suggest that, during the election cycle, false news stories received more engagement on social media platforms than stories from legitimate news outlets, raising alarms about their potential impact on public opinion and democratic processes.

Facebook, which reaches over 2.7 billion monthly active users globally, has faced particularly intense scrutiny. The platform recently announced expanded efforts to combat misinformation, including partnerships with third-party fact-checkers and changes to its algorithm designed to reduce the visibility of content deemed misleading. These measures come after years of criticism that the company’s policies allowed fake news to flourish unchecked.

Google has similarly changed its search algorithms and advertising policies. The tech giant now prioritizes authoritative sources in search results during breaking news events and has cut off websites that spread misinformation from earning revenue through its AdSense network. These adjustments aim to reduce the economic motivation for creating fake news content.

Twitter, with its real-time nature and capacity for rapid information spread, introduced labels for misleading content and warnings on tweets containing disputed claims during the election period. The platform has also begun prompting users to read articles before retweeting them, a subtle intervention designed to encourage more thoughtful sharing behaviors.

Lesser-discussed platforms like Reddit, YouTube, and Instagram have their own approaches to combating fake news. Reddit relies heavily on community moderation, while YouTube has adjusted its recommendation algorithm to reduce promotion of borderline content. Instagram has expanded its fact-checking program to include misleading images and memes, recognizing that visual misinformation can be equally damaging.

Industry experts point out that the varying effectiveness of these measures reflects the different challenges each platform faces. “Each social media ecosystem has its own unique vulnerabilities to misinformation,” explains Dr. Claire Wardle, co-founder of First Draft, a non-profit focused on tackling misinformation. “What works for Twitter might not work for YouTube, and platforms need tailored solutions.”

For users concerned about misinformation, there are several practical steps to take. Most major platforms provide mechanisms to report false content, though the processes differ. On Facebook, users can click the three dots in the top right corner of any post and select “Report” followed by “False Information.” Twitter allows reporting through the dropdown menu on tweets, while YouTube provides a flag icon under videos.

Beyond reporting, media literacy experts recommend verifying information before sharing it. This includes checking the source’s credibility, cross-referencing with other reliable sources, and being particularly cautious about emotionally charged content designed to provoke outrage.

The challenge of combating fake news extends beyond individual platforms to the broader digital media ecosystem. Dr. Samantha Bradshaw, a researcher at the Oxford Internet Institute, notes that “misinformation often travels across multiple platforms in coordinated campaigns, making platform-specific solutions insufficient on their own.”

Regulatory approaches vary globally, with some countries implementing strict laws against misinformation while others prioritize freedom of expression. This patchwork of regulations creates additional challenges for global platforms operating across different jurisdictions.

As fake news grows more sophisticated, platforms face mounting pressure to develop more effective countermeasures. The coming months will likely see further refinements to content moderation policies, greater transparency about algorithmic decision-making, and potentially new collaborative efforts between platforms to address this shared challenge.
