Fake Account Networks Systematically Manipulate Global Election Discourse, Report Finds

Sophisticated networks of fake accounts are systematically manipulating public opinion during elections worldwide, according to a comprehensive report recently released by Israeli data analysis company Cybra. The report, titled “Digital Information War, How to Change Elections,” reveals concerning patterns of coordinated efforts to sway voter sentiment through social media.

Cybra, founded by Israeli information and cybersecurity experts, specializes in analyzing misinformation and public opinion manipulation in social media environments using AI-based digital intelligence. Their investigation examined nine countries: the Philippines, Taiwan, Norway, Germany, Poland, Australia, Portugal, Singapore, and South Korea.

The findings show that manipulation tactics have evolved beyond simply spreading false information. Instead, organized networks now work strategically to shape entire online discourses around candidates and electoral processes.

In the 2022 Philippine general election, fake accounts made up between 37% and 45% of the discourse surrounding the two main political coalitions. Discourse around the opposition alliance “KiBam” drew particular scrutiny: there, bot networks disguised as young political enthusiasts repeatedly deployed identical hashtags, generating an estimated 54.2 million potential impressions.

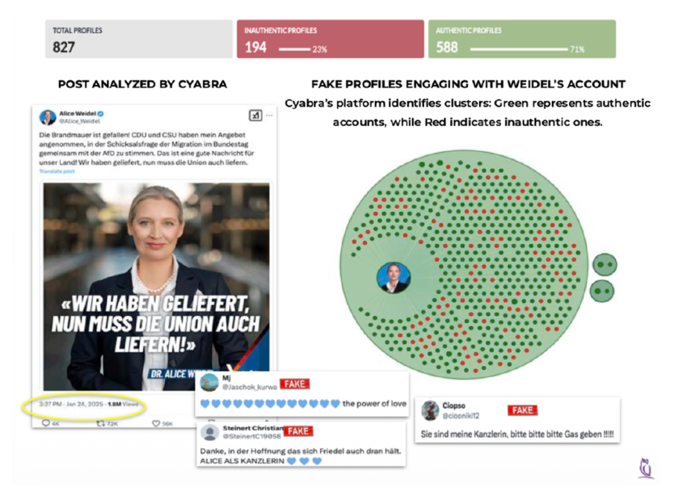

The German federal election revealed similar patterns. Posts related to Alice Weidel, co-leader of the far-right AfD, reached a potential audience of 126 million. Analysis showed that 33% of accounts engaging in these discussions were fake, as were 23% of positive comments about Weidel. These artificial accounts repeatedly circulated supportive messages like “a leader who will save Germany’s future” and “hope for Germany.”

A hallmark of modern opinion manipulation is the interconnected nature of these operations. The report highlights that fake accounts no longer operate independently but function as coordinated networks that systematically repeat identical messages and hashtags.
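To illustrate the signal described above, identical messages repeated across many accounts, here is a minimal Python sketch. It is not Cybra's method, which the article does not describe; the function name `find_coordinated_groups`, the `min_accounts` threshold, and the sample posts are all hypothetical and chosen only for illustration.

```python
from collections import defaultdict


def find_coordinated_groups(posts, min_accounts=3):
    """Group posts by normalized text and keep texts shared by many accounts.

    posts: iterable of (account_id, text) pairs (hypothetical input shape).
    min_accounts: assumed threshold; a real system would tune this.
    """
    accounts_by_text = defaultdict(set)
    for account_id, text in posts:
        # Normalize case and whitespace so trivial variations still match.
        key = " ".join(text.lower().split())
        accounts_by_text[key].add(account_id)

    # Keep only messages posted by at least `min_accounts` distinct accounts.
    return {
        text: sorted(accounts)
        for text, accounts in accounts_by_text.items()
        if len(accounts) >= min_accounts
    }


# Invented sample data, not taken from the report.
sample_posts = [
    ("acct_a", "Vote now #SampleTag"),
    ("acct_b", "vote now   #sampletag"),
    ("acct_c", "Vote Now #SampleTag"),
    ("acct_d", "I read the party platform today."),
]

groups = find_coordinated_groups(sample_posts)
print(groups)
```

Exact-match grouping like this would catch only the crudest repetition. Genuine supporters also reuse slogans and hashtags, so a match is a prompt for closer review rather than proof that an account is fake.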

“These networks strategically occupy comment sections to create a false impression of the political atmosphere,” the report explains. Another common pattern is the prioritization of emotional content: messages that trigger fear, ridicule, anger, and disgust spread more rapidly than policy discussions or factual information.

South Korea faces similar challenges, with approximately 29% of 1,454 analyzed accounts classified as fake and 14% specifically spreading electoral distrust. Messages questioning election integrity, alleging foreign interference, and claiming political system manipulation have proliferated on platforms like TikTok and X (formerly Twitter).

Further location-based analysis of Korean accounts found 30% were fake, with 33% dedicated to spreading negative messaging. Cybra warns that domestic manipulation efforts in South Korea appear to be shifting from targeting specific candidates to undermining trust in the electoral system itself.

With South Korean local elections approaching later this year, Cybra Korea expressed particular concern about potential manipulation. Key risk factors include the amplification of regional conflicts, character assassination campaigns against candidates, AI-generated content and deepfakes, and growing electoral system distrust.

Local elections may be especially vulnerable to manipulation because they intersect with emotionally charged issues, including development, the environment, real estate, and transportation. Such issues create fertile ground for emotional framing that coordinated disinformation campaigns can exploit.

“The modern information war has transformed into a battle that undermines what can be trusted rather than simply arguing about what is true,” a Cybra representative stated. The company recommends strengthening real-time monitoring cooperation between election management agencies, platform companies, and analysis firms, alongside enhancing digital literacy among voters.

As AI technology continues to advance, the challenge of distinguishing genuine public opinion from artificially manufactured sentiment presents a growing threat to electoral integrity worldwide.

10 Comments

I’m curious to learn more about the specific tactics used by these fake account networks. What were the most common methods they employed to sway public opinion? Exposing their playbook could help us develop better countermeasures.

Yes, understanding their techniques in detail is key. The report should provide valuable insights that election officials and tech platforms can use to enhance their election security efforts.

This report highlights the urgent need for greater platform accountability and transparency. Social media companies must do more to detect, disrupt and remove coordinated networks of fake accounts.

I agree. Platforms need to be held responsible for the spread of disinformation on their networks and take proactive steps to secure their systems against these manipulation tactics.

This is a global issue that needs a global response. Coordinated international cooperation and information sharing will be crucial to effectively combat these sophisticated disinformation campaigns across borders.

Absolutely. Tackling foreign interference in elections requires a united, multilateral approach. Sharing best practices and intelligence between countries will strengthen our collective defense.

The findings are deeply troubling, but not entirely surprising. Foreign actors have been exploiting social media to interfere in elections for years. We must stay vigilant and keep strengthening our defenses.

This is deeply concerning. Foreign interference in elections through fake accounts is a serious threat to democratic processes. We need robust measures to combat this, including improved platform transparency and accountability.

I agree, these coordinated manipulation tactics are extremely worrying. More transparency and stronger safeguards are crucial to protect the integrity of elections.

Systematic manipulation of public discourse around elections is a threat to democracy itself. We must remain vigilant and continue investing in tools and policies that uphold the integrity of the democratic process.