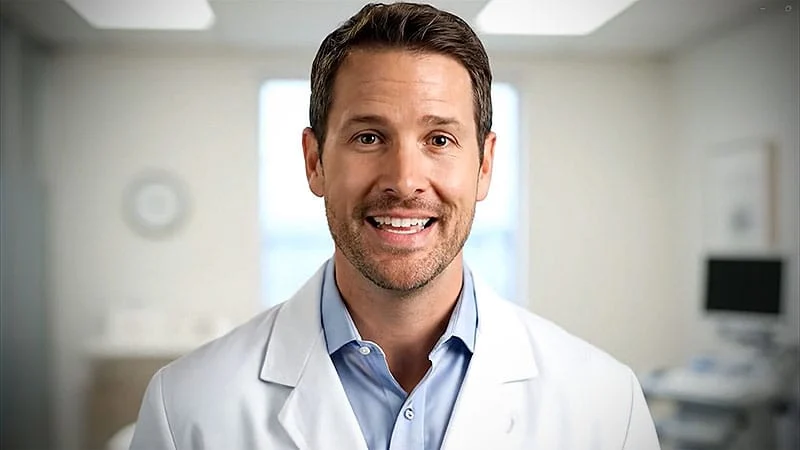

The rise of AI-generated medical influencers has sparked growing concerns as deepfake doctors proliferate across social media platforms, deceiving viewers with false medical advice and dubious product promotions.

Nonprofit organization Media Matters recently identified eight TikTok accounts featuring suspected deepfake doctor videos. These follow earlier incidents documented in a BMJ investigation that found deepfakes of prominent UK physicians being used to promote questionable products. Australia’s Baker Heart and Diabetes Institute also warned about fabricated videos showing their doctors endorsing diabetes supplements.

“What strikes me is how convincing these synthetic doctors are, with the visuals combined with compelling but potentially fake narratives,” said Ash Hopkins, PhD, associate professor of medicine and public health at Flinders University. “The potential for harm is concerning because when misinformation is presented in this form, it can be difficult to distinguish that it is not coming from a genuine healthcare professional.”

The recent explosion in medical deepfakes reflects both technological advances and predictable exploitation patterns. As Hopkins notes, referencing Stuart Russell’s book “Human Compatible,” if a technology like AI can be misused for financial gain, it likely will be.

For scammers, the appeal is straightforward: leveraging the inherent trust people place in medical professionals. By creating deepfake doctors, they can add a veneer of credibility to misleading health claims, miracle cures, or supplement promotions. These tactics particularly target vulnerable individuals with limited health literacy or those skeptical of conventional healthcare.

Daniel S. Schiff, PhD, co-director of the Governance and Responsible AI Lab at Purdue University, confirms this is part of a broader trend of AI-generated content designed to deceive consumers across multiple sectors.

The technology behind these deepfakes has evolved rapidly since around 2018. Early versions used generative adversarial networks, systems in which one neural network generates images while a second tries to tell them apart from real ones. The field expanded dramatically when text-to-image generators such as DALL-E, Midjourney, and Stable Diffusion arrived between 2021 and 2022.

“We’re at a point where neural networks trained on massive datasets can produce extremely detailed and realistic visuals, including mimicking human faces and facial expressions,” Hopkins explained. “When combined with large language models that generate coherent text and speech, the result is AI avatars that can speak, emote, and move with remarkable realism.”

What makes the situation particularly concerning is the increasing accessibility of these tools. Christopher Doss, PhD, senior policy researcher at RAND Corporation, points out that creating deepfakes no longer requires specialized knowledge or expensive equipment. Simple applications have made the technology available to anyone with a smartphone or basic computer.

Medical deepfakes represent only one aspect of AI avatars entering healthcare. Companies like Hippocratic AI and Mediktor now offer virtual healthcare providers for tasks ranging from follow-up care to symptom assessment. While these aren’t deepfakes—patients know they’re interacting with AI—they raise their own set of concerns about quality of care and workforce impacts.

In January, National Nurses United, a union representing more than 225,000 registered nurses, organized protests against AI adoption that lacks adequate staffing and patient safeguards.

Detecting deepfake medical content remains challenging even for the tech-savvy. A RAND Corporation study found that deepfake videos about climate change deceived between 27% and 50% of viewers—numbers Doss believes would be higher today given rapid technological advancements.

Human biases further complicate detection. A June 2025 study in Frontiers in Public Health revealed that people tend to trust older-looking AI doctor avatars more than younger ones, reflecting common age biases in healthcare.

Rather than relying on visual cues to spot deepfakes, experts recommend focusing on verification. “If you can’t find anything about that doctor other than that video, then that’s probably a clue that doctor may not exist,” advised Doss.

Schiff agrees: “When you see something pop up on social media for something high stakes—a medicine or a cure or whatever it is—instead, go to a reliable medical source.”

Addressing the problem requires multiple approaches. Hopkins advocates for built-in transparency measures like watermarks or explicit disclosures on AI-generated content. However, social media platforms have been reluctant to proactively label such content.

“Social media companies generally are backing away from preemptively labeling these things,” Doss noted, “so it really is becoming more incumbent on the person themselves to protect themselves because it does not seem like social media companies are seeing that as part of their core responsibility at this moment.”

As technology continues advancing, cultivating healthy skepticism and strong digital literacy skills remains the most effective defense against medical misinformation in this new era of synthetic doctors.
