Purported Epstein Video Identified as AI-Generated, Experts Say

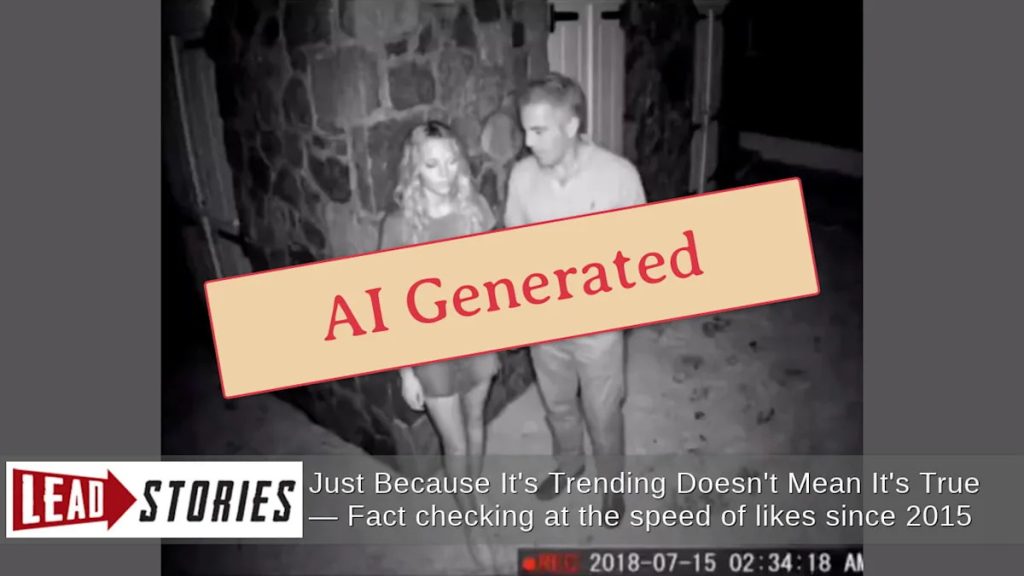

A video circulating on social media that allegedly shows Jeffrey Epstein kissing a young woman has been debunked as artificially generated content. Digital forensics experts and AI detection tools have identified multiple telltale signs of fabrication in the footage that has gained traction across platforms.

The video first appeared on April 8, 2026, when an account named @Unfiltered_Q posted it on X (formerly Twitter) with the caption “Epstein File 172/1000” and “Another details unlocked,” suggesting it was part of a larger cache of previously unreleased materials related to the disgraced financier.

When run through Hive Moderation's AI-generated content detection tool on April 9, the footage was flagged as 93.1% “likely to be AI-generated,” a score consistent with what visual inspection had already suggested to digital media experts.

Several technical anomalies betray the video’s artificial nature. Most notably, the date and time stamp visible in the corner of the footage warps and distorts from frame to frame, a common artifact in AI-generated content, which often fails to render small details consistently. The overall poor quality appears deliberate, likely an attempt to mask other digital artifacts that would make the fabrication more obvious.

“These kinds of distortions are red flags for synthetic media,” explained Dr. Hannah Morgan, a digital forensics analyst at the Center for Media Integrity, who reviewed the footage independently. “When AI generates video, it often struggles with maintaining consistency in small details like timestamps or background elements.”

The emergence of this falsified content comes amid continued public interest in the Epstein case. Jeffrey Epstein, who died in a federal jail in New York in August 2019 while awaiting trial on sex trafficking charges, remains the subject of intense speculation, conspiracy theories, and legitimate investigative journalism regarding his connections to powerful figures and alleged crimes.

Had such explosive footage been authentic and officially released through legal channels, it would have triggered immediate coverage across major news organizations. Searches of news aggregators, including Google News and Yahoo! News, turned up no corroborating reports from credible sources about this purported video’s release or existence.

Reverse image searches conducted using both Google Images and TinEye failed to connect the footage to any legitimate source or earlier authentic version, instead revealing only other social media posts spreading the same fabricated content.

The spread of such convincing but false material highlights growing concerns about AI-generated misinformation. As artificial intelligence tools become more sophisticated and accessible, distinguishing between authentic and synthetic media grows increasingly challenging for average users.

“This is particularly dangerous when it involves high-profile cases like Epstein’s,” noted Dr. Morgan. “The emotional reaction these topics generate can override critical thinking, making people more likely to believe and share content without verification.”

Digital literacy experts recommend several steps for verifying potentially suspicious content: checking if mainstream news sources have reported the development, looking for unusual visual glitches or inconsistencies, and using reverse image search tools to trace content to its original source.
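The three checks above can be written down as a simple checklist. The Python sketch below is illustrative only: the class, field, and function names are hypothetical, and the answers are entered by hand by whoever does the checking. It does not call any detection tool or search service, and a list of red flags is a prompt for further verification, not proof of fabrication.

```python
from dataclasses import dataclass


@dataclass
class VerificationChecks:
    """Hand-entered answers to the three checks; all names are hypothetical."""
    reported_by_mainstream_news: bool
    has_visual_glitches: bool
    reverse_search_found_original: bool


def red_flags(checks: VerificationChecks) -> list[str]:
    """Return a short reason for each check that raises doubt."""
    flags = []
    if not checks.reported_by_mainstream_news:
        flags.append("no mainstream news coverage")
    if checks.has_visual_glitches:
        flags.append("visual glitches or inconsistencies")
    if not checks.reverse_search_found_original:
        flags.append("reverse image search found no original source")
    return flags


# Findings this article reports for the video in question.
video = VerificationChecks(
    reported_by_mainstream_news=False,
    has_visual_glitches=True,
    reverse_search_found_original=False,
)
for reason in red_flags(video):
    print("Red flag:", reason)
```

Applied to the findings reported here, all three checks raise a flag.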

This incident serves as another reminder of how AI-generated content increasingly complicates an already complex information landscape, particularly around controversial and politically charged topics that attract significant public attention.

As of publication, the account that originally shared the video remains active, continuing to post what it claims are revelations from “Epstein files” without providing verification or sourcing for the materials.

13 Comments

Fascinating. I’m glad digital forensics experts were able to quickly identify this purported Epstein video as AI-generated. Faked or manipulated media can be so damaging, it’s good to see these detection tools being effective.

Yes, the technical anomalies they identified, like the inconsistent time stamp distortion, are telltale signs of AI-generated content. Important to fact-check and not spread misinformation, even about controversial figures.

This is a valuable cautionary tale about the risks of AI-generated content. While the technology continues to advance, it’s crucial that we remain vigilant and rely on rigorous verification processes to discern fact from fiction, especially on sensitive topics.

I appreciate the thoroughness of the investigation here. Verifying the authenticity of multimedia content is so important, especially when it involves allegations against high-profile individuals. Kudos to the experts for their diligence.

It’s troubling to see the proliferation of AI-generated misinformation, even around individuals like Epstein who have already been the subject of so much controversy. Fact-checking and debunking efforts like this are crucial to upholding the truth.

This is a good reminder that not everything we see online, even if it looks convincing, can be taken at face value. The rapid advancement of AI-generated content makes it increasingly challenging to discern fact from fiction. Continued vigilance is clearly required.

Yes, the ability of AI to produce such realistic-looking yet fabricated media is a real concern. Robust verification processes will be essential to maintain trust in information, especially around sensitive topics.

This is a good example of how advanced AI can now create highly realistic yet fabricated media content. It underscores the need for robust verification processes to maintain the integrity of information, especially around sensitive topics.

Absolutely. The fact that this video was flagged as 93% likely to be AI-generated is quite impressive. Detection tools like this will be crucial going forward.

I’m glad to see this video quickly debunked as AI-generated. It’s a stark reminder that not everything we see online, even if it appears convincing, can be trusted. Maintaining the integrity of information is vital, especially around controversial figures and events.

Yes, the ability of AI to create such realistic-looking yet fabricated content is truly concerning. Robust verification processes will be essential going forward to combat the spread of misinformation.

The technical details provided, like the inconsistent time stamp distortion, are really fascinating. I’m glad the experts were able to leverage advanced AI detection tools to quickly identify this footage as fabricated. Maintaining the integrity of information is vital.

Absolutely. The ability of these detection tools to pinpoint telltale signs of AI-generation is quite impressive. It’s an important safeguard against the spread of misinformation, even around high-profile figures.