Government Faces Criticism Over Selective Application of Singapore’s Anti-Falsehoods Law

Questions are mounting over the Singapore government’s decision not to invoke its online falsehoods legislation against a sophisticated AI-generated disinformation campaign targeting Prime Minister Lawrence Wong, despite wielding the same law aggressively against domestic critics and independent media.

The Protection from Online Falsehoods and Manipulation Act (POFMA), passed in May 2019, was presented to Parliament as a shield against coordinated foreign disinformation campaigns. Then-Law Minister K Shanmugam emphasized that Singapore was “a specific and vulnerable target” for countries seeking to “create deep internal divisions and keep us in a permanent state of internal dissension.”

During parliamentary debates, government ministers repeatedly stressed that POFMA’s “primary focus” was not individual citizens but “the larger tech platforms” and those deliberately creating and disseminating falsehoods at scale.

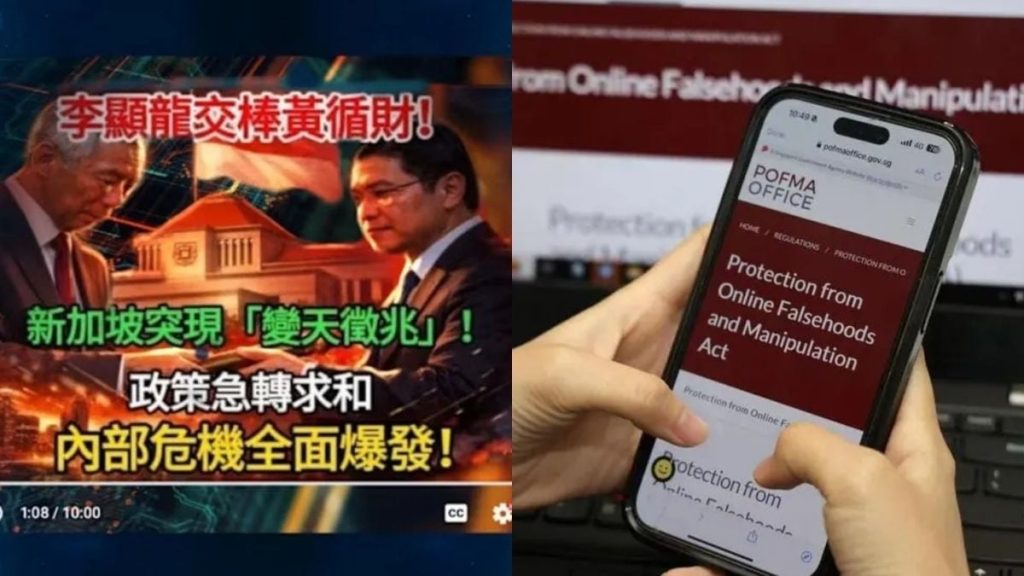

Yet when Singapore recently faced precisely such a threat – nearly 300 AI-generated Chinese-language videos featuring deepfake avatars, created in coordinated 20-minute windows, amassing millions of views, and targeting the Prime Minister – the government opted not to use POFMA.

When questioned in Parliament about this decision, Minister for Digital Development and Information Josephine Teo explained that YouTube had already removed most of the accounts, making POFMA action unnecessary.

This justification stands in stark contrast to the government’s approach toward individual Singaporeans. In September 2025, TikTok user Jay Ish’haq Rajoo received a POFMA correction direction for a video he had already removed within 24 hours of posting. The direction came 12 days after his original post, long after the content had ceased circulating.

This was not Rajoo’s first encounter with the law. In August 2023 alone, he received three separate correction directions from three different ministers within a single week, followed by a conditional warning in July 2024.

The inconsistency is difficult to ignore. A private citizen whose content had already been removed faced formal legal action, while a coordinated foreign-linked operation targeting the Prime Minister with fabricated narratives escaped the same scrutiny because the platform had removed most of its accounts.

According to the POFMA Office’s data, the law has been invoked in 89 cases since its inception, resulting in 143 Correction Directions and numerous other enforcement actions. The overwhelming majority of these cases targeted domestic entities: politicians, independent media outlets, activists, and individual content creators.

Shanmugam, who championed the law by warning of foreign interference, became its most prolific user, personally issuing directions in 20 of the 89 cases. During his tenure, the Ministries of Law and Home Affairs collectively accounted for 36 cases, over 40% of the total. Not one targeted a foreign actor, coordinated network, or AI-generated operation.

The data also undermines the government’s key justification for placing POFMA powers with ministers rather than courts – speed. While officials insisted in 2019 that falsehoods moved too quickly for judicial review, in practice, the average gap between an offending post and a POFMA direction was 17.3 days, with a median of five days.

Only one direction was issued on the same day as the original content, and just 15 were issued within 24 hours – most during the COVID-19 pandemic. Many directions took weeks: 35 days for The Online Citizen regarding AIM software, 32 days for former Non-Constituency MP Yee Jenn Jong on the same matter, and 30 days for content about a death row inmate.

POFMA also functions as a financial weapon. Sites that accumulate three or more directions within six months can be designated as "Declared Online Locations," which prohibits them from carrying paid content or earning advertising revenue in Singapore. This mechanism has been deployed systematically against independent media organizations, including The Online Citizen (designated twice), its successor Gutzy Asia, Kenneth Jeyaretnam's publication, and the Transformative Justice Collective.

Perhaps most striking is the reversal in government messaging. Having insisted in 2019 that public discernment alone was insufficient against sophisticated disinformation and that executive powers were therefore required, officials now suggest that a discerning public consulting official sources is an adequate defense against the AI campaign targeting the Prime Minister.

The asymmetry across 89 cases paints a troubling picture: a law that appears far better calibrated to protect the government from domestic criticism than to shield Singaporeans from foreign disinformation – precisely the opposite of what Parliament was promised when POFMA was enacted.

As AI-powered disinformation capabilities grow increasingly sophisticated worldwide, Singapore’s selective enforcement raises serious questions about whether its legal framework is actually serving its stated purpose, or simply reinforcing governmental control over domestic discourse.
